OpenAI Codex Background Agents: Enterprise Power in 2026

OpenAI has expanded Codex into a more agentic, enterprise-ready assistant that can run in the background on users’ machines, interact with local and web apps, and integrate with third-party tools. These changes shift Codex from a reactive coding helper into a proactive collaborator that can carry out auxiliary tasks, parallel workflows and clerical automation while developers stay focused on core work.

What are OpenAI Codex background agents?

Background agents are autonomous processes powered by Codex that operate on a user’s desktop without interrupting active work. They click, type, open applications and interact with web pages on behalf of users. Unlike traditional single-session assistants, these agents can run multiple parallel tasks on a Mac or PC and coordinate activities across apps and services—enabling a new class of background automation for development teams and product designers.

Key capabilities

- Local desktop control: Agents can open and manipulate native apps, file systems and development environments.

- Parallel tasking: Multiple agents run concurrently, performing supporting work without blocking the user’s active workflows.

- In-app browsing: Agents can navigate and interact with web interfaces to complete front-end tests, data entry or QA steps.

- Memory and context: A memory feature, currently in preview, stores past session context so agents can recall project specifics and user preferences.

- Plugin integrations: Codex connects with third-party tools and task systems to read and write issues, pull requests and calendar items.

How can teams use background agents?

Background agents are designed to be task-focused helpers that free engineers and product staff from repetitive chores. Practical use cases include:

- Frontend QA and regression testing: Agents can load web pages, run test scenarios and capture screenshots while developers continue coding.

- Automated app validation: For apps without public APIs, agents can exercise UI paths to validate behavior, gather logs and report errors.

- Clerical orchestration: Agents consolidate Slack threads, calendar events and issue trackers into daily to-do lists or summaries.

- Prototype asset generation: Codex’s new image-generation abilities can produce slide visuals, mockups and product concepts on demand.

- Parallel development tasks: While a developer implements features, agents can create test fixtures, update documentation and submit minor PRs.

These patterns turn Codex from a prompt-driven coding assistant into an ambient collaborator that helps teams ship faster.

What can Codex background agents do?

Codex background agents can operate desktop applications, interact with web interfaces, run multiple parallel tasks, recall prior session details through a memory feature, and use plugin integrations to manage work across tools like issue trackers and chat platforms.

Why this matters for enterprise adoption

Enterprises prioritize tools that reduce context switching, enforce repeatable processes and improve compliance. Background agents align with these needs by:

- Reducing interruptions: Teams can offload routine operations to agents and retain focus on high-impact work.

- Standardizing tasks: Agents follow scripted procedures consistently, improving reproducibility across environments.

- Integrating with toolchains: Plugin support connects Codex to existing developer workflows, making adoption incremental rather than disruptive.

- Scaling assistance: Parallel agents let organizations distribute low-touch automation across many projects simultaneously.

For organizations evaluating agentic AI, this approach provides a path from pilot to production by tying adoption to day-to-day productivity gains and measurable ROI.

How does Codex compare to other agentic coding tools?

Agentic coding tools have converged on similar capabilities (desktop control, parallel tasking and integrations), but they vary in implementation details such as security, latency and ecosystem support. Some competing systems focused earlier on remote desktop automation and agent orchestration; OpenAI’s updates show a strategic push to bring Codex to parity while emphasizing enterprise-ready features like plugin connectivity and memory persistence.

For readers tracking agent design and rollout strategies, compare this move to broader conversations about controlled model releases and feature gating in the industry. Additional context about agent SDKs and secure agentic deployments can be found in our coverage of Agents SDK Enhancements: Secure Agentic AI for Enterprises and the architecture patterns described in Enterprise AI Agents: An Agentic AI Operating System.

Security and governance considerations

Bringing autonomous agents onto corporate desktops raises several security, privacy and governance questions that teams must address before broad deployment:

Access control and least privilege

Agents should run with strictly scoped permissions. Enterprises must limit what an agent can access on disk, which applications it may control, and what external services it can call.
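
One way to picture scoped permissions is a small allowlist that is consulted before every agent action. The sketch below is illustrative only: `AgentPolicy` and its fields are hypothetical names, not part of any Codex API, and a real deployment would rely on operating-system or sandbox enforcement rather than an in-process check.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical allowlist for one agent; not part of any Codex API."""

    allowed_dirs: tuple = ()
    allowed_apps: frozenset = frozenset()
    allowed_hosts: frozenset = frozenset()

    def can_read(self, path: str) -> bool:
        target = PurePosixPath(path)
        # PurePosixPath does not resolve "..", so reject it to block escapes.
        if ".." in target.parts:
            return False
        return any(
            target.is_relative_to(PurePosixPath(d)) for d in self.allowed_dirs
        )

    def can_control(self, app: str) -> bool:
        return app in self.allowed_apps

    def can_call(self, host: str) -> bool:
        return host.lower() in self.allowed_hosts


# Example policy: one project directory, one app, one external host.
policy = AgentPolicy(
    allowed_dirs=("/home/dev/project",),
    allowed_apps=frozenset({"browser"}),
    allowed_hosts=frozenset({"issues.example.com"}),
)
```

Anything not listed is denied by default, which is the least-privilege stance described above.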

Auditability and provenance

Every agent action needs an audit trail: who initiated it, what commands were executed, and which artifacts were changed. This enables traceability for debugging and compliance.
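
As a rough sketch, an audit entry could capture the three items above (initiator, command, changed artifacts) and link each record to the previous one by hash so that later tampering is detectable. Hash chaining is our assumption here, not something the announcement describes, and `audit_record` and `verify_chain` are hypothetical helper names.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    # Serialize with sorted keys so the hash is stable across runs.
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def audit_record(initiator, command, artifacts, prev_hash=""):
    """Build one audit entry linked to the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "initiator": initiator,
        "command": command,
        "artifacts": sorted(artifacts),
        "prev_hash": prev_hash,
    }
    entry["hash"] = _digest(entry)  # computed before "hash" is added
    return entry


def verify_chain(entries) -> bool:
    """Return True if every entry is unmodified and correctly linked."""
    prev = ""
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Editing any field of a stored entry changes its digest, so `verify_chain` fails from that entry onward.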

Data handling and memory retention

The memory feature improves continuity but also increases data exposure risk. Organizations must be able to configure retention policies, redact sensitive context and opt out of persistent storage where necessary.
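
A minimal sketch of what a retention window plus redaction pass might look like, assuming stored context is available as simple records with a `text` and a `created` timestamp. The record shape, the 30-day default and the secret patterns are all assumptions for illustration; the source does not describe how Codex stores memory.

```python
import re
from datetime import datetime, timedelta, timezone

# Illustrative pattern only; real secret detection needs a broader rule set.
SECRET_PATTERN = re.compile(
    r"(api[_-]?key|token|password)\s*[:=]\s*\S+", re.IGNORECASE
)


def redact(text: str) -> str:
    """Replace values that follow a secret-like key with a placeholder."""
    return SECRET_PATTERN.sub(lambda m: f"{m.group(1)}=[REDACTED]", text)


def prune(memories, now, retention=timedelta(days=30)):
    """Drop entries older than the retention window and redact the rest."""
    cutoff = now - retention
    return [
        {**m, "text": redact(m["text"])}
        for m in memories
        if m["created"] >= cutoff
    ]
```

Running `prune` on a schedule gives a simple, reviewable enforcement point for whatever retention window the organization sets.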

Approval and human-in-the-loop checkpoints

High-impact actions—merging code, deploying to production, or modifying access controls—should require explicit human approval. Agents can be useful for draft work and prep, but final decisions should remain with authorized personnel.
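
The checkpoint idea can be sketched as a thin wrapper that refuses to run a high-impact action unless a human approver is named. The action names, `ApprovalRequired` and `run_action` are hypothetical; in practice the approval would come from a review system rather than a function argument.

```python
class ApprovalRequired(Exception):
    """Raised when a high-impact action lacks a named human approver."""


# Hypothetical labels for the high-impact actions listed above.
HIGH_IMPACT_ACTIONS = frozenset(
    {"merge_code", "deploy_production", "modify_access"}
)


def run_action(action, handler, approved_by=None):
    """Run handler() only if the action is low-impact or explicitly approved."""
    if action in HIGH_IMPACT_ACTIONS and not approved_by:
        raise ApprovalRequired(f"{action} needs explicit human approval")
    return handler()
```

Draft work such as `run_action("draft_pr", make_draft)` goes straight through, while `run_action("deploy_production", deploy)` raises until an approver is supplied.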

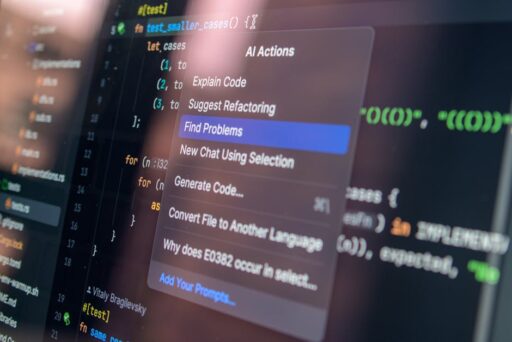

Developer experience: tooling and integrations

Codex’s plugin ecosystem and in-app browser aim to bridge gaps between LLM capabilities and real-world developer workflows. Examples of integration benefits include:

- Automated issue triage: Agents can read issues from trackers and suggest labels or assign owners.

- Calendar-aware task generation: By examining calendar events and messages, agents can prioritize a developer’s day.

- Local environment orchestration: Agents can prepare dev environments, run scaffolding scripts and seed test data.

These integrations reduce the friction of context switching and make it easier to incorporate AI assistance without changing existing platforms. For more on how agents can be embedded into workflows, see our piece on AI Agent Workflows.

Practical rollout checklist for engineering leaders

To adopt Codex background agents safely and effectively, engineering leaders should follow a staged approach:

- Define low-risk pilot tasks (e.g., screenshot-based QA, documentation updates).

- Establish permission boundaries and sandbox execution environments.

- Enable audit logging and explicit approval gates for critical actions.

- Test memory retention policies and implement data redaction workflows.

- Measure developer time saved and error rate impact to build a business case for expansion.

This sequence balances rapid experimentation with operational safety.

Pricing and procurement implications

OpenAI’s announcement includes a pay-as-you-go option for enterprise and business customers, giving teams flexible procurement choices. Variable pricing models can lower the barrier for trial and allow organizations to scale spending with usage—especially important for background agents that may run many small tasks continuously.

Procurement teams should evaluate:

- Cost per agent-hour vs. productivity gains

- Bundled plugin or integration fees

- Data retention and storage charges for memory features

- Support and SLA terms for enterprise deployments
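
To make the first comparison concrete, a back-of-the-envelope calculation can weigh agent spend against the value of developer time saved. Every figure in the example call is invented for illustration and is not OpenAI pricing; the function names are likewise hypothetical.

```python
def monthly_agent_spend(agent_hours, cost_per_agent_hour, fixed_fees=0.0):
    """Usage-based spend plus any flat plugin, storage or support fees."""
    return agent_hours * cost_per_agent_hour + fixed_fees


def net_monthly_value(spend, dev_hours_saved, dev_hourly_cost):
    """Value of developer time saved minus what the agents cost."""
    return dev_hours_saved * dev_hourly_cost - spend


def break_even_hours(spend, dev_hourly_cost):
    """Developer hours that must be saved for the spend to pay for itself."""
    return spend / dev_hourly_cost


# Made-up inputs: 200 agent-hours at 2.00 each, plus 500 in flat fees.
spend = monthly_agent_spend(200, 2.0, fixed_fees=500.0)
net = net_monthly_value(spend, dev_hours_saved=40, dev_hourly_cost=90.0)
hours_needed = break_even_hours(spend, dev_hourly_cost=90.0)
```

With these placeholder inputs the spend is 900, the net value is 2,700 and the break-even point is 10 developer hours saved per month; swapping in real quotes and measured time savings turns the sketch into a procurement worksheet.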

Risks and open questions

Despite clear productivity upside, several open questions remain:

- How will enterprises prove compliance when agents interact with regulated data?

- What controls are necessary to prevent inadvertent data exfiltration via agent-driven browsing or plugin access?

- How will teams detect and mitigate agent errors that propagate into CI/CD or production systems?

Addressing these concerns will determine whether background agents become broadly accepted in regulated industries like finance, healthcare and defense.

Five best practices to get started

- Start with read-only tasks to evaluate behavior and audit logs.

- Use ephemeral, sandboxed environments for agent testing.

- Limit plugins to a vetted allowlist and require narrowly scoped OAuth permissions for each app.

- Set explicit memory retention windows and periodic reviews of stored context.

- Train staff on agent failure modes and establish rollback procedures.
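
The plugin allowlist practice can be sketched as a lookup that rejects unknown plugins and any scope beyond what was vetted. The plugin names and scope strings below are placeholders, not real Codex plugin identifiers or a specific provider's OAuth scopes.

```python
# Hypothetical vetted plugins and the scopes each one may request.
PLUGIN_ALLOWLIST = {
    "issue_tracker": {"issues:read", "issues:write"},
    "calendar": {"events:read"},
}


def check_plugin_request(plugin, requested_scopes):
    """Return (allowed, excess_scopes) for a plugin's scope request."""
    requested = set(requested_scopes)
    vetted = PLUGIN_ALLOWLIST.get(plugin)
    if vetted is None:
        # Unknown plugin: deny everything it asked for.
        return False, requested
    excess = requested - vetted
    return not excess, excess
```

Returning the excess scopes alongside the decision gives reviewers something specific to log or escalate when a request is denied.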

Looking ahead: what this means for the AI developer ecosystem

Background agents represent a maturation of agentic AI: tools are moving from isolated assistants to integrated collaborators that participate in ongoing workflows. This transition will accelerate the adoption of agentic patterns across tooling, from IDEs to CI systems and product management platforms.

As vendors iterate, expect greater emphasis on secure SDKs for agent orchestration, richer integration marketplaces and tighter governance primitives. For readers interested in the broader evolution of AI-enabled agents, our coverage of secure agent SDKs and enterprise architectures offers a deeper technical perspective: Agents SDK Enhancements and Enterprise AI Agents.

Conclusion

OpenAI’s move to give Codex background agents, an in-app browser, memory and expanded plugin support signals a clear push toward enterprise-first, agentic workflows. The combination of local automation, integrated tool access and context-aware memory can materially reduce repetitive work and improve developer productivity—if organizations adopt appropriate governance, auditing and permissioning controls.

For engineering leaders, the practical next steps are to pilot low-risk automation, instrument thorough logging, and validate ROI before scaling. With careful rollout, background agents can become reliable teammates that handle routine tasks while humans focus on design, architecture and innovation.
