Measuring AI Developer Productivity: Metrics That Matter

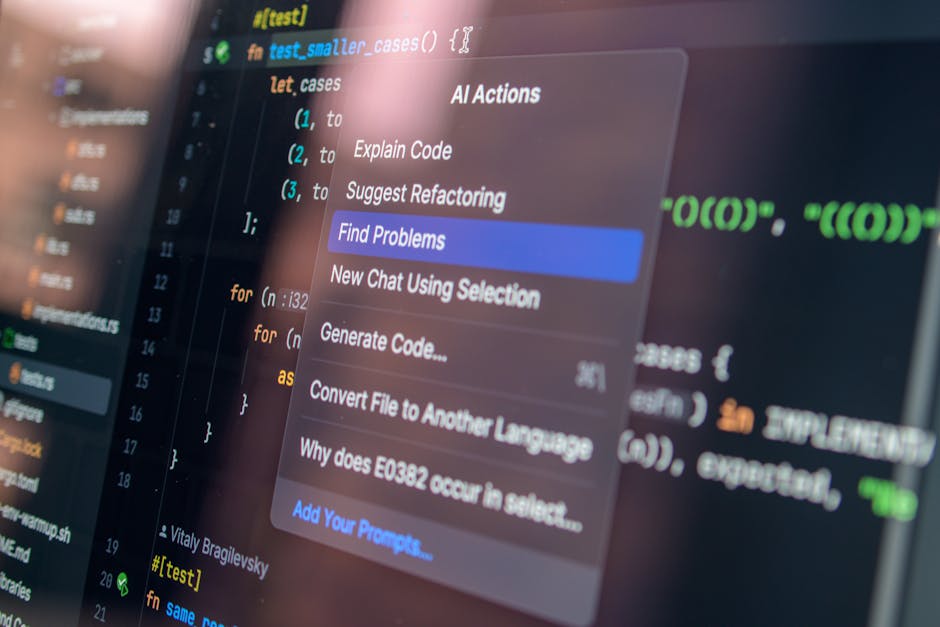

As AI coding agents reshape software development, engineering leaders face a new measurement challenge: how do you evaluate productivity when tools generate large volumes of code? Volume-based metrics such as lines of code, raw commit counts, or tokens consumed can be misleading. To drive efficiency and quality, teams must shift from counting inputs to measuring outcomes that map to real value.

Why many traditional metrics break down with AI-assisted coding

There’s a management adage: what you measure is what you get. When measurement frameworks were built around human-only workflows, they focused on developer-centric inputs (hours worked, commits made, lines of code). AI agents change the input/output relationship in two important ways:

- Agents generate volume quickly: AI can produce large amounts of code, suggestions, and boilerplate, inflating superficial productivity metrics.

- Generated code often requires human review and iteration: initial acceptance rates can overstate long-term retention because generated code may need rewrites, refactoring, or bug fixes later.

Measuring an input (how many tokens were consumed, how many suggestions were produced) is not the same as measuring value (how much stable, correct, and maintainable code ships to customers). Teams that continue to reward inputs risk encouraging behaviors that increase short-term throughput but add long-term technical debt.

What are the best metrics to measure AI developer productivity?

Engineering managers should define a balanced metrics set that captures speed, quality, and cost. The following metrics can be combined into a dashboard to assess the true impact of AI coding tools.

1. Net Delivered Value (NDV)

Definition: the business value of shipped changes after accounting for rework and regressions.

How to calculate: assign a business value or priority score to each work item, then subtract the estimated cost of rework (engineering time, bug severity) attributable to that item over a defined window (e.g., 30–90 days).
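The calculation above can be sketched in a few lines. This is a minimal illustration, not a standard formula: the `WorkItem` fields, the `hour_cost` and `severity_cost` weights, and the sample values are all assumptions chosen to make the arithmetic visible.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    item_id: str
    value_score: float          # business value or priority score (assumed scale)
    rework_hours: float         # rework attributed to this item within the window
    regression_severity: float  # summed severity points of regressions traced to it


def net_delivered_value(items, hour_cost=1.0, severity_cost=1.0):
    """Sum of value scores minus the estimated rework and regression cost."""
    total = 0.0
    for item in items:
        rework_cost = (item.rework_hours * hour_cost
                       + item.regression_severity * severity_cost)
        total += item.value_score - rework_cost
    return total


# Hypothetical items observed over one window.
items = [
    WorkItem("FEAT-1", value_score=8.0, rework_hours=2.0, regression_severity=0.0),
    WorkItem("FEAT-2", value_score=5.0, rework_hours=6.0, regression_severity=1.0),
]
ndv = net_delivered_value(items, hour_cost=0.5, severity_cost=2.0)
print(ndv)  # FEAT-1 contributes 7.0, FEAT-2 contributes 0.0
```

The weights convert hours and severity into the same units as the value score, so they need to be agreed with product and finance before the number is compared across teams.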

2. True Acceptance Rate (TAR)

Definition: the percentage of AI-suggested code that remains unchanged and in production after a predetermined review period.

Why it matters: initial code acceptance may be high, but revisions in the following weeks reveal the true retention of generated contributions.
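One way to compute TAR, assuming you can already count how many accepted lines are still unchanged at the end of the window (for example from blame data). The `Suggestion` record, its field names, and the 30-day default are illustrative assumptions. Suggestions younger than the window are excluded so that fresh code does not inflate the rate.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Suggestion:
    lines_accepted: int   # lines accepted at review time
    accepted_on: date
    lines_surviving: int  # accepted lines still unchanged at the end of the window


def true_acceptance_rate(suggestions, as_of, window_days=30):
    """Fraction of accepted AI lines that survived the full window.

    Returns None when no suggestion is old enough to be judged.
    """
    matured = [s for s in suggestions
               if (as_of - s.accepted_on).days >= window_days]
    accepted = sum(s.lines_accepted for s in matured)
    if accepted == 0:
        return None
    surviving = sum(s.lines_surviving for s in matured)
    return surviving / accepted


# Hypothetical data: the third suggestion is only 10 days old and is skipped.
history = [
    Suggestion(100, date(2024, 5, 1), 60),
    Suggestion(50, date(2024, 5, 15), 45),
    Suggestion(200, date(2024, 6, 20), 200),
]
tar = true_acceptance_rate(history, as_of=date(2024, 6, 30))
print(f"{tar:.0%}")  # 105 surviving lines out of 150 matured lines
```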

3. Code Churn Adjusted Throughput

Definition: throughput (merged PRs or story completions) discounted by churn (the share of lines deleted or rewritten within a window).

Formula example: adjusted_throughput = merged_prs * (1 - churn_ratio), where churn_ratio is the fraction of merged lines deleted or rewritten within the window. This penalizes high-volume, low-stability output.
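A small worked example of the formula, using made-up numbers for two hypothetical teams. Defining `churn_ratio` as churned lines divided by lines added, capped at 1, is an assumption; other definitions are possible.

```python
def churn_ratio(lines_added, lines_churned):
    """Share of merged lines deleted or rewritten within the window, capped at 1."""
    if lines_added == 0:
        return 0.0
    return min(lines_churned / lines_added, 1.0)


def adjusted_throughput(merged_prs, ratio):
    """merged_prs * (1 - churn_ratio), as in the formula above."""
    return merged_prs * (1 - ratio)


# Team A merges more PRs, but 40% of its lines are rewritten within the window.
team_a = adjusted_throughput(40, churn_ratio(10_000, 4_000))
# Team B merges fewer PRs, with only 10% churn.
team_b = adjusted_throughput(30, churn_ratio(6_000, 600))

print(round(team_a, 2), round(team_b, 2))
```

With these numbers Team A's 40 merged PRs count for roughly 24 and Team B's 30 count for roughly 27, so the team with the lower raw count comes out ahead once churn is considered.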

4. Review Overhead and QA Load

Definition: additional reviewer time and QA cycles required per AI-generated contribution versus human-authored work.

Why it matters: if AI increases reviewer hours or CI cycles disproportionately, the net productivity gain may be negative.
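A simple way to express review overhead is as a ratio of mean reviewer time per AI-originated PR to mean reviewer time per human-authored PR. The sketch below assumes you already have reviewer minutes per PR from your review tool; the sample values are invented.

```python
from statistics import mean


def review_overhead_ratio(ai_review_minutes, human_review_minutes):
    """Mean reviewer minutes per AI-originated PR divided by the human-authored mean.

    Values above 1.0 mean AI-originated PRs cost more reviewer time on average.
    Returns None when either sample is empty or the human baseline is zero.
    """
    if not ai_review_minutes or not human_review_minutes:
        return None
    baseline = mean(human_review_minutes)
    if baseline == 0:
        return None
    return mean(ai_review_minutes) / baseline


# Hypothetical reviewer minutes per PR.
ratio = review_overhead_ratio([30, 45, 60], [20, 25, 30])
print(ratio)  # mean 45 minutes vs. mean 25 minutes
```

A mean is sensitive to a few very long reviews; a median may be a better choice if your review times are heavily skewed.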

5. Cost per Delivered Unit

Definition: total cost (compute and token spend, agent licenses, developer review time) divided by stable delivered units (stories, user-facing features, bug fixes).

This ties AI consumption to economic ROI so you can compare token-heavy workflows against alternatives.
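As a rough sketch of the arithmetic, with every figure below invented for illustration and the parameter names chosen for this example only:

```python
def cost_per_delivered_unit(token_cost, license_cost, review_hours,
                            hourly_rate, stable_units):
    """Total AI-related cost divided by units that stayed stable in production.

    Returns None when nothing stable was delivered, since the ratio is undefined.
    """
    if stable_units == 0:
        return None
    total_cost = token_cost + license_cost + review_hours * hourly_rate
    return total_cost / stable_units


# Hypothetical month: 1,200 in token spend, 800 in licenses,
# 50 review hours at 80 per hour, and 20 stable delivered units.
cost = cost_per_delivered_unit(1200.0, 800.0, 50, 80.0, 20)
print(cost)  # (1200 + 800 + 4000) / 20
```

Counting only stable units in the denominator is what ties this metric back to retention: work that is later reverted raises the cost per unit instead of lowering it.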

How should teams instrument AI coding agents to collect meaningful data?

Observation is the first step to improvement. Agent tooling can emit rich metadata, but that metadata is only useful once it is captured, normalized, and correlated with downstream outcomes.

- Track provenance: record whether a change originated from an AI suggestion, a human author, or a hybrid workflow.

- Log metadata: prompt context, token/compute usage, model version, time to first acceptance, and the reviewer identity.

- Correlate with outcomes: link generated suggestions to later defects, rework, or customer-reported issues within a 30–90 day window.

Collecting this metadata enables the calculation of the metrics above (TAR, churn-adjusted throughput, cost per delivered unit) and helps identify which prompts, models, or workflows produce durable value.
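The fields listed above could be captured in a single record per change. The schema below is a hypothetical sketch: the class name, field names, and identifiers are assumptions, not the format of any particular agent or analytics platform.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum
from typing import List, Optional


class Origin(Enum):
    AI = "ai"
    HUMAN = "human"
    HYBRID = "hybrid"


@dataclass
class ChangeRecord:
    change_id: str
    origin: Origin
    reviewer: str
    model_version: Optional[str] = None       # None for human-authored changes
    tokens_used: int = 0
    seconds_to_first_acceptance: Optional[float] = None
    prompt_context_ref: Optional[str] = None  # pointer to stored prompt context
    linked_defect_ids: List[str] = field(default_factory=list)  # filled in later


def to_json_line(record):
    """Serialize one record as a single JSON line for a log or event stream."""
    payload = asdict(record)
    payload["origin"] = record.origin.value  # store the enum as a plain string
    return json.dumps(payload, sort_keys=True)


# Hypothetical record for an AI-originated change.
record = ChangeRecord(
    change_id="PR-101",
    origin=Origin.AI,
    reviewer="reviewer-a",
    model_version="model-x",
    tokens_used=1840,
    seconds_to_first_acceptance=95.0,
    prompt_context_ref="prompts/PR-101.txt",
)
print(to_json_line(record))
```

Storing a reference to the prompt context instead of the prompt text itself keeps records small and makes it easier to apply separate access controls to prompts that contain sensitive code.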

What patterns are emerging from engineering analytics?

Across organizations adopting AI coding tools, several consistent patterns appear:

- Increased volume: teams report more PRs and a higher rate of code generation, especially for routine scaffolding and boilerplate.

- Higher early acceptance: many AI suggestions are initially accepted by engineers, particularly by less experienced developers.

- Elevated churn: a notable share of generated code is revised or deleted in subsequent weeks, which erodes the apparent productivity gains.

- Rising review burden: code reviews and QA cycles can grow as reviewers verify generated logic, dependencies, and security implications.

These signals suggest that AI boosts velocity, but velocity without durability may simply shift effort downstream into review and maintenance. To convert volume into value, teams need to measure both short- and medium-term outcomes.

How can engineering managers diagnose problem areas?

Use targeted diagnostics to find where AI is adding value or noise. Ask:

- Which repositories and teams have the highest churn-to-volume ratio?

- Do junior engineers accept more AI suggestions than seniors, and does that correlate with higher rewrite rates?

- Are certain prompt types or model versions consistently producing lower-quality output?

Pair these questions with exploratory queries in your analytics platform to prioritize interventions (training, guardrails, or adjusted workflows).
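The first question, for instance, reduces to a grouped ratio. The sketch below assumes each change has been exported as a `(repo, lines_added, lines_churned)` tuple; the repository names and counts are made up.

```python
from collections import defaultdict


def churn_to_volume_by_repo(changes):
    """Rank repositories by churned lines divided by lines added, highest first.

    `changes` is an iterable of (repo, lines_added, lines_churned) tuples.
    Repositories with no added lines are skipped.
    """
    added = defaultdict(int)
    churned = defaultdict(int)
    for repo, lines_added, lines_churned in changes:
        added[repo] += lines_added
        churned[repo] += lines_churned
    ratios = {repo: churned[repo] / added[repo]
              for repo in added if added[repo] > 0}
    return sorted(ratios.items(), key=lambda pair: pair[1], reverse=True)


# Hypothetical export covering one window.
changes = [
    ("billing", 1000, 500),
    ("billing", 1000, 300),
    ("web", 4000, 400),
    ("infra", 500, 25),
]
ranking = churn_to_volume_by_repo(changes)
for repo, ratio in ranking:
    print(f"{repo}: {ratio:.2f}")
```

The same grouping works for the other questions by swapping the key, for example grouping by author seniority or by model version instead of by repository.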

How should teams adapt workflows to capture the benefits of AI without increasing debt?

Adoption strategies that worked for other disruptive developer technologies apply here, with some agent-specific adjustments:

Practical steps

- Start with low-risk tasks: use agents for tests, docs, and scaffolding where cost of error is low.

- Define a staging window: require human review before merging AI-generated changes into main branches, with post-merge monitoring for a defined period.

- Measure and iterate: pilot dashboards for TAR, churn-adjusted throughput, and cost per delivered unit; iterate rules and prompts based on data.

- Invest in training: upskill reviewers and junior engineers on prompt engineering, security checks, and expected review depth.

- Enforce guardrails: CI checks, linters, and automated tests should run on AI-generated patches before human review.

What tooling and analytics should you integrate?

To implement the metrics above, combine three layers:

- Agent telemetry: capture model, prompt, and token usage.

- Code lifecycle data: PRs, merges, issue links, test results, and rollback events.

- Business mapping: link code changes to customer-facing metrics or milestone delivery.

Many engineering analytics platforms now support AI-specific metadata ingestion. Pairing those capabilities with robust automated validation (see our coverage of Automated Code Validation) and code verification pipelines can reduce downstream churn and surface the highest-value use cases.
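Combining the three layers mostly comes down to joining them on a shared key. The sketch below assumes each layer is available as a mapping from a PR identifier to a dict of fields; the key name, field names, and sample values are hypothetical.

```python
def join_layers(telemetry, lifecycle, business):
    """Merge the three layers into one row per PR.

    Each argument maps a PR id to a dict of fields. Only PRs present in the
    code lifecycle data are kept; missing telemetry or business fields are
    simply absent from the row.
    """
    joined = {}
    for pr_id, lifecycle_fields in lifecycle.items():
        row = {"pr_id": pr_id}
        row.update(telemetry.get(pr_id, {}))
        row.update(lifecycle_fields)
        row.update(business.get(pr_id, {}))
        joined[pr_id] = row
    return joined


# Hypothetical data for two PRs; PR-102 was human-authored and has no telemetry.
telemetry = {"PR-101": {"model_version": "model-x", "tokens_used": 1840}}
lifecycle = {
    "PR-101": {"merged": True, "rolled_back": False},
    "PR-102": {"merged": True, "rolled_back": True},
}
business = {"PR-101": {"milestone": "checkout-v2"}}

rows = join_layers(telemetry, lifecycle, business)
print(rows["PR-101"])
```

Treating lifecycle data as the spine of the join keeps human-authored PRs in the dataset, which you need as a baseline when comparing AI-originated work.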

How do senior and junior engineers differ when working with AI agents?

Experience level matters. Senior engineers typically treat agents as augmentation: they prompt more deliberately, validate assumptions, and refactor aggressively. Junior engineers often accept suggestions faster, which can increase throughput but also lead to more rewriting later. Managers should tailor guardrails, code review depth, and mentorship based on experience to avoid concentrating technical debt where it is least visible.

How long before you see reliable ROI from AI coding tools?

ROI timelines vary, but expect an initial experimentation phase of 3–6 months followed by a stabilization period where processes and dashboards are tuned. Early wins appear fastest in auxiliary work (tests, docs, formatting). Converting agent use into consistent product velocity typically requires instrumented feedback loops and governance that address churn and quality.

Checklist: implement a measurement program

- Define success: pick 3–5 outcome metrics (NDV, TAR, churn-adjusted throughput, cost per delivered unit).

- Instrument telemetry: collect provenance, token usage, model versions, and prompt context.

- Correlate outcomes: link generated contributions to defects, rework, and customer impact.

- Deploy controls: CI checks, staged merges, and review SLAs for AI-generated code.

- Iterate: use data to optimize prompts, workflows, and where agents should be applied.

If you’re building out this program, also check prior coverage on integrating AI into engineering workflows and verification: AI Code Verification: Next Phase of Software Development and AI Auto Mode for Coding: Safer Autonomous Developer Tools for deeper guidance.

How do you present these findings to executives?

Executives care about outcomes: time-to-market, defect rates, and cost efficiency. Translate technical metrics into business terms:

- Show how NDV maps to feature velocity and customer impact.

- Quantify additional QA/review costs attributed to AI adoption.

- Recommend incremental investments where data shows durable gains (e.g., test generation, secure scaffolding).

Contextualize token and compute expenditures as investments tied to measurable changes in delivered value, not as line items to be optimized in isolation.

Final thoughts: measure outcomes, not just inputs

AI coding agents are transforming how software gets written, but raw volume is not the same as durable productivity. Engineering leaders who combine telemetry, thoughtful metrics, and governance can harness AI to raise sustainable value while controlling long-term risk. Start with pilots, instrument heavily, and make decisions based on retention and business impact rather than token counts alone.

Next steps

Begin by instrumenting one team or repository with provenance tracking and an initial dashboard for TAR, churn-adjusted throughput, and cost per delivered unit. Run a 12-week pilot, iterate prompts and guardrails, and bring findings into a cross-functional review with product and QA.

Want to dive deeper? Read our related guides on Automated Code Validation, AI Code Verification, and AI Auto Mode for Coding to build a complete analytics and safety plan.

Call to action

If you lead an engineering org and are adopting AI coding agents, start a measurement sprint today: instrument provenance, baseline your churn and acceptance rates, and run a 12-week pilot.