Automated Code Validation: Taming AI-Generated Code

The rapid rise of AI-assisted development, often called “vibe coding,” has sharply increased the volume of code flowing into codebases. While AI generation speeds up feature prototyping, it also creates a new pain point for engineering teams: code overload. AI-produced code often needs careful verification, additional tests, and more CI diagnostics before it can be trusted in production. Automated code validation has emerged as a practical, scalable response: AI-driven agents validate and test changes and orchestrate CI workflows so teams can ship more safely and more quickly.

What is automated code validation and why does it matter?

Automated code validation is the practice of using automated systems, often powered by AI agents, to verify that new or modified code meets quality, security, and compatibility requirements before it is merged into production branches. Validation sits between code generation and release, serving as the gatekeeper that turns generated output into trustworthy deliverables.

Key reasons it matters:

- Volume management: AI-assisted development increases the amount of code changes to review. Automated validation scales review efforts so teams aren’t overwhelmed.

- Fewer regressions: Continuous validation reduces the chance that generated code introduces bugs or breaks integrations.

- Faster feedback loops: Developers receive actionable diagnostics sooner, keeping iteration velocity high without sacrificing quality.

- Security and compliance: Automated checks can catch insecure patterns, licensing issues, or policy violations before code is merged.

How do validation agents work?

Validation agents are specialized software components that orchestrate code-quality tasks automatically. They act as autonomous or semi-autonomous reviewers that run tests, analyze diffs, cross-check dependencies, and escalate exceptions to humans when necessary.

1. Orchestrating CI and tests

Validation agents can integrate with continuous integration systems to run targeted test suites, static analysis, linting, and end-to-end checks on generated code. Instead of triggering full CI cycles for every small change, agents can determine which tests are required based on code context and dependency graph, reducing wasted cycles and accelerating feedback.
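To make the idea of dependency-aware test selection concrete, here is a minimal sketch. The module paths, the `REVERSE_DEPS` graph, and the `TESTS_FOR` mapping are all hypothetical; a real agent would derive them from the build system or import analysis rather than hard-coding them.

```python
from collections import deque

# Hypothetical reverse-dependency graph: module -> modules that import it.
REVERSE_DEPS = {
    "billing/tax.py": ["billing/invoice.py"],
    "billing/invoice.py": ["api/checkout.py"],
    "api/checkout.py": [],
    "search/index.py": [],
}

# Hypothetical mapping from each module to the test files that cover it.
TESTS_FOR = {
    "billing/tax.py": ["tests/test_tax.py"],
    "billing/invoice.py": ["tests/test_invoice.py"],
    "api/checkout.py": ["tests/test_checkout.py"],
    "search/index.py": ["tests/test_index.py"],
}


def affected_modules(changed, reverse_deps):
    """Return the changed modules plus everything that transitively depends on them."""
    seen = set(changed)
    queue = deque(changed)
    while queue:
        module = queue.popleft()
        for dependent in reverse_deps.get(module, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


def select_tests(changed, reverse_deps=REVERSE_DEPS, tests_for=TESTS_FOR):
    """Return the sorted, de-duplicated tests covering every affected module."""
    selected = set()
    for module in affected_modules(changed, reverse_deps):
        selected.update(tests_for.get(module, []))
    return sorted(selected)
```

With this sample graph, a change to `billing/tax.py` selects the tax, invoice, and checkout tests but leaves the search tests alone, which is the saving the paragraph above describes.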

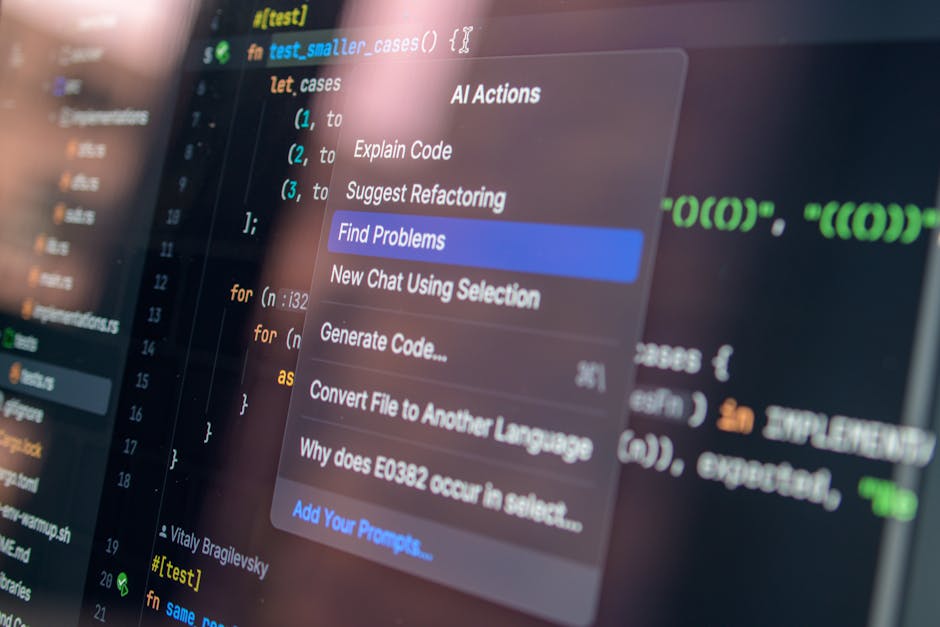

2. Dynamic code review and diagnostics

Rather than replacing human reviewers, validation agents provide diagnostic scaffolding: they annotate diffs with likely failure modes, produce prioritized issue lists, and propose fixes. This triage reduces the cognitive load on senior engineers, who can focus on architectural and design decisions.
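One way to picture the prioritized issue list is the sketch below. The `Finding` type, the severity names, and the annotation format are assumptions made for illustration, not the output of any particular tool.

```python
from dataclasses import dataclass

# Assumed severity scale; lower rank means the finding is shown first.
SEVERITY_RANK = {"error": 0, "warning": 1, "info": 2}


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    severity: str
    message: str


def prioritize(findings, limit=None):
    """Order findings by severity, then by file and line; optionally keep the top few."""
    ordered = sorted(
        findings,
        key=lambda f: (SEVERITY_RANK.get(f.severity, len(SEVERITY_RANK)), f.path, f.line),
    )
    return ordered if limit is None else ordered[:limit]


def format_annotation(finding):
    """Render one finding as a single diff-annotation line."""
    return f"{finding.path}:{finding.line} [{finding.severity}] {finding.message}"
```

Capping the list with `limit` is a deliberate choice in this sketch: a reviewer who sees the five most serious findings is more likely to act on them than one who sees fifty.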

3. Security and maintenance automation

Agents can run automated security scans, dependency vulnerability checks, and license compliance audits. They can also schedule maintenance tasks, such as dependency upgrades and refactor suggestions, and validate that those changes are safe to merge.
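As a rough illustration of a license compliance audit, the sketch below compares each dependency's declared license against an allowlist. The allowlist contents and the package names are hypothetical, and the input is assumed to be already extracted from a lockfile or package metadata.

```python
# Assumed team policy: SPDX identifiers the organization accepts.
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}


def audit_licenses(dependencies, allowed=ALLOWED_LICENSES):
    """Return (package, reason) pairs for dependencies that violate the allowlist.

    `dependencies` maps a package name to its license identifier,
    or to None when the license could not be determined.
    """
    violations = []
    for name, license_id in sorted(dependencies.items()):
        if license_id is None:
            violations.append((name, "unknown license"))
        elif license_id not in allowed:
            violations.append((name, f"license {license_id} not in allowlist"))
    return violations
```

Treating an unknown license as a violation is a conservative choice made here; a team could instead route those cases to a human for a decision.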

4. Custom agents and extensibility

Modern platforms let engineering teams create custom agents that encode team-specific policies and workflows—whether enforcing style guides, running proprietary test suites, or applying internal compliance rules. This customizability is crucial for adoption across diverse engineering orgs.
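A custom agent can be thought of as a set of named, team-specific policy functions. The sketch below shows one possible shape for that; the registry, the policy names, the `change` dictionary layout, and the `db-owners` reviewer group are all invented for illustration and do not correspond to any vendor's API.

```python
from typing import Callable, Dict, List

# A policy inspects a change and returns human-readable violations.
Policy = Callable[[dict], List[str]]
_POLICIES: Dict[str, Policy] = {}


def policy(name):
    """Decorator that registers a policy function under a name."""
    def register(func):
        _POLICIES[name] = func
        return func
    return register


@policy("no-todo-in-diff")
def no_todo(change):
    # Flag any changed file whose new content still contains a TODO marker.
    return [f"TODO left in {path}" for path, text in change["files"].items() if "TODO" in text]


@policy("migrations-need-owner-review")
def migrations_need_review(change):
    # Hypothetical rule: schema migrations require a reviewer from "db-owners".
    touches_migration = any(path.startswith("migrations/") for path in change["files"])
    if touches_migration and "db-owners" not in change.get("reviewers", []):
        return ["migration changed without db-owners review"]
    return []


def run_policies(change):
    """Run every registered policy and return its violations keyed by policy name."""
    return {name: check(change) for name, check in _POLICIES.items()}
```

Keeping each rule as a small named function makes it straightforward to review, test, and retire policies individually as the team's needs change.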

Which problems does automated validation solve?

- CI overload: Reduces unnecessary CI runs by intelligently selecting and running relevant tests.

- Review backlog: Automates routine checks so reviewers see only what requires human judgment.

- Quality erosion: Catches common anti-patterns and flaky tests before they enter main branches.

- Security drift: Detects insecure patterns in generated code and blocks risky merges.

Can automated validation replace human reviewers?

Short answer: not entirely. Automated validation is designed to reduce human workload and to automate routine, deterministic checks. Humans remain essential for nuanced decisions—design trade-offs, ambiguous requirements, and judgment calls regarding business risk. The pragmatic model is hybrid: automated agents handle the bulk of validation, escalating exceptions to people for final approval.

When to rely on automation

- Routine formatting, linting, and unit-level correctness.

- Dependency and license checks.

- Reproducible functional tests and deterministic static analysis.

When to require human oversight

- Architectural changes that affect system behavior.

- Security-sensitive code paths and policy decisions.

- Business logic where specification ambiguity exists.
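The split between automation and human oversight can be expressed as a simple routing rule. The sketch below is one possible interpretation: the path patterns and the three outcome labels are assumptions, and a real policy would likely consider more signals than file paths.

```python
from fnmatch import fnmatch

# Hypothetical patterns for code that should always get a human reviewer.
HUMAN_REVIEW_PATTERNS = ["auth/*", "payments/*", "infra/*.tf"]


def route_change(changed_paths, checks_passed, patterns=HUMAN_REVIEW_PATTERNS):
    """Decide what happens to a change after the automated checks have run."""
    if not checks_passed:
        return "blocked"
    # Note: fnmatch's "*" also matches "/" so "auth/*" covers nested paths too.
    sensitive = [p for p in changed_paths if any(fnmatch(p, pat) for pat in patterns)]
    return "human-review" if sensitive else "auto-approve"
```

A documentation-only change that passes its checks is approved automatically here, while anything under `auth/` is escalated even when every check is green.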

How to adopt automated code validation in your organization

Adopting validation agents requires both technical integration and organizational change. The roadmap below outlines recommended practices for getting started:

- Audit current pain points: Identify where CI spends the most time, which PRs trigger full builds, and what patterns cause repeated rework.

- Start small with targeted policies: Roll out validation agents for a single repository or service to validate the integration approach.

- Define guardrails and exception paths: Decide which checks are blocking and which only generate warnings.

- Measure impact: Track metrics such as CI run time, mean time to merge, post-release defects, and reviewer time saved.

- Iterate and expand: Scale agent coverage across repositories and teams, embedding custom agents for team-specific needs.
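The distinction between blocking and advisory checks in the roadmap above can be sketched as a small gate function. The check names are hypothetical, and the result format is just one convenient shape.

```python
def evaluate_gate(results, blocking):
    """Split failed checks into merge blockers and advisory warnings.

    `results` maps a check name to True (passed) or False (failed);
    `blocking` is the set of check names that must pass before merge.
    """
    failed = sorted(name for name, passed in results.items() if not passed)
    blockers = [name for name in failed if name in blocking]
    warnings = [name for name in failed if name not in blocking]
    return {"mergeable": not blockers, "blockers": blockers, "warnings": warnings}
```

For example, with `unit-tests` and `license` marked as blocking, a failing `lint` check only produces a warning, while a failing `unit-tests` check stops the merge.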

Recommended metrics to track

- CI runtime and cost per merge

- PR review time and number of iterations

- Frequency of CI failures attributable to generated code

- Post-release defect rate
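Several of these metrics can be computed from basic pull request records. The sketch below assumes a hypothetical record layout with `opened_at`, `merged_at`, `ci_minutes`, and `iterations` fields; real data would come from your code host and CI provider.

```python
from datetime import datetime
from statistics import mean


def summarize(prs):
    """Compute simple merge and CI metrics from a list of PR records."""
    merged = [p for p in prs if p["merged_at"] is not None]
    hours = [(p["merged_at"] - p["opened_at"]).total_seconds() / 3600 for p in merged]
    return {
        # Average wall-clock hours from opening a PR to merging it.
        "mean_hours_to_merge": mean(hours) if hours else None,
        # All CI minutes spent (including on unmerged PRs) divided by merges.
        "ci_minutes_per_merge": sum(p["ci_minutes"] for p in prs) / len(merged) if merged else None,
        # Average number of review rounds per PR.
        "mean_review_iterations": mean(p["iterations"] for p in prs) if prs else None,
    }
```

Counting the CI minutes of abandoned PRs in the cost per merge is a choice made in this sketch: it reflects what the team actually paid to land each change.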

What are the limitations and risks of automated validation?

Automated validation is powerful but not foolproof. Key challenges teams should monitor include:

- False positives and negatives: Overly aggressive checks can create noise; overly permissive checks miss issues.

- Overreliance: Blind trust in automated approvals can let risky code slip through if agents are misconfigured.

- Tooling complexity: Adding agents increases system complexity; maintainability of validation rules is vital.

- Security of the validation stack: Agents themselves must be secure—compromised agents could alter approvals or leak data.
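The false positive and false negative trade-off can be monitored with standard precision and recall figures. The sketch below assumes someone has triaged a sample of changes and recorded, for each, whether the check flagged it and whether it contained a real issue.

```python
def check_quality(outcomes):
    """Return (precision, recall) for a check from triaged outcomes.

    `outcomes` is a list of (flagged, real_issue) boolean pairs.
    """
    tp = sum(1 for flagged, real in outcomes if flagged and real)
    fp = sum(1 for flagged, real in outcomes if flagged and not real)
    fn = sum(1 for flagged, real in outcomes if not flagged and real)
    # Precision: share of flags that were real issues (low value means noise).
    precision = tp / (tp + fp) if tp + fp else None
    # Recall: share of real issues that were flagged (low value means misses).
    recall = tp / (tp + fn) if tp + fn else None
    return precision, recall
```

A check with low precision is the "overly aggressive" case described above, and one with low recall is the "overly permissive" case; tracking both per check shows which rules need tuning.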

How this fits into the agentic AI and developer tooling landscape

Agentic automation and “auto modes” for coding are reshaping developer workflows. Validation agents are the counterpart to generation-focused tools: where generative models produce code, validation agents verify it and orchestrate its lifecycle. For background on the broader shift toward agentic developer tooling, see our coverage in “Agentic Coding Automations: Streamlining Developer Workflows” and, on the evolution of auto-mode developer tools, “AI Auto Mode for Coding: Safer Autonomous Developer Tools.”

Vibe coding platforms that accelerate generation are a key driver of the need for validation; for context on that trend, read our piece “Lovable and the Rise of the Vibe Coding Platform in 2026.”

How vendors differentiate

Many vendors focus on generation—building models that write code. A smaller set is concentrating on validation: policy enforcement, test orchestration, end-to-end diagnostics, and enabling teams to create their own agents. Differentiators include:

- Policy expressiveness: How easily teams encode rules and enforcement levels.

- Observability: The depth of diagnostics and traceability from PR to production behavior.

- Scalability: Ability to run targeted pipelines to save compute and time.

- Extensibility: Support for custom agents that reflect company-specific constraints and workflows.

Deployment and cost considerations

Operationalizing validation agents often changes CI/CD cost dynamics. Intelligent validation reduces redundant CI runs, but adding sophisticated analysis may increase compute per check. A good practice is to tier checks so that lightweight, deterministic validations run first and heavier analyses run only when necessary.
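Tiering can be sketched as a runner that stops at the first failing tier. This example interprets "only when necessary" as "only when every cheaper tier has passed", which is an assumption; the tier and check names are hypothetical, and each check is modeled as a function returning True or False.

```python
def run_tiers(tiers):
    """Run tiers in order, cheapest first, and stop at the first tier with a failure.

    `tiers` is a list of (tier_name, checks) pairs, where `checks` is a
    list of (check_name, callable) pairs and each callable returns a bool.
    """
    executed = []
    for tier_name, checks in tiers:
        results = [(name, check()) for name, check in checks]
        executed.extend(name for name, _ in results)
        failed = [name for name, ok in results if not ok]
        if failed:
            # Skip all heavier tiers: the change already needs rework.
            return {"passed": False, "failed_tier": tier_name, "failed": failed, "executed": executed}
    return {"passed": True, "failed_tier": None, "failed": [], "executed": executed}
```

If a formatting check fails in the fast tier, the expensive end-to-end tier never starts, so its compute cost is paid only for changes that have a realistic chance of merging.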

Consider these deployment patterns:

- Edge validation: Run basic checks in local pre-commit hooks or developer machines before PR creation.

- Pipeline gating: Use agents to determine minimal required CI tasks and run them on demand.

- Post-merge monitoring: Combine validation with observability so agents can detect runtime regressions after deployments.

What success looks like

Teams that adopt automated code validation successfully can expect improvements along the following lines, typically measurable within weeks to months:

- Reduced mean time to merge (fewer review cycles).

- Lower CI cost per merged change (fewer full builds).

- Decrease in post-release defects linked to generated code.

- Better developer satisfaction due to faster, clearer feedback.

How do I get started? A practical checklist

If you’re evaluating automated validation for your engineering org, use this starter checklist:

- Map your current CI pain points and the most common sources of CI failures.

- Enable a pilot: pick one repo and deploy a lightweight validation agent that runs lint, unit tests, and dependency checks.

- Define blocking vs. advisory checks and communicate them clearly to the team.

- Collect metrics for 60–90 days and compare against baseline CI times and defect rates.

- Iterate on rules, add custom agents for domain-specific validation, and scale across the org.
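For the baseline comparison step in the checklist, a small helper like the one below may be enough. The metric names and figures in the usage note are made up for illustration.

```python
def percent_change(baseline, pilot):
    """Return the percent change per metric, from baseline to pilot.

    Metrics missing from the pilot, or with a zero baseline, are skipped.
    Negative values mean the metric went down during the pilot.
    """
    return {
        name: round((pilot[name] - baseline[name]) / baseline[name] * 100, 1)
        for name in baseline
        if name in pilot and baseline[name]
    }
```

Called with a baseline of 40 CI minutes per merge and a pilot figure of 30, it reports a change of -25.0 percent for that metric.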

Conclusion

Automated code validation is not a threat to developer craftsmanship—it is an enabler. By deploying validation agents that orchestrate CI, run targeted analyses, and escalate only the ambiguous cases to humans, organizations can unlock the productivity benefits of AI-assisted development without sacrificing safety or quality. As agentic developer tooling continues to evolve, validation will become an indispensable part of modern software delivery pipelines.

If you’re building with AI-assisted code generation, validation agents provide the confidence layer that turns rapid output into reliable releases.

Take action

Ready to reduce CI churn and tame AI-generated code in your pipeline? Start with a focused pilot: choose a high-change repository, define a small set of blocking validations, and measure the impact over 60 to 90 days. Want curated resources and implementation guides? Subscribe to Artificial Intel News for in-depth analysis, case studies, and step-by-step playbooks to roll out automated code validation across your engineering org.