Google Ad Safety 2025: How Gemini Stopped 8.3B Ads

Google’s 2025 ad-safety figures mark a turning point in how platforms police advertising at scale. The company reported blocking a record 8.3 billion ads last year while suspending far fewer advertiser accounts than past enforcement patterns might predict. That divergence — more creative-level blocks, fewer account suspensions — reflects an AI-first approach to detection and response. This article unpacks the data, explains why suspensions dropped, explores how Gemini and generative AI shaped enforcement, and outlines practical steps for advertisers and regulators.

What did Google report in 2025?

According to Google’s public summary of ad safety activity, the headline metrics included:

- 8.3 billion ads blocked globally in 2025.

- 602 million ads and 4 million advertiser accounts actioned over links to scams.

- Over 1.7 billion ads removed and 3.3 million advertiser accounts suspended in the U.S.

- In India, 483.7 million ads blocked — nearly double the prior year — while account suspensions fell to 1.7 million from 2.9 million.

These numbers show both scale and geographic variance. Common violations included ad network abuse, misrepresentation, trademark and copyright infringement, financial services violations, and sexual content. But the most important signal is the enforcement shift: many more individual creatives were stopped before reaching users, while account-level penalties declined.
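The India figures are easier to read as percentage changes. The short Python check below uses only the numbers quoted above. It shows that the drop in suspensions from 2.9 million to 1.7 million is roughly 41%. The prior-year count of blocked ads is not given here, so the script only derives a rough floor for it from the phrase "nearly double."

```python
def percent_change(old: float, new: float) -> float:
    """Return the percentage change from old to new (negative means a decline)."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100.0

# India advertiser-account suspensions, in millions (figures quoted above).
suspensions_prior, suspensions_2025 = 2.9, 1.7
change = percent_change(suspensions_prior, suspensions_2025)
print(f"India suspensions: {change:.1f}%")  # India suspensions: -41.4%

# "Nearly double" means the 2025 total is just under 2x the prior year,
# so the prior-year total must have been a little above half of 483.7M.
blocked_2025 = 483.7
print(f"Implied prior-year floor: {blocked_2025 / 2:.2f}M blocked ads")  # 241.85M
```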

Why did ad suspensions fall even as blocked ads surged?

Short answer: enforcement moved from blunt, account-level actions to granular, creative- and campaign-level interventions driven by automated detection. Google attributes the change to a layered, AI-driven enforcement strategy that focuses on stopping harmful content as early in the delivery pipeline as possible.

Key mechanics behind the decline in suspensions:

- Creative-level detection: Instead of suspending whole advertiser accounts, systems now block individual ads or creatives that break policy.

- Automated verification and defenses: Advertiser verification and other pre-flight checks prevent many bad actors from creating accounts in the first place.

- Reduced false positives: More precise detection means fewer legitimate accounts are suspended in error; the company reported an 80% year-over-year reduction in mistaken account suspensions.

The effect is twofold: users see fewer harmful or deceptive ads, while legitimate advertisers face less risk of losing access due to erroneous or overbroad enforcement.
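To make the distinction between creative-level and account-level enforcement concrete, here is a minimal Python sketch. It assumes a detector has already flagged individual creatives. The function name, the escalation thresholds, and the data shape are hypothetical illustrations, not Google's actual policy logic. The point is structural: blocking a creative is the default action, and suspending the account is an escalation that fires only when violations are both numerous and a large share of the account's ads.

```python
from dataclasses import dataclass, field

@dataclass
class EnforcementResult:
    blocked_creatives: list[str] = field(default_factory=list)
    suspend_account: bool = False

def enforce(creatives: dict[str, bool], escalation_share: float = 0.5,
            min_violations: int = 3) -> EnforcementResult:
    """Block flagged creatives one by one; suspend the account only when
    violations are both numerous and a large share of its creatives.

    creatives maps creative ID -> True if a detector flagged it.
    escalation_share and min_violations are hypothetical thresholds.
    """
    result = EnforcementResult()
    result.blocked_creatives = [cid for cid, flagged in creatives.items() if flagged]
    n_bad = len(result.blocked_creatives)
    share = n_bad / len(creatives) if creatives else 0.0
    result.suspend_account = n_bad >= min_violations and share >= escalation_share
    return result

# A mostly legitimate account with one bad creative: block the ad, keep the account.
print(enforce({"ad-1": False, "ad-2": True, "ad-3": False, "ad-4": False}))
# An account whose every creative is flagged: escalate to suspension.
print(enforce({f"ad-{i}": True for i in range(5)}))
```

Under these assumed thresholds, a legitimate advertiser with one bad creative loses that creative, not the account.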

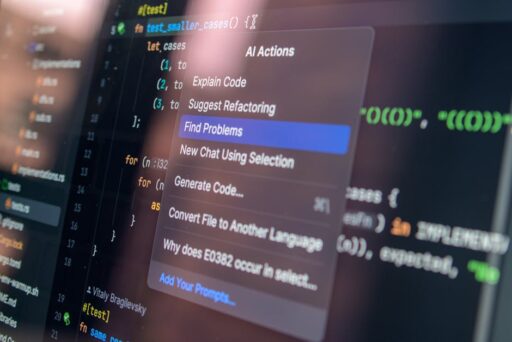

How did Gemini and generative AI change enforcement?

Google credits its family of Gemini models and broader AI infrastructure with enabling earlier and more precise ad blocks. These models analyze creative content, landing pages, and cross-campaign patterns to detect coordinated deception at scale. Generative AI, meanwhile, has let bad actors produce large volumes of superficially convincing creatives, and that flood of machine-made content is what makes large-scale automated detection necessary.

Practical ways Gemini-like systems improve enforcement:

- Pattern detection across thousands of creatives to identify campaign-level scams.

- Semantic analysis of ad copy and images to flag misrepresentation or prohibited content.

- Real-time classification that stops ads before impressions are served to users.

This AI-driven pipeline shifts the enforcement burden earlier in the ad lifecycle, catching violations at the creative or campaign stage instead of waiting for repeated abuse that triggers account-level action.
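Campaign-level pattern detection often starts with a simpler question: which creatives are near-copies of each other? The sketch below uses a common textbook technique. It compares word shingles with Jaccard similarity and then groups matches with union-find. It is offered as an illustration, not a description of how Gemini works. The 0.5 threshold and the sample ads are made up.

```python
import re
from itertools import combinations

def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of k-word shingles (overlapping word windows) in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}

def jaccard(a: set, b: set) -> float:
    """Jaccard similarity |A & B| / |A | B|; 0.0 when both sets are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def near_duplicate_groups(ads: dict[str, str], threshold: float = 0.5) -> list[set[str]]:
    """Group ad IDs whose copy is near-identical, merging every pair whose
    shingle similarity meets the (hypothetical) threshold via union-find."""
    parent = {ad_id: ad_id for ad_id in ads}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    sigs = {ad_id: shingles(text) for ad_id, text in ads.items()}
    for a, b in combinations(ads, 2):
        if jaccard(sigs[a], sigs[b]) >= threshold:
            parent[find(a)] = find(b)

    groups: dict[str, set[str]] = {}
    for ad_id in ads:
        groups.setdefault(find(ad_id), set()).add(ad_id)
    return [g for g in groups.values() if len(g) > 1]  # only real clusters

ads = {
    "a1": "Guaranteed 50% returns in 30 days. Invest now with Acme Crypto!",
    "a2": "Guaranteed 50% returns in 30 days. Invest now with Zeta Crypto!",
    "a3": "Fresh sourdough delivered to your door every Saturday morning.",
}
print(near_duplicate_groups(ads))  # one group containing a1 and a2
```

The pairwise comparison is quadratic in the number of ads. That is fine for auditing one advertiser's library but not for billions of creatives; at that scale, approximate methods such as MinHash with locality-sensitive hashing are the usual substitute.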

Why generative AI both increases risk and enables defense

Generative models have lowered the cost of producing deceptive ad content, allowing malicious actors to scale campaigns across regions and languages. Yet models from the same family, when adapted for detection, excel at spotting the stylistic fingerprints, repetitive phrasing, and artifact patterns that betray mass-produced scams. Systems trained on these signals can proactively block harmful creatives across networks.

For readers who want more on Gemini’s product expansion in different markets, including how it’s being used in India, see our coverage of Gemini Personal Intelligence Launches in India.

What does this enforcement shift mean for advertisers and publishers?

The transition to creative-level enforcement and AI-powered detection carries several implications for campaign managers, publishers, and platform policymakers:

- Faster creative review cycles: Ads are being evaluated automatically and earlier, so quality control must be frontloaded.

- Greater need for provenance: Advertiser verification and clear business identity reduce friction and risk of action.

- Campaign-level monitoring: Platforms are more likely to flag entire campaigns exhibiting coordinated patterns even if individual creatives appear marginal.

- New compliance workflows: Teams must embed policy checks in the creative production process to avoid reactive takedowns.

Advertisers should treat enforcement as part of campaign design rather than as an afterthought.

Actionable checklist for advertisers

- Enable and complete advertiser verification with platforms ahead of major campaigns.

- Run pre-launch creative scans for claims, trademarks, and financial language that commonly trigger enforcement.

- Use A/B testing and staged rollouts to detect automated flags early without risking full-campaign disruption.

- Monitor campaign signals for patterns consistent with generative-AI-produced creatives (repetition, boilerplate phrasing) and remediate quickly.

- Document provenance of assets and landing pages to speed appeals if an automated block occurs.
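A pre-launch scan for the risky language called out in this checklist can start as a handful of regular expressions run over ad copy before submission. The rule names, patterns, and trademark list below are hypothetical examples, not any platform's actual policy list. A match means "send to a human reviewer," not "this ad violates policy."

```python
import re

# Hypothetical, deliberately small rule set; a real team would maintain its own
# list aligned with each platform's published ad policies.
RISKY_PATTERNS = {
    "guaranteed_returns": re.compile(r"\bguarantee(?:d|s)?\b.*\breturns?\b", re.I),
    "unrealistic_claim": re.compile(r"\b(?:risk[- ]free|100% (?:safe|effective)|miracle)\b", re.I),
    # Financial terms are not violations by themselves; they route the ad to review.
    "financial_terms": re.compile(r"\b(?:crypto|forex|loan|invest(?:ment|ing)?)\b", re.I),
}

def scan_creative(text: str, protected_marks: set[str]) -> list[str]:
    """Return the names of rules a creative's copy trips, for human review.

    Trademark matching is a crude case-insensitive substring check.
    """
    hits = [name for name, pattern in RISKY_PATTERNS.items() if pattern.search(text)]
    lowered = text.lower()
    hits += [f"trademark:{mark}" for mark in sorted(protected_marks) if mark.lower() in lowered]
    return hits

print(scan_creative("Guaranteed returns on every crypto investment, risk-free!", set()))
# ['guaranteed_returns', 'unrealistic_claim', 'financial_terms']
print(scan_creative("Acme running shoes, now in new colors", {"Acme"}))
# ['trademark:Acme']
```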

How should regulators and platforms balance transparency and automation?

AI-driven enforcement improves speed and scale, but it raises questions about transparency, explainability, and recourse. Platforms using large models to block content need clear audit trails and robust appeals processes to maintain trust. Policy-as-code approaches that codify rules into deterministic checks and layered machine learning signals can help reconcile automation with accountability; for deeper discussion of policy-as-code models in moderation, see our piece on AI Content Moderation: Policy-as-Code for Real-Time Safety.
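Policy-as-code can be illustrated with rules stored as data. Each rule has a stable ID and a plain-language explanation; deterministic checks run first, and a model score is layered on top. Every block then carries an audit record that an appeal or a transparency report can cite. This is a minimal sketch: the rule IDs, metadata fields such as advertiser_verified, and the 0.9 score threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Rule:
    rule_id: str                    # stable ID an appeal or audit can cite
    explanation: str                # user-facing reason shown on a block
    check: Callable[[dict], bool]   # deterministic predicate over ad metadata

# Hypothetical rules; the metadata field names are illustrative only.
RULES = [
    Rule("VERIFY-001", "Advertiser has not completed verification.",
         lambda ad: not ad.get("advertiser_verified", False)),
    Rule("LP-002", "Landing page domain differs from the displayed URL.",
         lambda ad: ad.get("landing_domain") != ad.get("display_domain")),
]

def evaluate(ad: dict, model_score: float, score_threshold: float = 0.9) -> dict:
    """Apply deterministic rules first, then a model score as a layered signal,
    and return the decision together with every reason that produced it."""
    reasons = [(r.rule_id, r.explanation) for r in RULES if r.check(ad)]
    if model_score >= score_threshold:
        reasons.append(("ML-SCORE", f"Classifier score {model_score:.2f} >= {score_threshold:.2f}."))
    return {"ad_id": ad["id"], "decision": "block" if reasons else "allow", "reasons": reasons}

ok_ad = {"id": "ad-7", "advertiser_verified": True,
         "landing_domain": "example.com", "display_domain": "example.com"}
bad_ad = {"id": "ad-8", "advertiser_verified": True,
          "landing_domain": "example.net", "display_domain": "example.com"}
print(evaluate(ok_ad, model_score=0.12))   # allowed, no reasons
print(evaluate(bad_ad, model_score=0.95))  # blocked by LP-002 and ML-SCORE
```

Because each reason is tied to a rule ID rather than buried in a model's weights, the same record can drive the explanation shown to the advertiser and the counts published in a transparency report.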

Key policy considerations:

- Explainability: Platforms should provide meaningful explanations when creatives or accounts are blocked.

- Human-in-the-loop review: Escalation paths for contested enforcement decisions must be fast and accessible.

- Measurement and reporting: Regular, audit-quality transparency reports help independent researchers assess both efficacy and bias.

Will AI-driven enforcement keep pace with bad actors?

Short answer: it’s an ongoing arms race. As generative tools become cheaper and more capable, bad actors will refine evasion techniques. But defensive systems will also improve by leveraging cross-campaign signals, differential fingerprints, and adaptive models. We’ve already seen surges in bot-driven traffic and automated content production; for a deeper look at bot trends and web traffic risk, consult our analysis of AI Bot Traffic Surge.

Expect enforcement metrics to fluctuate as platforms roll out new safeguards and as adversaries respond. The objective for defenders is to make harmful campaigns uneconomical by increasing the speed and certainty of detection while preserving legitimate advertising flows.
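The goal of making harmful campaigns uneconomical can be expressed with a toy model of an attacker's expected profit per scam creative. Every parameter and number here is a hypothetical assumption for illustration. In this model a creative earns nothing if it is blocked before serving; otherwise it earns revenue until it is taken down.

```python
def expected_profit(cost_per_creative: float, revenue_per_impression: float,
                    p_blocked_before_serving: float,
                    impressions_before_takedown: float) -> float:
    """Toy model: expected profit of one scam creative for the attacker.

    Certainty of detection raises p_blocked_before_serving; speed of detection
    lowers impressions_before_takedown. All inputs are hypothetical.
    """
    p_served = 1.0 - p_blocked_before_serving
    return p_served * impressions_before_takedown * revenue_per_impression - cost_per_creative

# Slow, uncertain detection: half of creatives get served and run a long time.
slow = expected_profit(1.0, 0.01, p_blocked_before_serving=0.5, impressions_before_takedown=10_000)
# Fast, near-certain detection: few creatives get served and they die quickly.
fast = expected_profit(1.0, 0.01, p_blocked_before_serving=0.99, impressions_before_takedown=50)
print(f"slow detection: {slow:.2f}")  # positive: scams pay
print(f"fast detection: {fast:.3f}")  # negative: scams lose money
```

Generative AI pushes cost_per_creative down, which is why, in this model, defenders must shrink both the served fraction and the time to takedown until expected revenue falls below that lower cost.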

Implications for users and publishers

Users benefit when scams and deceptive ads are stopped before impressions occur. Publishers benefit from cleaner ad inventories and fewer risky partnerships. But overly aggressive filtering can harm small advertisers and niche publishers if detection models lack nuance. Striking the right balance requires continuous measurement, transparent appeals, and investment in human review capacity.
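The balance between catching scams and sparing small advertisers shows up as a threshold choice. Here is a minimal sketch, assuming a human-reviewed audit sample of (model score, actually harmful) pairs; the sample data is invented. Raising the blocking threshold lowers the share of legitimate ads blocked but lets more harmful ads through.

```python
def threshold_tradeoff(scored: list[tuple[float, bool]], threshold: float) -> dict[str, float]:
    """Measure outcomes when every ad scoring >= threshold is blocked.

    scored holds (model_score, is_actually_harmful) pairs, for example from a
    human-reviewed audit sample.
    """
    blocked_legit = sum(1 for s, harmful in scored if s >= threshold and not harmful)
    missed_harmful = sum(1 for s, harmful in scored if s < threshold and harmful)
    n_legit = sum(1 for _, harmful in scored if not harmful)
    n_harmful = len(scored) - n_legit
    return {
        "false_positive_rate": blocked_legit / n_legit if n_legit else 0.0,
        "miss_rate": missed_harmful / n_harmful if n_harmful else 0.0,
    }

# Invented audit sample: three harmful ads and three legitimate ones.
sample = [(0.95, True), (0.85, True), (0.60, True),
          (0.70, False), (0.40, False), (0.10, False)]
for t in (0.5, 0.8):
    print(t, threshold_tradeoff(sample, t))
```

At 0.5 in this sample, one of three legitimate ads is blocked and no harmful ad slips through; at 0.8 the reverse happens. Tracking both rates over time, alongside appeal outcomes, is one concrete form the "continuous measurement" above can take.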

What should industry stakeholders do next?

Platform teams, advertisers, and regulators should collaborate on a few priorities:

- Standardize advertiser verification and identity frameworks across major platforms.

- Invest in shared datasets and red-team exercises so detection models can learn from real-world adversarial techniques.

- Publish clear enforcement criteria and timely transparency reports to reduce speculation and increase trust.

- Adopt policy-as-code practices to make automated rules auditable and consistent.

Final takeaway

Google’s 2025 ad-safety numbers show that AI-first enforcement can scale detection to billions of creatives while reducing blunt account suspensions. That outcome improves protection for users and reduces collateral damage to legitimate advertisers — but it also raises fresh demands for transparency, robust appeals, and cross-industry collaboration. Advertisers should move quickly to harden creative and campaign workflows; regulators and platforms must invest in explainability and accountability so automation strengthens, rather than undermines, trust.

Ready to fortify your ad strategy?

If you run or manage digital advertising, start by auditing creative provenance, completing platform verifications, and building pre-launch policy checks into your workflow. Want help translating these recommendations into an operational plan? Contact our editorial team for an in-depth brief on adapting campaigns to the new AI-driven enforcement era.

Learn more about model-powered moderation, bot trends, and market implications from our related coverage listed above.