Rebellions Raises $400M to Accelerate AI Inference Chips and Infrastructure

South Korean fabless AI chip startup Rebellions has closed a fresh $400 million funding round as it prepares to scale production, expand internationally, and commercialize new infrastructure products designed specifically for inference. The round, led by major institutional investors, brings the company’s total capital raised to roughly $850 million and supports a roadmap focused on inference-optimized accelerators, integrated rack units, and production-ready compute pods.

Why this funding matters for AI inference

As large language models and other generative AI systems move from research demos into real-world applications, the infrastructure for inference (the process of running trained models to produce predictions or responses) has become a critical bottleneck. Inference workloads prioritize latency, power efficiency, and cost-per-query rather than the raw training throughput that dominated earlier hardware design. Rebellions’ funding targets that bottleneck directly: it is meant to deliver silicon and systems that reduce operating costs while enabling models to run at scale.

Fabless model, focused design

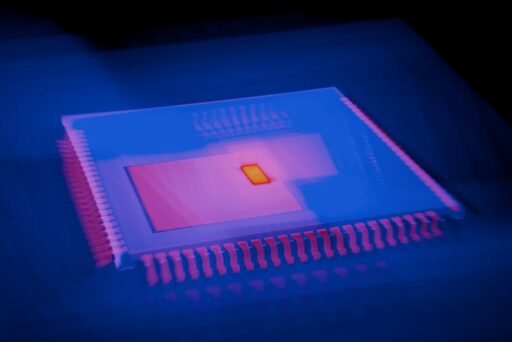

Founded in 2020, Rebellions follows a fabless semiconductor model: it designs custom accelerators and outsources fabrication to foundries. That approach allows the startup to iterate quickly on architecture and prioritize inference-specific design tradeoffs—such as quantization support, low-power tensor engines, and memory hierarchies optimized for KV cache and attention layers—without the capital intensity of owning a fabrication facility.
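Simple arithmetic shows why memory hierarchies tuned for the KV cache, together with quantization support, are worth designing for. The Python sketch below estimates the key/value cache footprint of a transformer decoder at several element widths. The model dimensions, context length, and batch size are hypothetical values chosen for illustration; they do not describe any Rebellions chip or customer workload.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical decoder dimensions, for illustration only."""
    num_layers: int
    num_kv_heads: int  # fewer than query heads under grouped-query attention
    head_dim: int


def kv_cache_bytes(shape: ModelShape, seq_len: int, batch: int, bytes_per_elem: float) -> int:
    """Bytes needed to cache keys and values for every layer, token, and request.

    The leading factor of 2 accounts for storing both the K and the V tensor.
    """
    elems = 2 * shape.num_layers * shape.num_kv_heads * shape.head_dim * seq_len * batch
    return int(elems * bytes_per_elem)


if __name__ == "__main__":
    # Assumed shape loosely resembling a mid-size decoder; not a real product spec.
    shape = ModelShape(num_layers=32, num_kv_heads=8, head_dim=128)
    for label, width in [("fp16", 2), ("int8", 1), ("int4", 0.5)]:
        gib = kv_cache_bytes(shape, seq_len=8192, batch=16, bytes_per_elem=width) / 2**30
        print(f"{label}: {gib:.1f} GiB")
```

In this made-up configuration the cache needs 16 GiB at fp16, 8 GiB at int8, and 4 GiB at int4. Each halving of element width halves the footprint. That is why low-precision KV storage can free memory for longer contexts or larger batches, provided the quantized model stays accurate enough for the workload.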

What makes Rebellions’ chips different?

Rebellions positions its products around three strengths:

- Inference optimization: chips tuned for low-latency, high-efficiency model execution rather than training throughput.

- Systems integration: hardware delivered as production-ready units that simplify deployment in datacenters and edge facilities.

- Global go-to-market: a plan to work with cloud providers, telecom operators, and regional hyperscalers to accelerate adoption.

The startup’s latest product introductions—RebelPOD and RebelRack—reflect that strategy. RebelPOD is designed as a production-ready unit of inference compute that can be deployed as a standalone appliance or inside a datacenter cage. RebelRack integrates multiple racks into a scalable cluster, offering a turnkey path to large-scale inference deployment with consistent management and resource orchestration.

How Rebellions plans to scale globally

Rebellions has signaled an aggressive international expansion. The company established entities in multiple regions and is building an ecosystem of technology partners to reach cloud providers, government agencies, telecom operators, and new-generation cloud providers. This multi-region approach aims to reduce latency for end users, meet local data sovereignty requirements, and facilitate partnerships with regional integrators and telco edge sites.

Marshall Choy, Rebellions’ Chief Business Officer, is leading global expansion and ecosystem development. The effort focuses on aligning hardware design with the operational needs of enterprise customers and service providers, including support for neoclouds, telecom edge deployments, and government-grade procurement.

Market context: Why inference-focused startups are emerging

Historically, a handful of vendors dominated AI accelerator design, emphasizing training-scale throughput. But as production AI has matured, inference requirements—lower power envelopes, predictable latency, and cost-effective utilization—have grown in importance. That shift has opened an opportunity for companies building inference-tailored silicon and systems to challenge incumbents by offering better economics for deployed models.

Industry players across hyperscalers and startups are exploring in-house and partner-built accelerators to balance performance, cost, and control. Rebellions’ product and go-to-market decisions are a direct response to these trends, offering customers an alternative optimized specifically for the economics of running models in production.

Economic and operational considerations

Sunghyun Park, co-founder and CEO of Rebellions, framed the shift succinctly: AI success is now measured by the ability to operate models at scale within power constraints while delivering clear economic returns. That reality pushes attention toward inference infrastructure and the software that ensures hardware is usable, efficient, and manageable across diverse environments.

Who will buy RebelPOD and RebelRack?

The target customers for Rebellions’ systems include:

- Cloud providers and neoclouds seeking specialized inference capacity.

- Telecom operators deploying AI at the network edge for low-latency services.

- Enterprises and government agencies requiring private inference clusters and data residency.

- AI service providers offering real-time conversational AI, recommendation engines, and personalized inference at scale.

By offering both a modular pod and an integrated rack solution, Rebellions can serve small- to large-scale deployments and provide a clear upgrade path as customers’ inference needs grow.

How will this affect AI infrastructure economics?

Inference-optimized chips and systems can materially lower the cost-per-query and power consumption for deployed models. That reduction directly impacts the viability of many commercial AI services, where inference is the recurring cost center. Rebellions’ solutions are pitched to reduce operational expenses and enable denser, more energy-efficient inference clusters—key drivers for customers wrestling with rising AI infrastructure spending.
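Once throughput, power draw, and hourly hardware cost are known, cost-per-query and energy-per-token are straightforward to compute. The sketch below is a simplified model built on stated assumptions. Every input is a hypothetical placeholder, not a Rebellions or competitor figure. The model also ignores facility overhead (cooling, PUE), idle capacity, and networking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentAssumptions:
    """Hypothetical inputs for one inference system; not measured or vendor-published values."""
    tokens_per_second: float       # sustained output throughput across the whole system
    power_watts: float             # average power draw while serving
    electricity_usd_per_kwh: float
    hardware_usd_per_hour: float   # amortized purchase cost or rental price per hour
    tokens_per_query: float        # average tokens generated per request


def energy_joules_per_token(a: DeploymentAssumptions) -> float:
    # Watts are joules per second, so W / (tokens/s) gives joules per token.
    return a.power_watts / a.tokens_per_second


def cost_per_query_usd(a: DeploymentAssumptions) -> float:
    queries_per_hour = a.tokens_per_second * 3600 / a.tokens_per_query
    energy_usd_per_hour = (a.power_watts / 1000) * a.electricity_usd_per_kwh
    return (a.hardware_usd_per_hour + energy_usd_per_hour) / queries_per_hour


if __name__ == "__main__":
    example = DeploymentAssumptions(
        tokens_per_second=5000,
        power_watts=4000,
        electricity_usd_per_kwh=0.10,
        hardware_usd_per_hour=10.0,
        tokens_per_query=500,
    )
    print(f"energy per token: {energy_joules_per_token(example):.2f} J")
    print(f"cost per 1,000 queries: ${cost_per_query_usd(example) * 1000:.4f}")
```

These placeholder inputs give 0.80 J per token and about $0.2889 per 1,000 queries. Amortized hardware dominates the hourly cost here, so a throughput gain lowers cost-per-query more than a power cut of the same percentage would. Other inputs can flip that balance, which is why measured benchmarks matter.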

For context on broader infrastructure trends and cost-saving strategies, see our coverage of Autonomous AI Infrastructure: Cut Cloud Costs by 80% and our analysis of the multi-silicon inference cloud. Those pieces explore how software and hardware combine to unlock major savings in cloud and edge deployments.

What to watch next

Key signals to monitor as Rebellions moves forward:

- Performance-per-watt benchmarks and independent inference benchmarks against incumbent accelerators.

- Customer pilot announcements with cloud, telecom, or enterprise partners demonstrating real-world economics.

- Software stack maturity—runtime, model support (quantization, mixed precision), orchestration, and monitoring tools.

- Supply chain and manufacturing cadence tied to foundry partnerships and lead times.

- Pricing and commercial models that clarify total cost of ownership for enterprise buyers.

- Regulatory and procurement wins in regions that require localized infrastructure.

Can Rebellions challenge incumbent vendors?

Challenging established vendors in AI silicon requires a compelling combination of performance, software integration, and commercial strategy. Rebellions’ strengths are its focused inference architecture, packaged systems like RebelPOD and RebelRack, and a capital base that supports productization and international expansion. Success will depend on execution: proving workload-specific advantages, simplifying customer integration, and scaling manufacturing reliably.

Customers increasingly demand end-to-end solutions: hardware that arrives configured, software that integrates with existing orchestration layers, and predictable operating costs. Rebellions’ product framing suggests it understands these buyer needs and is investing accordingly.

Implications for enterprises and service providers

Enterprises evaluating inference infrastructure should consider the following when comparing vendors:

- Workload fit: Does the silicon prioritize the ops and precision modes your models require?

- Deployment model: Can the solution be deployed in your existing datacenter, colocation, or edge facility with minimal friction?

- Software ecosystem: Are runtime, orchestration, and monitoring tools mature and compatible with your stack?

- Service and support: Is there a clear roadmap for maintenance, security updates, and lifecycle management?
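A weighted scoring matrix is one way to turn this checklist into a side-by-side comparison. The sketch below is illustrative only. The weights, the 1-to-5 scale, and the placeholder vendor names and scores are all assumptions. Real scores should come from pilots, benchmarks, and reference checks.

```python
from typing import Dict

# Criteria mirror the checklist above; these weights are hypothetical and must sum to 1.
WEIGHTS: Dict[str, float] = {
    "workload_fit": 0.4,
    "deployment_model": 0.2,
    "software_ecosystem": 0.3,
    "service_and_support": 0.1,
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores (for example on a 1-5 scale) into one weighted score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


if __name__ == "__main__":
    # Placeholder vendors and scores for illustration only.
    candidates = {
        "vendor_a": {"workload_fit": 4, "deployment_model": 3,
                     "software_ecosystem": 5, "service_and_support": 4},
        "vendor_b": {"workload_fit": 5, "deployment_model": 4,
                     "software_ecosystem": 3, "service_and_support": 3},
    }
    ranked = sorted(candidates, key=lambda v: weighted_score(candidates[v]), reverse=True)
    for name in ranked:
        print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

Each buyer's priorities enter through the weights, while the arithmetic stays the same. With these placeholders, the vendor with the stronger software ecosystem edges ahead, even though the other scores higher on workload fit.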

For enterprises weighing infrastructure choices and budget trade-offs, our coverage of broader AI infrastructure spending trends offers additional perspective: AI Infrastructure Spending: How the Cloud Race Is Scaling.

Final analysis

Rebellions’ $400 million raise and the introduction of RebelPOD and RebelRack mark a significant step for an inference-focused semiconductor startup. With nearly $850 million in total funding and a valuation in the growth-stage range, the company is well-positioned to push into global markets and compete on the economics of production inference.

Achieving durable market share will hinge on demonstrable efficiency gains, a robust software and management stack, and the ability to deliver consistent supply and support. If Rebellions can prove lower total cost of ownership for common inference workloads while easing operational complexity, the company could become a prominent alternative for customers prioritizing inference economics over raw training throughput.

Key takeaways

- Rebellions raised $400M to scale inference chip development and global expansion.

- New products RebelPOD and RebelRack aim to simplify production deployment and scale inference clusters.

- The market shift toward inference economics creates opportunities for silicon and systems optimized for low-latency, power-constrained execution.

- Execution on performance, software, and manufacturing will determine how successfully Rebellions competes with incumbents and other new entrants.

Want deeper analysis and updates?

Follow our coverage for detailed benchmarks, customer rollouts, and comparative analysis of inference accelerators. If you’re evaluating infrastructure options, sign up for updates and case studies that highlight total cost of ownership, deployment playbooks, and integration patterns for inference at scale.

Subscribe to Artificial Intel News for first-look analysis on inference platforms, product benchmarks, and strategic guidance. Explore how Rebellions’ approach could impact your AI strategy and receive practical insights for deploying inference at scale.