AI Code Verification: Next Phase of Software Development

As AI tools produce billions of lines of code each month, organizations face a new, urgent challenge: making sure that generated code actually works, is safe, and aligns with business standards. Speed of generation no longer equates to trust. AI code verification — a discipline focused on testing, reasoning, and governing AI-generated changes across systems — is emerging as the essential layer for teams adopting automated coding assistants.

Why is verification becoming the new bottleneck?

Development teams are embracing AI to accelerate feature delivery, reduce repetitive work, and boost productivity. Yet many leaders now report a paradox: faster output often leads to more brittle integrations, hidden logic errors, and governance gaps. The core reasons verification matters today include:

- Context sensitivity: Generated code can be syntactically correct but inconsistent with project conventions, historical decisions, or architectural constraints.

- Cross-file and system-level bugs: Small edits in one file can produce cascading failures elsewhere; catching these requires whole-system analysis, not just line-by-line checks.

- Security and compliance risk: Automated code can introduce vulnerabilities or violate regulatory requirements if not reviewed with governance in mind.

- Developer trust and adoption: Many developers remain wary of committing AI-generated changes without rigorous verification, creating friction between speed and safety.
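
The cross-file point above can be made concrete with a small sketch. Assuming a verification tool already has a map of which modules import which (the module names and the `impacted_modules` helper here are hypothetical, not any specific product's API), it could walk the import graph in reverse to find everything a change might affect:

```python
from collections import defaultdict, deque


def impacted_modules(imports: dict[str, set[str]], changed: set[str]) -> set[str]:
    """Return every module that directly or transitively imports a changed module."""
    # Invert the import graph: module -> modules that import it.
    dependents: dict[str, set[str]] = defaultdict(set)
    for module, deps in imports.items():
        for dep in deps:
            dependents[dep].add(module)

    # Breadth-first walk outward from the changed modules.
    impacted: set[str] = set()
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for parent in dependents[current]:
            if parent not in impacted and parent not in changed:
                impacted.add(parent)
                queue.append(parent)
    return impacted


# Hypothetical import map: each key imports the modules in its value set.
IMPORTS = {
    "billing.api": {"billing.tax", "core.money"},
    "billing.tax": {"core.money"},
    "reports.monthly": {"billing.api"},
    "core.money": set(),
}

print(sorted(impacted_modules(IMPORTS, {"core.money"})))
```

A one-line edit to `core.money` touches three other modules in this toy graph, which is why a check that looks only at the edited file would miss the downstream risk.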

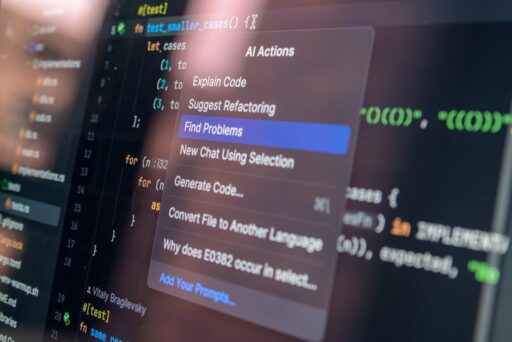

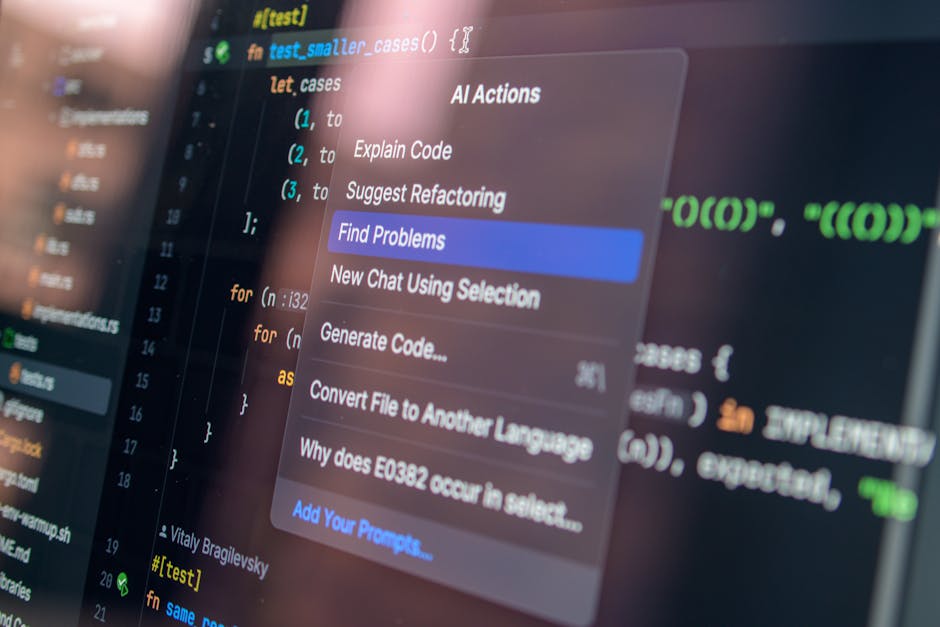

What is AI code verification and how does it work?

AI code verification combines automated reasoning, targeted testing, static analysis, and organization-specific policy enforcement to validate changes before they reach production. Unlike traditional linters or unit test suites, verification platforms are built to:

- Understand the system-level impact of changes across repositories and services.

- Ingest organizational standards, historical decisions, and risk tolerance to shape judgments about quality.

- Automate verification workflows that mirror human code review but scale to high-velocity AI-driven outputs.

Key components typically include pre-commit verification agents, multi-file reasoning engines, policy-as-code enforcement, and integrations with CI/CD pipelines to block risky changes automatically.
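
As a rough illustration of policy-as-code enforcement, the sketch below expresses policies as plain data and evaluates them against the lines a change adds. The `Policy` fields, the rule names, and the file paths are all assumptions made for this example; real platforms will use their own schemas and far richer matching than substring checks.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Policy:
    name: str
    path_glob: str   # which files the policy applies to
    forbidden: str   # substring that must not appear in added lines
    blocking: bool   # True blocks the merge, False only warns


@dataclass(frozen=True)
class Violation:
    policy: str
    path: str
    blocking: bool


def evaluate(policies: list[Policy], added_lines: dict[str, list[str]]) -> list[Violation]:
    """Check each changed file's added lines against every applicable policy."""
    violations = []
    for path, lines in added_lines.items():
        for policy in policies:
            applies = fnmatchcase(path, policy.path_glob)
            if applies and any(policy.forbidden in line for line in lines):
                violations.append(Violation(policy.name, path, policy.blocking))
    return violations


def should_block(violations: list[Violation]) -> bool:
    """A merge is blocked if at least one violation comes from a blocking policy."""
    return any(v.blocking for v in violations)


# Hypothetical policies and a hypothetical change set.
POLICIES = [
    Policy("no-disabled-tls", "services/*", "verify=False", blocking=True),
    Policy("no-print-debugging", "*.py", "print(", blocking=False),
]
CHANGE = {
    "services/payments/client.py": ["resp = requests.get(url, verify=False)"],
    "tools/report.py": ["print(total)"],
}

violations = evaluate(POLICIES, CHANGE)
print([v.policy for v in violations], "block:", should_block(violations))
```

Keeping the rules as data rather than code means they can be reviewed, versioned, and tightened like any other artifact in the repository.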

How is this different from ordinary code review?

Manual code review remains invaluable for architecture discussions and mentorship. AI code verification augments it by automating the routine, repetitive, and systemic checks that human reviewers tend to miss as velocity increases. Verification platforms prioritize system-level correctness, reproducible validation, and organizational context over purely syntactic suggestions.

Why aren’t large language models (LLMs) enough?

LLMs are powerful at generating code and suggesting fixes, but they were not designed to act as a source of truth for an organization’s past decisions, proprietary constraints, or nuanced risk appetite. Consider:

- Quality is subjective and shaped by internal standards and legacy trade-offs.

- LLMs do not have built-in memory of an organization’s tribal knowledge or design rationales.

- Verification requires deterministic checks, reproducible tests, and a way to encode policies — tasks that need dedicated systems and tooling.

For these reasons, modern verification platforms combine model-driven reasoning with rule-based analysis, historical telemetry, and human-in-the-loop feedback.
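
To show what a deterministic, reproducible check looks like next to model-driven reasoning, here is a minimal sketch that uses Python's standard `ast` module to flag calls to `eval` and `exec`. The banned list and the `find_banned_calls` name are illustrative assumptions; the point is only that the same input always produces the same findings.

```python
import ast

# Illustrative rule set: built-ins an organization might ban in generated code.
BANNED_CALLS = {"eval", "exec"}


def find_banned_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, function name) for each direct call to a banned built-in."""
    tree = ast.parse(source)
    findings = []
    for node in ast.walk(tree):
        is_named_call = isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
        if is_named_call and node.func.id in BANNED_CALLS:
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


SAMPLE = (
    "# eval is only mentioned in this comment\n"
    "result = eval(user_input)\n"
    "print(result)\n"
)

print(find_banned_calls(SAMPLE))
```

Because the check works on the syntax tree rather than on raw text, the comment on the first line is ignored and only the real call on line 2 is reported.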

How do next-generation verification platforms differ?

Leading verification solutions are designed around three practical goals: reduce developer noise, scale system-level reasoning, and embed governance directly into the developer workflow. Typical differentiators include:

- Multi-agent review: Multiple specialized agents simulate reviewers that focus on logic, security, performance, and policy.

- Organizational learning: Tools that learn a team’s definition of quality by analyzing past commits, accepted reviews, and production incidents.

- Risk-aware checks: Prioritization that surfaces critical cross-file bugs and logic faults rather than low-impact stylistic issues.

- CI/CD integration: Verification gates that can automatically block merges, raise tickets, or run targeted end-to-end tests.

These capabilities help teams move from reactive debugging to proactive assurance.
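
One way to picture multi-agent review combined with risk-aware prioritization is the sketch below. Each "agent" is reduced to a plain function that inspects a diff, which is a deliberate simplification; the agent names, severity scale, and string checks are assumptions for illustration, not a description of how any particular platform works.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed severity scale; higher numbers are more urgent.
SEVERITY = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class Finding:
    agent: str
    severity: str
    message: str


def security_agent(diff: str) -> list[Finding]:
    if "shell=True" in diff:
        return [Finding("security", "critical", "subprocess call with shell=True")]
    return []


def performance_agent(diff: str) -> list[Finding]:
    if "time.sleep(" in diff:
        return [Finding("performance", "medium", "blocking sleep in request path")]
    return []


def style_agent(diff: str) -> list[Finding]:
    if "\t" in diff:
        return [Finding("style", "low", "tab used for indentation")]
    return []


Agent = Callable[[str], list[Finding]]


def review(diff: str, agents: list[Agent], min_severity: str = "medium") -> list[Finding]:
    """Run every agent, drop findings below the threshold, most severe first."""
    findings = [f for agent in agents for f in agent(diff)]
    kept = [f for f in findings if SEVERITY[f.severity] >= SEVERITY[min_severity]]
    return sorted(kept, key=lambda f: SEVERITY[f.severity], reverse=True)


DIFF = "\tsubprocess.run(cmd, shell=True)\n\ttime.sleep(5)\n"
for finding in review(DIFF, [style_agent, performance_agent, security_agent]):
    print(finding.severity, finding.agent, finding.message)
```

The threshold is what keeps developer noise down: the style finding is still computed, but it never reaches the reviewer unless `min_severity` is lowered.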

Who’s adopting verification now?

Enterprises with large, complex codebases and strict reliability requirements are early adopters. Modern verification platforms are already in use at hardware and semiconductor companies, major retailers, open-source and cloud infrastructure firms, and fast-growing SaaS and enterprise software vendors that must balance velocity with safety.

For teams exploring how agentic workflows reshape developer operations, our coverage of Agentic Coding Automations offers practical context. Readers interested in new tools for developer review should also see AI Code Review for Developers, and teams experimenting with safer autonomous coding modes will find insights in AI Auto Mode for Coding.

How can teams integrate verification into existing workflows?

Adopting verification should be incremental and aligned with developer habits. Here’s a practical rollout plan:

- Start with high-risk areas: Identify services handling sensitive data or critical workflows and enable verification gates there first.

- Integrate with pull requests: Run verification agents on PRs to provide actionable feedback before merges.

- Automate tests and policy checks: Convert regulatory and security requirements into policy-as-code to enforce automatically.

- Measure and iterate: Track false positives, developer friction, and time-to-merge to fine-tune thresholds and agent behavior.

- Scale gradually: Expand coverage as confidence grows, using organizational learning to reduce noise over time.

These steps help teams integrate verification without stalling delivery velocity.
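
The incremental rollout above could be modeled as a per-service mode setting that a pre-merge gate consults. This is a minimal sketch: the service names, the `Mode` values, and the `gate_decision` helper are hypothetical, and a real pipeline would read this configuration from its own settings store.

```python
from enum import Enum


class Mode(Enum):
    OFF = "off"              # verification not yet enabled for this service
    ADVISORY = "advisory"    # findings are reported but never block a merge
    ENFORCING = "enforcing"  # blocking findings stop the merge


# Hypothetical rollout state: high-risk services are enforced first.
ROLLOUT = {
    "payments": Mode.ENFORCING,
    "search": Mode.ADVISORY,
}


def gate_decision(service: str, blocking_findings: int) -> str:
    """Return 'skip', 'pass', 'warn', or 'block' for a pull request."""
    mode = ROLLOUT.get(service, Mode.OFF)
    if mode is Mode.OFF:
        return "skip"
    if blocking_findings == 0:
        return "pass"
    return "block" if mode is Mode.ENFORCING else "warn"


for service in ("payments", "search", "internal-wiki"):
    print(service, gate_decision(service, blocking_findings=2))
```

Expanding coverage then becomes a small, reviewable change: move a service from `ADVISORY` to `ENFORCING` once its false-positive rate is acceptable.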

Tools and integrations to prioritize

- CI/CD pipelines (pre-merge gates, test runners)

- Issue trackers (auto-filing of verified failures)

- Secrets and policy managers (to enforce compliance)

- Observability and telemetry (linking verification outcomes to incidents)

What metrics should engineering leaders track?

Quantifying the impact of verification is essential for continued adoption. Useful metrics include:

- Defect escape rate: Bugs found in production per release, compared before and after verification adoption.

- False positive rate: Percentage of verification alerts that developers mark as irrelevant.

- Review time delta: Change in average pull request review time with verification enabled.

- Policy coverage: Percentage of repositories with automated governance checks.

- Developer trust score: Qualitative feedback on whether teams feel safer adopting AI-generated code.

Tracking these indicators helps balance safety, speed, and developer experience.
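
The quantitative metrics above reduce to simple ratios and differences. The sketch below shows one plausible way to compute them; the function names and sample figures are made up for illustration, and each team will need to decide how it counts alerts, releases, and review hours.

```python
from statistics import mean


def defect_escape_rate(production_bugs: int, releases: int) -> float:
    """Average number of production bugs per release."""
    return production_bugs / releases if releases else 0.0


def false_positive_rate(dismissed_alerts: int, total_alerts: int) -> float:
    """Share of verification alerts that developers marked as irrelevant."""
    return dismissed_alerts / total_alerts if total_alerts else 0.0


def review_time_delta(hours_before: list[float], hours_after: list[float]) -> float:
    """Change in mean PR review time; a negative value means reviews got faster."""
    return mean(hours_after) - mean(hours_before)


def policy_coverage(repos_with_checks: int, total_repos: int) -> float:
    """Share of repositories that run automated governance checks."""
    return repos_with_checks / total_repos if total_repos else 0.0


# Made-up sample figures for one quarter.
print("escape rate:", defect_escape_rate(production_bugs=6, releases=12))
print("false positives:", false_positive_rate(dismissed_alerts=12, total_alerts=80))
print("review delta (h):", review_time_delta([10, 14], [6, 8]))
print("policy coverage:", policy_coverage(repos_with_checks=30, total_repos=40))
```

Developer trust is left out on purpose: it comes from surveys and interviews rather than from pipeline data.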

What organizational changes support verification success?

Verification is as much a cultural shift as a technical one. To succeed, organizations should:

- Codify quality standards and treat them as living artifacts.

- Encourage cross-functional ownership between SRE, security, and product engineering.

- Provide clear feedback loops so developer input refines verification policies.

- Invest in training so teams understand verification signals and how to respond.

What are the common pitfalls, and how can teams avoid them?

Adopting verification can falter if teams rush or treat it as a checkbox. Common pitfalls include:

- Overzealous blocking: Excessive strictness creates developer frustration. Start with advisory mode before enforcement.

- Poorly tuned agents: High false-positive rates reduce trust. Use organizational learning to refine agents.

- Siloed ownership: Verification should not live exclusively with security — make it a cross-team initiative.

Addressing these issues early helps verification become a productivity multiplier rather than a bottleneck.

How will verification evolve over the next 2–3 years?

Expect verification to move from advisory tooling to an integral control plane for software delivery. Future trends include:

- Tighter integration of verification signals into incident management and observability.

- Policy marketplaces and standard libraries for industry-specific compliance checks.

- Smarter organizational learning that reduces noise and adapts to evolving codebases.

- Stateful verification systems that maintain institutional context across changes.

These advancements will make AI-driven development both faster and more trustworthy.

Conclusion — Why teams should prioritize AI code verification now

AI has fundamentally changed how code is produced. To realize the productivity gains without amplifying risk, teams must pair generation with robust verification. Verification platforms bring system-level reasoning, policy enforcement, and organizational memory to the developer workflow, enabling enterprises to scale AI-assisted development with confidence.

Ready to reduce production defects, tighten governance, and accelerate safe delivery? Start by piloting verification on a high-risk service, integrate it with your CI/CD pipeline, and use metrics to iterate quickly. For practical guidance on agentic workflows and developer automation, explore our in-depth pieces on Agentic Coding Automations and AI Auto Mode for Coding.

If your team is experimenting with AI-generated code, run a verification pilot this quarter: measure defect escapes and developer trust, refine policies from real data, and share the results across engineering to turn AI speed into lasting software reliability.