Dreamina Seedance 2.0: New AI Video Generation Model

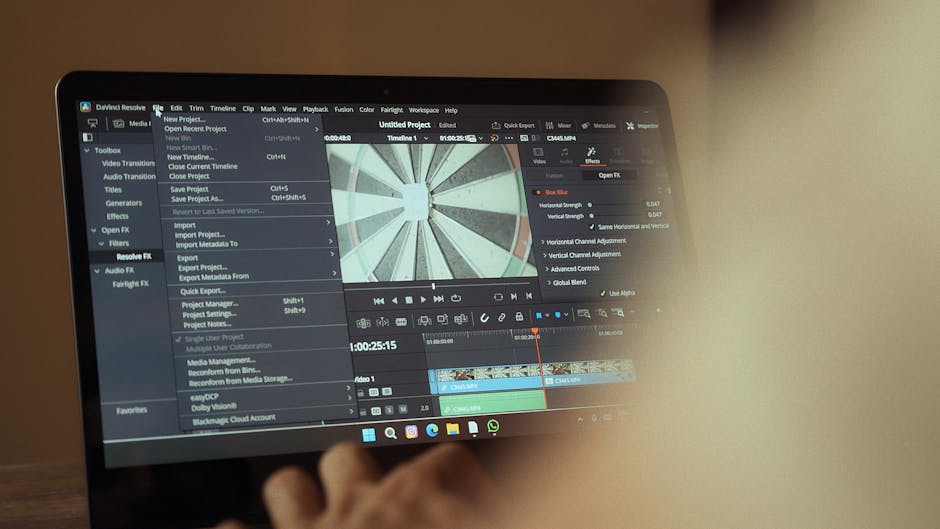

ByteDance has begun rolling out Dreamina Seedance 2.0, its latest AI video generation model, inside the CapCut editing platform and related creative products. Built to let creators draft, edit, and sync short audio-visual clips from prompts, images, or reference footage, Seedance 2.0 represents the next wave of AI-powered creative tooling: faster ideation, assisted editing, and on-platform safety measures aimed at reducing misuse.

Why Dreamina Seedance 2.0 matters for creators and brands

The arrival of this AI video generation model signals a change in how creators approach pre-production and editing. Historically, generative video models have struggled with motion fidelity, lighting consistency, and realistic textures across multiple camera angles. ByteDance says Seedance 2.0 improves in those areas, and the model focuses on short-form, production-ready results that fit into common creator workflows.

Key practical benefits include:

- Rapid prototyping: Generate early concept clips from a few descriptive words or rough sketches to test tone and pacing before filming.

- Augmented editing: Use AI to enhance or correct existing footage, for example by improving lighting, adding realistic motion elements, or fixing continuity errors.

- Cross-format support: Seedance 2.0 supports multiple aspect ratios, enabling creators to output content for social platforms with fewer manual adjustments.

What can Dreamina Seedance 2.0 do for creators?

Seedance 2.0 is designed for short clips (up to 15 seconds at launch) and supports six aspect ratios, making it useful for platform-native social content. The model can generate footage from a text prompt alone, with no reference images required, and can also accept images or short reference videos to guide style and composition. ByteDance emphasizes realistic rendering of textures, motion, and lighting across different viewing angles, which helps with both fully generated scenes and AI-assisted edits of user footage.

Typical use cases

- Concept validation: Test camera moves, framing, and choreography before committing resources to a full shoot.

- How-to and tutorial content: Generate concise visual sequences for cooking, fitness, or tech demos when live-action capture is costly or slow.

- Product overviews: Create stylized product shots and motion sequences for ads or catalog clips.

- Action and motion tests: Produce short action-focused scenes that previously challenged earlier video generation models.

Where the model is available and how it’s rolling out

The rollout begins within CapCut for selected markets, including Brazil, Indonesia, Malaysia, Mexico, the Philippines, Thailand, and Vietnam, and will expand over time. In China, ByteDance has made the model available via its Jianying app. Within CapCut, Seedance 2.0 appears in areas such as the AI Video editing panel and the Video Studio generation tools. ByteDance also plans to enable the model on its broader AI generation platform and marketing tools, positioning it as both a creator feature and a marketing asset.

Why the phased approach?

Phased deployments let ByteDance tune safety policies, moderation workflows, and market-specific controls before a global launch. Video generation has immediate implications for privacy, likeness rights, and copyright, so a careful rollout lets the company iterate on policies and technical mitigations under real-world conditions.

How Seedance 2.0 addresses safety and copyright

Generative video models raise a range of legal and ethical questions. To reduce abuse and protect rights holders, Seedance 2.0 includes several guardrails:

- Face restrictions: The model is restricted from generating videos from images or footage containing real, identifiable faces, reducing the risk of deepfake-style misuse.

- IP protections: CapCut will block attempts to generate content that imitates copyrighted characters or media without authorization.

- Invisible watermarking: Outputs from the model carry an imperceptible watermark, enabling platforms and rights holders to identify content produced by the system when it is shared off-platform.

These controls are not perfect, and they will evolve. ByteDance plans to work with experts and creative communities to refine restrictions, improve detection, and respond to reported issues. For creators and brands, this means a balance between creative power and platform-enforced responsibility.
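ByteDance has not described how its invisible watermark works, so the following is only a toy illustration of the general idea that a mark can be hidden in pixel data without visibly changing it. It uses a simple least-significant-bit (LSB) scheme over a flat list of 8-bit values; the function names and the "AI01" tag are hypothetical. Production video watermarks are designed to survive compression, cropping, and re-encoding, which a plain LSB mark would not.

```python
# Toy least-significant-bit (LSB) watermark over raw 8-bit pixel values.
# Illustrative only: this is NOT ByteDance's method. Function names and the
# "AI01" tag are hypothetical, and an LSB mark would not survive re-encoding,
# resizing, or compression.


def embed(pixels: list[int], bits: list[int]) -> list[int]:
    """Overwrite the lowest bit of the first len(bits) pixel values."""
    if len(bits) > len(pixels):
        raise ValueError("payload longer than carrier")
    marked = list(pixels)  # copy so the original frame is untouched
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & 0xFE) | bit  # clear lowest bit, then set it
    return marked


def extract(pixels: list[int], n_bits: int) -> list[int]:
    """Read back the lowest bit of the first n_bits pixel values."""
    return [p & 1 for p in pixels[:n_bits]]


def to_bits(tag: str) -> list[int]:
    """Encode an ASCII tag as a flat list of bits, most significant bit first."""
    return [(byte >> shift) & 1 for byte in tag.encode("ascii") for shift in range(7, -1, -1)]


def from_bits(bits: list[int]) -> str:
    """Decode groups of 8 bits (MSB first) back into an ASCII string."""
    data = bytes(
        int("".join(map(str, bits[i:i + 8])), 2) for i in range(0, len(bits), 8)
    )
    return data.decode("ascii")


if __name__ == "__main__":
    frame = [200, 13, 77, 154] * 16  # 64 fake pixel values standing in for a frame
    payload = to_bits("AI01")        # hypothetical 4-character tag, 32 bits
    marked = embed(frame, payload)
    # Each value changes by at most 1, far below what a viewer could notice.
    assert max(abs(a - b) for a, b in zip(frame, marked)) <= 1
    print(from_bits(extract(marked, len(payload))))  # prints AI01
```

Detection here only works because the reader knows exactly where the bits sit and how long the tag is; robust schemes are generally built so the mark persists after the file is re-encoded or trimmed.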

What limitations creators should expect

Despite improvements, current generative video systems are still constrained in several ways:

- Short duration: At launch, the model is limited to clips of up to 15 seconds, which constrains longer-form storytelling.

- Context and continuity: Maintaining frame-to-frame continuity over longer sequences remains a technical challenge.

- Realism limits: While Seedance 2.0 improves realism, complex scenes with multiple subjects can still show artifacts, especially when pushing for strict photorealism.

Creators should use the model as a complement to, not a replacement for, traditional production, especially for projects that require high fidelity over long durations.

How platforms will enforce rights and moderation

Invisible watermarks and automated IP checks are two layers of enforcement, but effective moderation will combine automated detection with human review and legal mechanisms. Platforms must build clear reporting channels so rights holders can flag unauthorized outputs, and they should provide transparent remediation pathways for disputed content. These systems will likely intersect with broader policy conversations around creator compensation and platform responsibility.

For background on creator rights and compensation debates influenced by generative AI, see our coverage of AI Creator Compensation: Why Platforms Must Pay Creators. For safety and legal lessons from prior high-profile cases, review AI Chatbot Safety: What the Gemini Lawsuit Teaches, which examines how litigation has shaped platform safeguards.

Technical and infrastructure implications

Generative video workloads are compute-intensive. While Seedance 2.0 targets short clips to limit resource use, broader adoption will strain inference infrastructure. This underscores the ongoing trade-offs platforms face between model capability, latency, and cost. Developers and platform engineers will need to optimize model serving, caching, and multi-device strategies—areas we explored in our reporting on On-Device AI Models: Edge AI for Private, Low-Cost Compute, which outlines approaches to decentralize compute and preserve user privacy.

How creators should adopt Seedance 2.0 responsibly

Best practices for early adopters:

- Label AI-generated content clearly when publishing, even with watermarking enabled.

- Secure permissions and licenses before generating content that references copyrighted works, trademarks, or distinctive characters.

- Use the tool for ideation and pre-visualization, then transition to filmed production for final assets when necessary.

- Monitor outputs for artifacts or inaccuracies, especially in instructional or safety-sensitive content.

Checklist for creators

- Confirm platform restrictions and allowed use cases before generating content.

- Keep source files and edit logs to demonstrate provenance if questions arise.

- Engage with the community: share feedback with platform teams to improve safety and quality.
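For the provenance item in the checklist, one lightweight approach is to record a SHA-256 hash of each source file, AI-generated draft, and final export in an append-only JSON Lines log. This is a minimal sketch, not a CapCut or Seedance feature; the helper names `log_asset` and `verify` and the log fields are hypothetical.

```python
# Hypothetical provenance helpers; not part of CapCut, Seedance, or any ByteDance API.
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large video files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def log_asset(log_path: Path, asset: Path, step: str, note: str = "") -> dict:
    """Append one JSON line describing an asset and the step that produced it."""
    entry = {
        "time_utc": datetime.now(timezone.utc).isoformat(),
        "file": asset.name,
        "sha256": sha256_of(asset),
        "step": step,  # e.g. "source footage", "AI-generated draft", "final export"
        "note": note,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry


def verify(log_path: Path, asset: Path) -> bool:
    """Return True if a logged entry has this file name and its current hash."""
    current = sha256_of(asset)
    with log_path.open(encoding="utf-8") as fh:
        entries = [json.loads(line) for line in fh if line.strip()]
    return any(e["file"] == asset.name and e["sha256"] == current for e in entries)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        clip = Path(tmp) / "draft.mp4"
        clip.write_bytes(b"placeholder bytes standing in for a video")
        log = Path(tmp) / "provenance.jsonl"
        log_asset(log, clip, "AI-generated draft", "Seedance 2.0 concept clip")
        print(verify(log, clip))  # True: file unchanged since it was logged
        clip.write_bytes(b"edited")
        print(verify(log, clip))  # False: contents no longer match the log
```

A matching hash shows only that a file is byte-for-byte identical to what was logged; any change to its bytes produces a different hash, so log each version you intend to keep.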

What this means for the broader generative AI landscape

The integration of an AI video generation model directly into a mainstream editing app marks a maturing of generative video technology. As models improve and safety tooling becomes more robust, we should expect to see a spectrum of uses—from fast-turn marketing clips to experimental art. At the same time, regulatory scrutiny and creator demands for compensation and transparency will shape platform behavior and product roadmaps.

Industry questions to watch

- How will platforms balance rapid innovation with proactive moderation and rights enforcement?

- Will invisible watermarking and metadata standards become industry norms for identifying synthetic media?

- How will creator compensation models adapt to AI systems that can produce derivative or transformative works?

Conclusion: A pragmatic step forward for creators

Dreamina Seedance 2.0 brings notable progress to AI-powered video generation with a pragmatic, safety-conscious launch strategy. It empowers creators to prototype faster and augment their editing workflows while embedding guardrails to reduce misuse. As the model expands to additional markets and ByteDance iterates on safety and quality, creators, platforms, and rights holders will need to collaborate closely to realize the potential of AI video tools while managing legal and ethical risks.

Ready to experiment with AI video?

Try Seedance 2.0 for rapid ideation, but follow the checklist above and respect platform rules. For continued coverage of generative AI trends, safety, and creator economics, subscribe to Artificial Intel News and explore related reporting on creator rights and platform governance.
