AI Auto Mode for Coding: Safer Autonomous Developer Tools

As AI systems take on more of the repetitive and contextual tasks in software development, developer teams face a recurring trade-off: maximize speed with autonomous execution, or retain strict human oversight at the cost of friction. AI auto mode for coding is an emerging pattern that aims to thread this needle by letting models decide which actions are safe to take on their own and which require human approval. When implemented well, auto mode boosts productivity while limiting a team's exposure to costly mistakes.

What is AI auto mode for coding and how does it work?

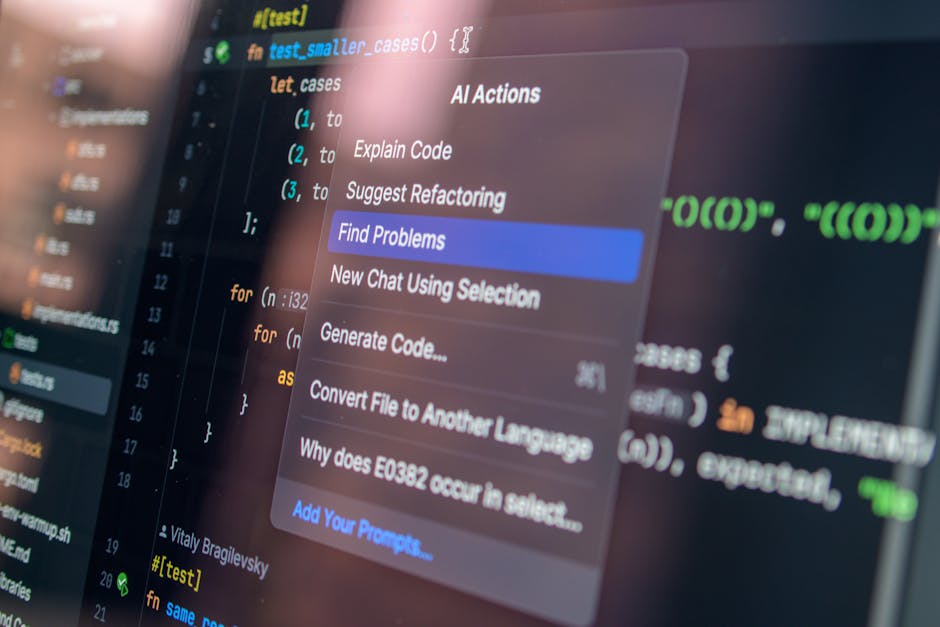

AI auto mode for coding is a configuration for developer-facing AI tools that lets the model execute certain actions autonomously. Rather than asking the user to approve every action, auto mode relies on an internal safety review process to decide whether an action should proceed automatically, be blocked, or be flagged for human confirmation.

Core components typically include:

- Action orchestration: the ability for the model to sequence operations (e.g., run tests, create branches, commit changes).

- Safety review layer: an automated check that evaluates each action for policy violations, security risks, or prompt injection attempts.

- Isolation controls: sandboxed or staging environments that limit side effects when actions are executed automatically.

Auto mode is not absolute autonomy. It is a calibrated delegation: routine, low-risk tasks proceed automatically, while high-risk actions are intercepted and escalated.

Why does balancing speed and control matter?

Developer workflows thrive on iteration speed. Small automation gains compound: faster branch creation, quicker test runs, instant formatting and lint fixes, and automated dependency updates all reduce cognitive load. But misplaced trust in an autonomous system can introduce serious problems:

Potential risks of unchecked autonomy

- Unintended production changes: an automated merge or deployment triggered by a misinterpreted prompt can break live systems.

- Security exposures: leaked secrets, unsafe network calls, or injection of malicious code paths.

- Prompt injection and adversarial content: hidden or specially crafted inputs that cause the model to perform actions outside user intent.

- Compliance and auditability gaps: when actions are taken without clear human approvals, tracing decisions for audits becomes harder.

Benefits of carefully designed auto mode

When combined with strong safeguards, AI auto mode for coding can:

- Reduce manual approvals for repetitive tasks.

- Speed up CI loops by running or fixing tests automatically.

- Lower cognitive load and context switching for engineers.

- Enable more powerful agentic workflows that compose multiple services securely.

How does an auto mode enforce safety?

A practical safety architecture for autonomous execution usually has several overlapping defenses:

1. Action-level policy checks

Before an action runs, the safety layer verifies it against predefined rules: does this action touch production resources, modify access controls, or handle secrets? Actions that intersect with sensitive resources are either blocked or require explicit human consent.
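As a rough illustration of such a check, the sketch below tags each action with the kinds of resources it touches and returns the strictest verdict any tag triggers. The `Action` shape, the tag names, and the `SENSITIVE_TAGS` table are all hypothetical; a real safety layer would derive tags from the action itself rather than trust the caller.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    CONFIRM = "confirm"  # pause and ask a human
    BLOCK = "block"


@dataclass(frozen=True)
class Action:
    name: str    # e.g. "run_tests" or "deploy"
    target: str  # the resource the action touches
    tags: frozenset = field(default_factory=frozenset)


# Hypothetical rule table: resource tag -> verdict it triggers.
SENSITIVE_TAGS = {
    "production": Verdict.CONFIRM,
    "access_control": Verdict.CONFIRM,
    "secrets": Verdict.BLOCK,
}

_SEVERITY = {Verdict.ALLOW: 0, Verdict.CONFIRM: 1, Verdict.BLOCK: 2}


def check_action(action: Action) -> Verdict:
    """Return the strictest verdict triggered by any of the action's tags."""
    verdict = Verdict.ALLOW
    for tag in action.tags:
        candidate = SENSITIVE_TAGS.get(tag, Verdict.ALLOW)
        if _SEVERITY[candidate] > _SEVERITY[verdict]:
            verdict = candidate
    return verdict
```

Under these assumptions, an untagged test run is allowed, a deploy tagged `production` waits for confirmation, and anything tagged `secrets` is blocked even if its other tags are harmless.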

2. Semantic analysis for prompt injection

Prompt injection occurs when malicious or unexpected content in input (for example, a code file or an online resource) appears to the model as instructions. The safety layer performs semantic checks to detect unusual command patterns or hidden directives and flags them for review.
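The semantic checks described above are hard to show in a few lines, so the sketch below substitutes a much simpler pattern-based scan. It only demonstrates where such a check sits in the flow: untrusted text goes in, suspicious snippets come out, and any hit sends the action to review. The patterns and function names are illustrative assumptions, and keyword matching alone is easy to evade.

```python
import re

# Illustrative patterns only; real detection needs far more than keyword matching.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (the )?(system|previous) prompt", re.I),
    re.compile(r"you are now\b", re.I),
    re.compile(r"curl\s+[^|\n]*\|\s*(ba)?sh", re.I),  # pipe-to-shell in fetched content
]


def find_injection_hints(text: str) -> list[str]:
    """Return the matched snippets that look like embedded instructions."""
    hits = []
    for pattern in SUSPICIOUS_PATTERNS:
        for match in pattern.finditer(text):
            hits.append(match.group(0))
    return hits


def needs_review(text: str) -> bool:
    """Flag the content for human review if any pattern matched."""
    return bool(find_injection_hints(text))
```

Returning the matched snippets, rather than a bare boolean, gives the reviewer something concrete to look at when an action is flagged.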

3. Behavioral heuristics and anomaly detection

Systems monitor for deviations from normal behavior, such as unexpected file system writes, unusual outbound network calls, or a sudden increase in privileged actions. Anomalies trigger halts or human alerts.
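One of the simplest heuristics of this kind is a rate limit on privileged actions. The sketch below keeps a sliding window of timestamps and signals a halt once the count inside the window exceeds a threshold. The class name and the default limits are assumptions chosen for illustration, not recommended values.

```python
from collections import deque


class PrivilegedActionMonitor:
    """Sliding-window counter that signals a halt on bursts of privileged actions."""

    def __init__(self, max_events: int = 3, window_seconds: float = 60.0):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, timestamp: float) -> bool:
        """Record one privileged action; return True if the agent should be halted."""
        self._timestamps.append(timestamp)
        # Drop events that have fallen out of the window.
        while self._timestamps and timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_events
```

Passing the timestamp in explicitly, instead of reading the clock inside `record`, keeps the monitor easy to test and to replay against historical logs.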

4. Sandboxing and ephemeral credentials

Running actions in isolated environments with short-lived credentials reduces blast radius if something goes wrong. For example, the system should prefer staging deployments and ephemeral test runners rather than direct production changes.
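To make the idea of short-lived credentials concrete, here is a minimal sketch of a token that is bound to one scope and expires after a fixed time. The `EphemeralCredential` type, the scope strings, and the five-minute default are hypothetical; in practice a secrets manager or cloud identity service would issue and verify such tokens.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EphemeralCredential:
    token: str
    scope: str         # hypothetical scope string, e.g. "staging:deploy"
    expires_at: float  # seconds since the epoch

    def is_valid(self, scope: str, now: Optional[float] = None) -> bool:
        """Valid only for the exact scope it was issued for, and only until expiry."""
        now = time.time() if now is None else now
        return scope == self.scope and now < self.expires_at


def issue_credential(
    scope: str, ttl_seconds: float = 300.0, now: Optional[float] = None
) -> EphemeralCredential:
    """Issue a random token for one scope that expires after ttl_seconds."""
    now = time.time() if now is None else now
    return EphemeralCredential(secrets.token_urlsafe(32), scope, now + ttl_seconds)
```

A credential issued for a staging deploy is rejected for a production scope and stops working once its lifetime has passed, which is what limits the blast radius if the agent misbehaves.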

How should teams adopt AI auto mode for coding?

Rolling out auto mode safely requires both technical controls and organizational changes. Below are recommended steps to adopt autonomous developer features responsibly.

Best practices (recommended)

- Start in read-only or non-production environments. Test the model’s decision-making without permitting side effects.

- Define clear action taxonomies. Classify actions by risk level (e.g., low: code formatting; medium: merge PRs; high: change infra configs).

- Use incremental delegation. Begin by letting the model auto-approve low-risk tasks, then gradually expand scope as confidence grows.

- Require human confirmation for high-risk operations and privileged resources.

- Maintain auditable logs of every action, the safety checks that ran, and why each action was allowed or blocked.

- Integrate secrets management and avoid storing credentials in places that AI agents can access directly.

- Run periodic red-team and adversarial testing focused on prompt injection and malicious inputs.
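Several of these practices can be combined in one small function: a risk taxonomy, a delegation ceiling that is raised over time, and a decision record suitable for an audit log. The sketch below assumes the three example actions from the list above. The action names, the `decide` function, and the record fields are illustrative rather than any tool's actual schema.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical taxonomy, using the example actions from the list above.
TAXONOMY = {
    "format_code": Risk.LOW,
    "merge_pr": Risk.MEDIUM,
    "change_infra_config": Risk.HIGH,
}


def decide(action: str, auto_approve_ceiling: Risk) -> dict:
    """Return an audit-ready record of the decision for one action."""
    # Unclassified actions are treated as high risk.
    risk = TAXONOMY.get(action, Risk.HIGH)
    # High-risk actions always need a human, whatever the ceiling is.
    auto = risk <= auto_approve_ceiling and risk is not Risk.HIGH
    return {
        "action": action,
        "risk": risk.name,
        "decision": "auto_approved" if auto else "needs_human_confirmation",
        "reason": f"risk {risk.name} against ceiling {auto_approve_ceiling.name}",
    }
```

A team would start with `decide(action, Risk.LOW)` and move the ceiling to `Risk.MEDIUM` only after the logged decisions have been reviewed for a while.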

Implementation checklist for engineering teams

- Map out all automated touchpoints in your CI/CD and identify production-sensitive actions.

- Configure sandbox environments and ephemeral credentials for early testing.

- Deploy monitoring and alerting that tracks agent decisions and unusual activity.

- Set up role-based policies that limit which agents or models can act on which resources.

- Define an escalation and rollback plan for when an autonomous action causes regression.
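The role-based policies in this checklist can start as a deny-by-default lookup table. The sketch below is one possible shape for it: the role names and the `area:name:operation` resource strings are made up for illustration, and matching uses shell-style wildcards from the standard library.

```python
from fnmatch import fnmatchcase

# Hypothetical roles and resource patterns in "area:name:operation" form.
ROLE_PERMISSIONS = {
    "formatter-agent": {"repo:*:write"},
    "ci-agent": {"repo:*:read", "ci:staging:*"},
}


def is_permitted(role: str, resource: str) -> bool:
    """Deny by default; allow only if a pattern granted to the role matches."""
    patterns = ROLE_PERMISSIONS.get(role, set())
    return any(fnmatchcase(resource, pattern) for pattern in patterns)
```

With this table, `ci-agent` can run jobs under `ci:staging:` but is refused `ci:production:deploy`, and a role that is not listed at all is refused everything.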

What kinds of tasks are safe to let AI execute autonomously?

High-value targets for safe automation are routine, reversible, and easy to validate. Examples include:

- Automated code formatting, linting, and style fixes that follow a repo’s rules.

- Running tests and reporting results, or auto-creating issue tickets for failing or flaky tests.

- Generating draft pull requests with proposed changes for human review before merge.

- Dependency checks and suggestions for non-critical upgrades, with approval required to actually merge.

Tasks that touch production data, billing, or access control should remain under human approval until proven safe in long-term audits.

How does this fit with agentic and autonomous tooling trends?

AI auto mode for coding is part of a broader movement toward agentic systems that can perform multi-step tasks with minimal human orchestration. Teams exploring agentic workflows should compare it with related practices, including how agentic coding automations streamline developer workflows and which operational patterns are used in agent orchestration.

Relevant internal reads include:

- Agentic Coding Automations: Streamlining Developer Workflows — practical patterns for composing multi-step developer tasks safely.

- AI Agent Workflows: Inside Garry Tan’s gstack Setup — operational details of agent orchestration and sandboxing strategies.

- Secure AI Agents: NanoClaw’s Open-Source Breakthrough — examples of safety-first design for autonomous agents.

How should product teams design an effective safety layer?

Product teams should treat safety as a product requirement on par with performance or UX. Important design principles include:

- Transparency: clearly surface why the model allowed or blocked an action to engineers and auditors.

- Configurability: let teams tune which action categories are auto-allowed and which require approvals.

- Explainability: provide human-readable rationales for automated decisions so maintainers can quickly assess correctness.

- Fail-safe defaults: when in doubt, block or require human confirmation rather than proceed.
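Fail-safe defaults and explainability can be sketched together. In the example below, each safety check returns a pass/fail flag plus a human-readable reason, and anything uncertain (a failed check, a check that crashes, or no checks at all) falls back to human confirmation. The `evaluate` function and the check signature are assumptions made for this example.

```python
from typing import Callable, Iterable, List, Tuple

# A check takes an action name and returns (passed, human-readable reason).
Check = Callable[[str], Tuple[bool, str]]


def evaluate(action: str, checks: Iterable[Check]) -> Tuple[str, List[str]]:
    """Run every check; return (decision, rationale lines)."""
    rationale: List[str] = []
    decision = "allow"
    for check in checks:
        try:
            ok, reason = check(action)
        except Exception as exc:
            # A broken check must never silently allow the action.
            name = getattr(check, "__name__", "check")
            ok, reason = False, f"{name} raised {exc!r}"
        rationale.append(reason)
        if not ok:
            decision = "confirm"
    if not rationale:
        # No checks ran, so there is no evidence the action is safe.
        decision = "confirm"
        rationale.append("no safety checks configured")
    return decision, rationale
```

The rationale list is what would be surfaced to engineers and auditors, so every path through the function adds at least one line to it.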

What questions should security and legal teams ask?

- Does the auto mode log every decision and provide tamper-evident audit trails?

- Are there controls preventing exposure of secrets or PII through automated actions?

- How does the system detect and mitigate prompt injection or adversarial content?

- What rollback and incident response processes are in place for erroneous autonomous actions?
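For the first question, one common way to make a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below shows the idea with SHA-256 from the standard library. The entry layout and function names are assumptions, and it is not a complete audit system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record."""
    payload = prev_hash + json.dumps(record, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record whose hash depends on everything before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

The chain only shows tampering if the latest hash is also stored somewhere the agent cannot write to. Otherwise, anyone who can edit the log can rebuild the whole chain.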

Looking ahead: the future of autonomous developer tools

Autonomous coding capabilities are likely to accelerate. We can expect improvements in model understanding of developer intent, more sophisticated safety policies, and richer integrations across CI/CD and observability tooling. Two parallel trends will determine adoption speed:

- Advances in model evaluation and adversarial robustness that reduce false positives and false negatives in safety checks.

- Org-level maturity in processes for auditing, governance, and incident response around agentic actions.

When these trends align, teams will be able to delegate a larger portion of the development lifecycle to AI while preserving reliability and compliance.

Conclusion

AI auto mode for coding promises significant developer productivity gains, but only when implemented with layered safety, sandboxing, and clear governance. Start small, favor reversible actions, invest in monitoring and audit trails, and incrementally expand autonomy as confidence grows. With the right controls, teams can harness autonomous coding to reduce toil while protecting systems and data.

Next steps and call to action

Ready to explore AI auto mode for coding in your organization? Begin with an isolated pilot: run auto mode in sandboxed repositories, log every decision, and iterate on your safety policies. If you want practical guidance on designing agentic workflows or hardening safety checks, read our deep dive on agentic coding automations and review operational patterns in AI agent workflows. Implement a pilot this quarter, collect metrics on time saved and incidents avoided, and share the results with your engineering and security stakeholders.

Take action: start a sandbox pilot this week, document the first 30 days, then iterate.