Secure AI Agents: NanoClaw’s Open-Source Breakthrough

In agentic AI, security and trust are no longer optional. NanoClaw, a compact open-source project born from a weekend of intensive development, has emerged as a practical blueprint for building secure AI agents that prioritize a minimal attack surface, auditable code, and container-based isolation. This post analyzes the technical choices behind NanoClaw, the community response that accelerated its growth, and the commercial pathways its creators are exploring.

Why secure AI agents matter

AI agents are increasingly used to automate tasks across marketing, product operations, customer support, and engineering workflows. Their ability to access accounts, read messages, and interact with external systems makes them powerful productivity tools, and dangerous privacy liabilities when design choices are permissive or opaque.

Key risks include:

- Excessive data access: agents may store or exfiltrate private messages and credentials.

- Large, unvetted codebases: heavy dependency trees make supply-chain validation difficult.

- Lack of isolation: once installed, agents can access more of a host system than intended.

Addressing these issues requires a combination of secure defaults, transparent code, and technical isolation mechanisms such as containerized sandboxes.

How did NanoClaw approach security and simplicity?

NanoClaw was created with three core principles in mind:

- Minimalism: the project aims for the smallest possible codebase to make auditing feasible.

- Isolation: agents run in restricted environments that explicitly grant only necessary permissions.

- Openness: the project remains fully open-source so a community can inspect and contribute.

By deliberately limiting the number of lines of code and the number of bundled packages, the developers reduced the surface area for bugs and supply-chain vulnerabilities. That choice also speeds code review and makes third-party validation realistic for teams that need compliance assurances.

What makes an AI agent secure?

Short answer: clear permission boundaries, auditable code, and robust runtime isolation.

Permissions and least privilege

A secure agent requests only the data and accounts required for a single task. This prevents broad access to a user’s entire message history or storage. Enforcing least privilege avoids accidental leakage of unrelated personal data.
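As a rough illustration of least privilege, the sketch below models a per-task grant and refuses any scope that was not explicitly listed. The `TaskGrant` class, the `require_scope` helper, and the scope strings are hypothetical names chosen for this example; they are not taken from NanoClaw's code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskGrant:
    """Scopes granted to an agent for exactly one task (hypothetical model)."""
    task: str
    scopes: frozenset[str]


class ScopeError(PermissionError):
    """Raised when an agent asks for a scope outside its grant."""


def require_scope(grant: TaskGrant, scope: str) -> None:
    # Deny by default: anything not explicitly granted is refused.
    if scope not in grant.scopes:
        raise ScopeError(f"task {grant.task!r} is not granted {scope!r}")


# Illustrative grant: the agent may read the inbox and nothing else.
grant = TaskGrant(task="summarize-inbox", scopes=frozenset({"mail:read:inbox"}))
require_scope(grant, "mail:read:inbox")  # allowed, returns None
```

Calling `require_scope(grant, "mail:read:archive")` with the same grant raises `ScopeError`, which is the point of the design: access to unrelated data fails loudly instead of succeeding silently.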

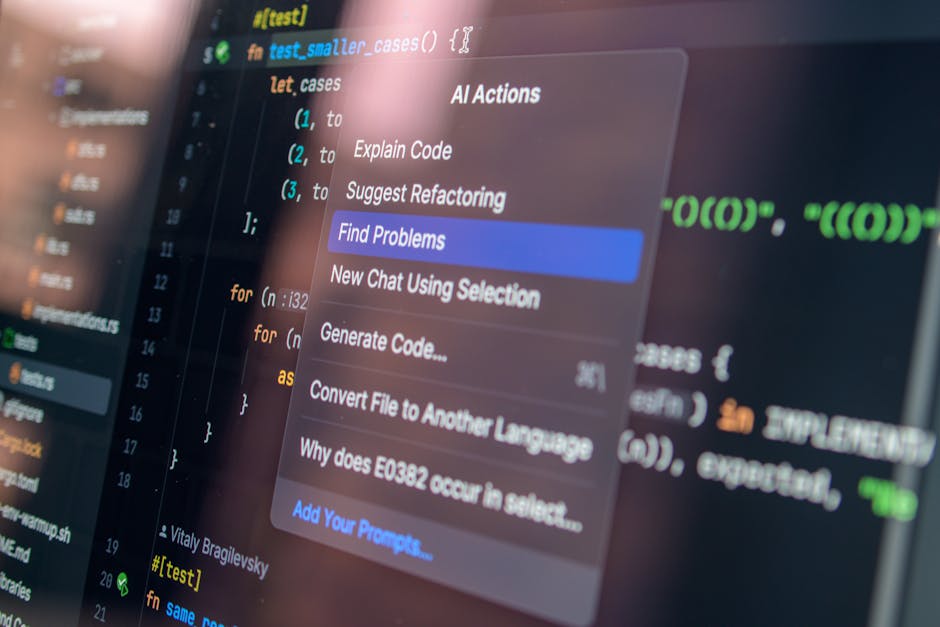

Auditable, compact code

Large monolithic projects with thousands of dependencies are difficult to vet. A compact project, intentionally limited to essential packages, lets security teams and open-source contributors trace behavior and identify suspicious components quickly.
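One way to make "compact" measurable is to count everything a project pulls in transitively, not only its direct dependencies. The following is a minimal sketch that assumes the dependency graph has already been exported into a dictionary; the package names are invented for illustration.

```python
def transitive_dependencies(graph: dict[str, list[str]], root: str) -> set[str]:
    """Return every package reachable from root, excluding root itself."""
    seen: set[str] = set()
    stack = list(graph.get(root, []))
    while stack:
        pkg = stack.pop()
        if pkg in seen:
            continue
        seen.add(pkg)
        stack.extend(graph.get(pkg, []))
    seen.discard(root)  # guard against cycles that lead back to root
    return seen


# Hypothetical dependency graph: package -> direct dependencies.
graph = {
    "agent": ["http-client", "sandbox-runner"],
    "http-client": ["tls-lib"],
    "sandbox-runner": ["tls-lib"],
    "tls-lib": [],
}
deps = transitive_dependencies(graph, "agent")
print(len(deps), sorted(deps))  # 3 ['http-client', 'sandbox-runner', 'tls-lib']
```

The size of the returned set is the number of packages a reviewer actually has to trust, which is usually the more honest metric when comparing agent projects.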

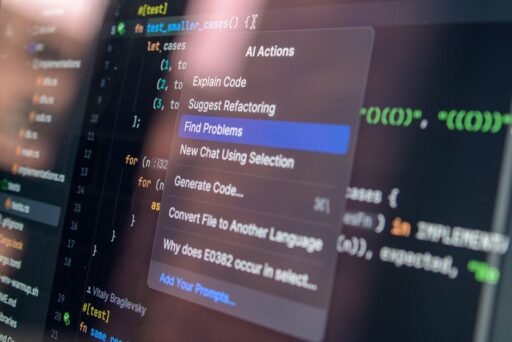

Containerized sandboxes and runtime isolation

Runtime isolation confines an agent to a controlled environment so that even if the agent is compromised, the attacker cannot roam the host system. Container-based sandboxes can be configured to limit network access, filesystem visibility, and runtime capabilities. This approach combines operational flexibility with stronger safety guarantees than running code directly on a developer’s workstation.
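To make those restrictions concrete, here is a sketch that assembles a locked-down `docker run` command. It assumes Docker as the container runtime, which the discussion above does not specify, and the image name, workspace path, and resource limits are placeholders. It is not NanoClaw's actual configuration.

```python
import shlex


def sandbox_command(image: str, workdir: str, allow_network: bool = False) -> list[str]:
    """Build a `docker run` argv that restricts what the agent container can do."""
    cmd = [
        "docker", "run", "--rm",
        "--read-only",                          # root filesystem is read-only
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--pids-limit", "128",                  # cap the number of processes
        "--memory", "512m",                     # cap memory use
        # Only this one host directory is visible inside the container.
        "--mount", f"type=bind,source={workdir},target=/workspace",
    ]
    if not allow_network:
        cmd += ["--network", "none"]            # no network unless explicitly allowed
    cmd.append(image)
    return cmd


# Placeholder image and path; print the command instead of executing it.
print(shlex.join(sandbox_command("example/agent:latest", "/srv/agent-work")))
```

Building the command as a list rather than a shell string avoids quoting mistakes, and keeping network access opt-in means a forgotten argument fails closed.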

Technical choices: container sandboxes and standard platforms

One practical lesson from NanoClaw’s evolution is the value of adopting industry-standard container technologies rather than proprietary or platform-specific tooling. When a project attracts a community, relying on widely supported container platforms helps ensure broader compatibility and easier maintenance by contributors across environments.

Benefits of standard container platforms

- Broader developer familiarity and community support.

- Better tooling for orchestration, monitoring, and security scanning.

- Easier transition from experimental local setups to enterprise deployments.

Community momentum and responsible stewardship

Open-source projects that solve pressing problems often attract rapid attention. That momentum can be an accelerator but also a responsibility: maintainers must balance accessibility with careful governance. NanoClaw’s maintainers emphasized keeping the project small and auditable while welcoming contributions and integration patches from the broader developer base.

When a community expands quickly, practical steps to maintain safety include:

- Clear contribution guidelines and security reporting channels.

- Automated tooling for dependency scanning and continuous integration checks.

- Documentation that explains permission models and safe deployment practices.

How can teams adopt secure, lightweight agents?

For organizations evaluating agentic AI, the following roadmap helps integrate secure agents into production safely.

- Start with a minimal open-source agent and audit its dependencies.

- Run the agent inside a containerized sandbox with explicit mounts and network policies.

- Limit agent scopes to task-specific credentials and rotate keys frequently.

- Implement monitoring and alerting for unusual agent activity.

- Establish human-in-the-loop checkpoints for sensitive tasks.
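The human-in-the-loop step in the roadmap above can be sketched as a small gate that sits in front of every action. The action names and the `approve` callback are hypothetical; in practice the callback might post to a chat channel or a ticket queue and wait for a reviewer.

```python
from typing import Callable

# Hypothetical set of actions that always need a human decision.
SENSITIVE_ACTIONS = {"send_email", "delete_file", "make_payment"}


def run_action(
    action: str,
    execute: Callable[[], str],
    approve: Callable[[str], bool],
) -> str:
    """Run execute() only if the action is non-sensitive or a human approves it."""
    if action in SENSITIVE_ACTIONS and not approve(action):
        return f"blocked: {action} was not approved"
    return execute()


def deny_all(action: str) -> bool:
    """Stand-in reviewer that rejects every request."""
    return False


print(run_action("read_calendar", lambda: "ok", deny_all))  # ok
print(run_action("send_email", lambda: "sent", deny_all))   # blocked: send_email was not approved
```

Keeping the sensitive list as an explicit allow-to-ask set is a design choice for readability; a stricter variant would require approval for anything not on a known-safe list.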

How does this relate to enterprise adoption and scaling?

Companies looking to adopt agentic AI will want both turnkey solutions and advisory services. One commercial path is to offer a supported distribution of an open-source agent with enterprise features: hardened runtime configurations, deployment templates, and managed services that embed forward-deployed specialists to help integrate agents into production workflows.

For background on how enterprise AI agents are emerging as a startup opportunity and the operational challenges they present, see our analysis of Enterprise AI Agents: The Next Big Startup Opportunity and the practical considerations in Scaling Agentic AI: Intelligence, Latency, and Cost.

What are the common objections and limitations?

There are several legitimate challenges organizations should plan for:

- Feature trade-offs: minimal agents may lack convenience features found in larger projects.

- Support burden: open-source projects need operational support and SLAs before enterprise adoption.

- Competition: the market for secure agent tooling is crowded and evolving rapidly.

Mitigation strategies

To address these issues, teams can combine a small core agent with modular extensions, operate a paid support tier, and maintain a rigorous roadmap that prioritizes security and interoperability.

Case study: transitioning from prototype to company

When a prototype gains rapid adoption, its creators face decisions about governance and monetization. In many successful open-source transitions, the maintainers preserve a free core project while offering paid enterprise features or professional services. Those services typically include:

- Managed deployments and maintenance.

- Professional integration and training.

- Custom security hardening and audits.

This model supports community trust while creating sustainable revenue to fund long-term development and security updates. For more on commercializing agentic AI responsibly, read our piece on AI Agent Security: Risks, Protections & Best Practices.

Practical checklist for teams evaluating open-source agents

Use this short checklist when vetting any open-source agent project:

- Audit dependency tree and license compatibility.

- Confirm presence of attack-surface reduction strategies (e.g., minimal codebase, least privilege).

- Run the agent in an isolated sandbox with observability hooks.

- Review contribution and security response policies.

- Plan for commercial support or internal resource allocation if adopting at scale.
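For the observability item in the checklist above, one simple hook is a sliding-window counter that flags bursts of agent activity. This is an illustrative sketch: the `ActivityMonitor` class and its thresholds are assumptions, and a real deployment would more likely forward events to an existing monitoring stack.

```python
from collections import deque


class ActivityMonitor:
    """Flag when more than max_events occur within window_seconds."""

    def __init__(self, max_events: int, window_seconds: float) -> None:
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._timestamps: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one event; return True if the rate threshold is exceeded."""
        self._timestamps.append(timestamp)
        # Discard events that have aged out of the window.
        while timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_events


# Illustrative thresholds: more than 3 actions in 60 seconds is unusual.
monitor = ActivityMonitor(max_events=3, window_seconds=60)
alerts = [monitor.record(t) for t in (0, 1, 2, 3)]
print(alerts)  # [False, False, False, True]
```

Timestamps are passed in rather than read from the clock so the monitor can be tested deterministically and fed from container logs after the fact.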

Looking ahead: the future of secure, lightweight agents

Secure agents that combine minimal, auditable code with robust container isolation will be an attractive foundation for many enterprise workflows. The most successful projects will balance openness, developer ergonomics, and enterprise-grade operational tools. As communities and ecosystem partners contribute integrations and runtime improvements, expect to see a spectrum of offerings from hobbyist toolkits to production-ready managed services.

Final thoughts and next steps

NanoClaw’s rapid rise illustrates a few core truths about agentic AI: developers value simplicity, security matters to users, and community attention can accelerate both innovation and responsibility. Organizations planning to adopt AI agents should prioritize auditable design, containerized isolation, and clear governance to mitigate risk and unlock productivity gains.

Want to explore secure agent adoption?

If you’re evaluating agentic AI for your team, start by auditing candidate projects against the checklist above, test them inside sandboxed environments, and consider partnering with experts to harden deployments. For further reading on enterprise agent opportunities and scaling considerations, see Enterprise AI Agents: The Next Big Startup Opportunity and Scaling Agentic AI: Intelligence, Latency, and Cost.

Subscribe to Artificial Intel News for deeper analysis, technical guides, and security best practices on deploying secure AI agents, or contact our team to discuss a pilot that puts safe, auditable agent workflows to work in your organization.