AI Code Review for Developers: Anthropic’s New Tool

The growing adoption of AI-assisted development, sometimes called “vibe coding,” has sharply accelerated code generation, but it has also introduced new classes of bugs, security gaps, and review bottlenecks. Anthropic’s AI code review product targets that pain point: it analyzes pull requests automatically, flags logic errors, and surfaces the highest-risk issues before code merges into production.

What is Anthropic’s Code Review?

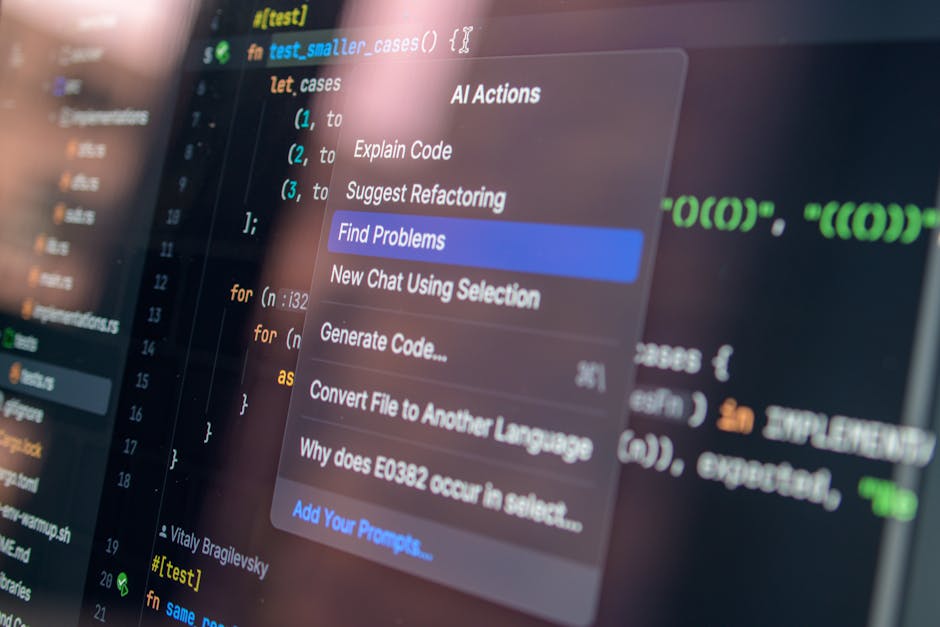

Anthropic’s Code Review is an enterprise-focused, AI-powered service that analyzes pull requests, leaves inline comments, and explains its reasoning about potential bugs and vulnerabilities. It emphasizes logical correctness over stylistic feedback, using a multi-agent architecture that examines code from multiple angles and then aggregates the findings into a ranked list for engineering teams.

How does AI code review catch bugs and speed engineering?

Engineering leaders ask this question often, and the answer is central to the product’s value. Anthropic’s approach combines automated reasoning over the change, cross-file context analysis, and prioritized triage, so developers and reviewers spend less time on low-value comments and more time fixing the most impactful issues.

Key components of how the system reduces defects and accelerates delivery include:

- Automated pull-request inspection: The AI scans diffs and related files to detect logic errors, incorrect assumptions, and potential regressions.

- Severity labeling: Issues are labeled and color-coded by severity so teams can triage efficiently.

- Actionable explanations: Each finding includes a step-by-step rationale and suggested fixes, reducing back-and-forth between authors and reviewers.

- Parallel multi-agent analysis: Multiple agents evaluate the same change from different perspectives (security, correctness, dependency impact) and a final agent consolidates results and removes duplicates.

Key features and what they mean for teams

1. Git-based integration

The tool integrates with common code hosting platforms to automatically analyze pull requests and post comments inline, minimizing workflow friction for engineers and reviewers.

2. Logic-first feedback

Rather than focusing on formatting or style, the system prioritizes logical errors and high-impact bugs. That makes the feedback immediately actionable and reduces reviewer fatigue.

3. Multi-dimensional severity and context

Findings are labeled (for example: high, medium, low) and annotated with context about why the issue matters. This helps engineering leads prioritize fixes that reduce risk to customers and production systems.

4. Custom checks and enterprise policies

Engineering leads can enable default review behavior across teams and add organization-specific checks tied to internal best practices and security baselines.
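To make the idea of organization-specific checks concrete, here is a minimal sketch of how a team might express two internal rules as plain functions that return severity-labeled findings. Everything in it is hypothetical: the `Finding` type, the check names, and the `run_checks` helper are illustrative assumptions, not part of Anthropic’s product or its configuration format.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Finding:
    path: str
    line: int       # position within added_lines, not the file's real line number
    severity: str   # "high", "medium", or "low"
    message: str


# A check receives a file path and the lines added in a diff, and returns findings.
Check = Callable[[str, List[str]], List[Finding]]

# Hypothetical pattern for literal credentials assigned in code.
SECRET_PATTERN = re.compile(
    r"(api_key|secret|password)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
)


def no_hardcoded_secrets(path: str, added_lines: List[str]) -> List[Finding]:
    """Flag added lines that appear to assign a literal credential."""
    return [
        Finding(path, number, "high", "Possible hard-coded credential")
        for number, text in enumerate(added_lines, start=1)
        if SECRET_PATTERN.search(text)
    ]


def no_debug_prints(path: str, added_lines: List[str]) -> List[Finding]:
    """Flag added lines that start with a bare print() call."""
    return [
        Finding(path, number, "low", "Debug print left in code")
        for number, text in enumerate(added_lines, start=1)
        if text.strip().startswith("print(")
    ]


# The organization's policy is simply the list of checks it has enabled.
ORG_CHECKS: List[Check] = [no_hardcoded_secrets, no_debug_prints]


def run_checks(path: str, added_lines: List[str],
               checks: List[Check] = ORG_CHECKS) -> List[Finding]:
    """Run every enabled check and collect the findings in check order."""
    findings: List[Finding] = []
    for check in checks:
        findings.extend(check(path, added_lines))
    return findings


if __name__ == "__main__":
    for finding in run_checks("app.py", ['api_key = "abc123"', "x = 1", "print(x)"]):
        print(finding.severity, finding.line, finding.message)
```

Keeping each rule as a small function makes it easy to enable or disable checks per team. Pattern-based rules like these are far simpler than the logic-level reasoning the article describes, so treat them only as a stand-in for the shape of a custom policy.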

How the multi-agent architecture works

Anthropic’s product uses several agents operating in parallel, each specializing in a different analysis dimension: logical correctness, security heuristics, dependency impact, and historical bug patterns. A final aggregator agent deduplicates and ranks the findings to produce a concise, prioritized report. This architecture balances breadth (many perspectives) with clarity (one consolidated output).
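The fan-out and aggregation pattern described above can be sketched in a few lines. This is an illustration under stated assumptions, not Anthropic’s implementation: the three agent functions are stubs that return canned findings, and deduplicating by file and line while keeping the most severe finding is one plausible rule chosen for the example.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Iterable, List, Sequence

# Lower rank means more severe, so sorting ascending puts "high" first.
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}


@dataclass(frozen=True)
class Finding:
    path: str
    line: int
    severity: str
    message: str


# Stub agents: a real agent would analyze the diff; these return fixed findings.
def correctness_agent(diff: str) -> List[Finding]:
    return [Finding("cart.py", 42, "high", "Division by zero when cart is empty")]


def security_agent(diff: str) -> List[Finding]:
    return [
        Finding("cart.py", 42, "medium", "Unvalidated quantity reaches division"),
        Finding("api.py", 7, "low", "Verbose error message exposes internals"),
    ]


def dependency_agent(diff: str) -> List[Finding]:
    return [Finding("requirements.txt", 3, "medium", "Major version bump in dependency")]


def aggregate(batches: Iterable[List[Finding]]) -> List[Finding]:
    """Keep the most severe finding per (path, line), then sort by severity."""
    best = {}
    for batch in batches:
        for finding in batch:
            key = (finding.path, finding.line)
            current = best.get(key)
            if current is None or SEVERITY_RANK[finding.severity] < SEVERITY_RANK[current.severity]:
                best[key] = finding
    return sorted(best.values(), key=lambda f: (SEVERITY_RANK[f.severity], f.path, f.line))


Agent = Callable[[str], List[Finding]]


def review(diff: str,
           agents: Sequence[Agent] = (correctness_agent, security_agent, dependency_agent)
           ) -> List[Finding]:
    """Run every agent on the same diff in parallel, then consolidate the results."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        batches = list(pool.map(lambda agent: agent(diff), agents))
    return aggregate(batches)


if __name__ == "__main__":
    for finding in review("example diff text"):
        print(f"[{finding.severity}] {finding.path}:{finding.line} {finding.message}")
```

In this sketch the two findings on `cart.py` line 42 collapse into the single high-severity one, which is the kind of consolidation that keeps the final report short.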

Why multi-agent analysis matters

Single-pass analyses can miss cross-file interactions or historical bug patterns embedded in the repository. Multiple agents provide diverse viewpoints and help capture issues that would otherwise require deep manual review, such as race conditions, incorrect assumptions about inputs, or edge-case error handling.

Benefits for enterprise engineering teams

Enterprises using large-scale AI-assisted coding face two related challenges: a surge in pull requests generated by automated tools and a shortage of reviewer capacity. AI code review offers several enterprise benefits:

- Faster shipping: By automating initial triage and surfacing high-priority bugs sooner, teams can shorten the overall review-to-merge cycle.

- Higher code quality: Logic-focused checks help prevent classes of defects that cause outages or data issues.

- Consistent enforcement: Default and customizable checks ensure that organizational best practices are applied uniformly across teams.

- Reduced reviewer overload: Automated comments and severity ranking reduce noise and allow human reviewers to focus on design and architecture.

- Actionable developer learning: Step-by-step explanations accelerate developer understanding and help teams avoid repeat mistakes.

Integration, pricing, and performance considerations

The service integrates with popular code hosting platforms, and engineering leads can enable it to run by default for every engineer on a team. Because the system analyzes repository context and may run multiple agents in parallel, it can be resource-intensive. Pricing models for enterprise AI review services are often usage-based and vary with code complexity; organizations should evaluate ROI in terms of reduced review cycle time, fewer production incidents, and developer hours saved.

Practical adoption tips

- Start with a subset of teams or repositories to benchmark review speed and defect reduction.

- Calibrate severity thresholds to match your organization’s risk tolerance.

- Pair automated review with human oversight for complex architectural changes.
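Calibrating a severity threshold often comes down to one decision: at what level should a finding hold up a merge? The sketch below assumes the three labels used earlier (low, medium, high); the `blocks_merge` function and its default are hypothetical and not tied to any specific product setting.

```python
from typing import Iterable

# Ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high"]


def blocks_merge(finding_severities: Iterable[str], threshold: str = "high") -> bool:
    """Return True if any finding is at or above the chosen threshold."""
    minimum = SEVERITY_ORDER.index(threshold)
    return any(SEVERITY_ORDER.index(s) >= minimum for s in finding_severities)


if __name__ == "__main__":
    findings = ["low", "medium"]
    print(blocks_merge(findings))                      # risk-tolerant team
    print(blocks_merge(findings, threshold="medium"))  # stricter team
```

A pilot team might start with the stricter setting on a critical repository and relax it elsewhere once the false-positive rate is understood.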

What are the limitations and security considerations?

AI code review is a powerful assistant but not a replacement for human judgment. Limitations and risks include:

- False positives and negatives: No automated system is perfect; reviews should be audited until teams gain confidence in accuracy.

- Resource cost: Multi-agent analysis can increase compute and latency compared with simple linters.

- Data handling: Enterprises must validate how source code and metadata are processed to meet compliance and IP policies.

- Security depth: Basic checks catch many issues, but deeper security analyses may require specialized scanning tools or manual threat modeling.

Combining AI review with dedicated security tooling and internal playbooks can reduce risk and make automated findings more actionable.
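One way to audit accuracy during a pilot is to have reviewers label a sample of findings and track defects the tool missed, then compute precision and recall. The function below is a minimal sketch; the count names are assumptions about how a team might record its audit, and the numbers in the usage example are made up for illustration.

```python
from typing import Dict, Optional


def audit_summary(true_positives: int, false_positives: int,
                  missed_defects: int) -> Dict[str, Optional[float]]:
    """Summarize an audit of AI review findings.

    true_positives:  findings reviewers confirmed as real issues
    false_positives: findings reviewers rejected
    missed_defects:  real issues found later that the tool did not flag
    """
    flagged = true_positives + false_positives
    actual = true_positives + missed_defects
    return {
        # Share of flagged findings that were real issues.
        "precision": true_positives / flagged if flagged else None,
        # Share of known real issues that the tool flagged.
        "recall": true_positives / actual if actual else None,
    }


if __name__ == "__main__":
    # Illustrative counts only, not measured results.
    print(audit_summary(true_positives=8, false_positives=2, missed_defects=2))
```

Recall is only as good as the record of missed defects, so it tends to be an optimistic estimate early in a pilot.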

How teams should adopt AI-powered code review

Adoption is most effective when engineering leaders treat AI code review as an augmentation to existing processes. Recommended steps:

- Run a pilot: Select one or two repositories with representative workloads.

- Measure baseline metrics: Track pull-request cycle time, reviewer workload, and post-merge incidents.

- Configure policies: Enable default checks and add custom rules for internal concerns.

- Train developers: Share how the system explains issues so teams trust and act on findings.

- Iterate: Tune severity, false-positive suppression, and integration points.
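For the baseline-metrics step, pull-request cycle time is usually the easiest number to capture first. The sketch below computes the median hours from open to merge, assuming each pull request is a dictionary with ISO 8601 `opened_at` and `merged_at` fields; those field names are hypothetical and would need to be mapped from whatever your code host exports.

```python
from datetime import datetime
from statistics import median
from typing import Dict, List, Optional


def cycle_time_hours(opened_at: str, merged_at: str) -> float:
    """Hours between a pull request being opened and merged."""
    delta = datetime.fromisoformat(merged_at) - datetime.fromisoformat(opened_at)
    return delta.total_seconds() / 3600


def median_cycle_time(pull_requests: List[Dict[str, Optional[str]]]) -> Optional[float]:
    """Median open-to-merge time in hours, ignoring unmerged pull requests."""
    durations = [
        cycle_time_hours(pr["opened_at"], pr["merged_at"])
        for pr in pull_requests
        if pr.get("merged_at")
    ]
    return median(durations) if durations else None


if __name__ == "__main__":
    # Made-up sample data for illustration.
    sample = [
        {"opened_at": "2024-01-01T09:00:00", "merged_at": "2024-01-01T15:00:00"},
        {"opened_at": "2024-01-02T09:00:00", "merged_at": "2024-01-03T09:00:00"},
        {"opened_at": "2024-01-04T09:00:00", "merged_at": None},
        {"opened_at": "2024-01-05T10:00:00", "merged_at": "2024-01-05T12:00:00"},
    ]
    print(median_cycle_time(sample))
```

Capturing this number before the pilot starts gives a comparison point for the same calculation afterward. The median is used rather than the mean so that a few long-lived pull requests do not dominate the result.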

For teams exploring adjacent topics like agentic coding workflows and enterprise AI agents, see our prior coverage of agentic coding automations and Anthropic enterprise agents. For technical context about large context windows and multi-agent teams, read our piece on Anthropic Opus 4.6 and agent teams.

Will AI code review replace human reviewers?

Short answer: no. AI code review is designed to shift the nature of human review toward higher-level concerns. Humans will continue to be essential for architectural decisions, design trade-offs, and nuanced security judgments. AI review reduces friction on routine logic and safety checks so humans can focus on strategy and complex problem-solving.

Checklist: Is your team ready to adopt AI code review?

- Do you have reproducible CI/CD processes and accessible pull-request metadata?

- Can you pilot across a small set of repositories?

- Is there executive and engineering sponsorship to tune policies and act on findings?

- Have you planned for developer training and feedback loops to reduce false positives?

Conclusion

AI code review represents a pragmatic next step for organizations that are rapidly adopting AI-assisted development. By focusing on logic-first feedback, multi-agent analysis, and tight integration with existing workflows, these tools can reduce review bottlenecks, cut the number of high-impact bugs, and free human reviewers to concentrate on architecture and design.

Call to action

If your engineering organization is scaling AI-assisted development or experiencing a surge in pull requests, consider piloting an AI code review solution to measure impact on cycle time, bug rates, and reviewer load. Want help evaluating options or designing a pilot? Contact our editorial team for guidance and case-study insights tailored to enterprise adoption.