Anthropic Opus 4.5: What the Release Means for Memory, Code and Agents

Anthropic’s Claude Opus 4.5 is a notable step forward for large language models. The release pairs substantial changes to how the model handles memory with state-of-the-art benchmark results and product integrations aimed at real-world workflows. For teams building agentic systems, developer tools and long-context applications, Opus 4.5 brings practical changes to how a model works with codebases and spreadsheets and how it coordinates multiple agents.

What improvements does Anthropic Opus 4.5 bring?

Opus 4.5 centers on three overlapping advances: a redesigned approach to long-context memory, leading performance on coding and tool-use benchmarks, and features that enable agentic orchestration across sub-agents and documents. The combination targets both “thinking” capacity — how well the model reasons over extended context — and “working” capacity — how it manages active tasks and tools during multi-step workflows.

Performance highlights: benchmarks and coding gains

Opus 4.5 posts competitive, and in some cases category-leading, results across coding and problem-solving benchmarks. Key takeaways include:

- Breakthrough coding performance: Opus 4.5 set a milestone on SWE-bench Verified, becoming the first model reported to score above 80% on this widely used benchmark, which asks models to resolve real GitHub issues drawn from open-source Python projects. That points to stronger code generation and understanding on realistic developer tasks.

- Robust tool use and reasoning: The model performs strongly on tool-driven benchmarks and multi-step problem sets, suggesting better integration with external interfaces and more reliable tracking of tool state across steps.

- General problem solving: Gains on broad reasoning suites indicate Opus 4.5 is more reliable on complex, multi-stage challenges beyond simple retrieval or single-prompt responses.

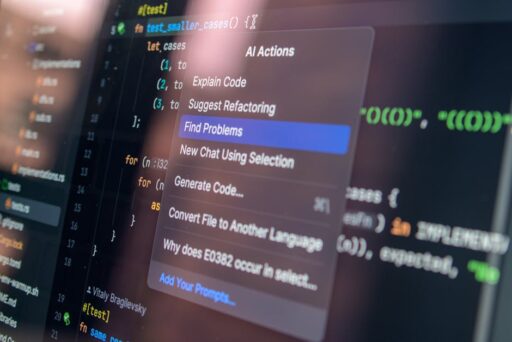

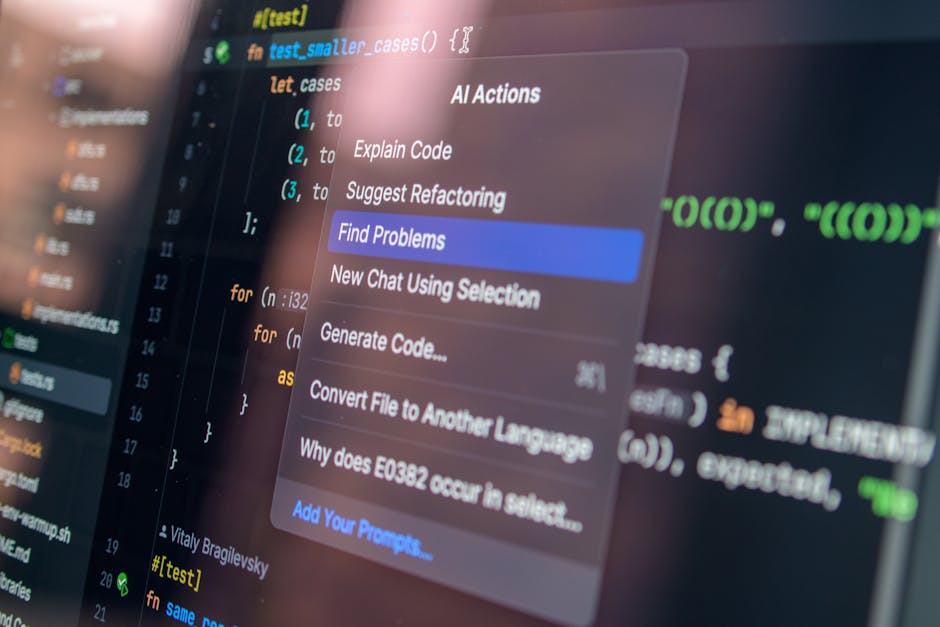

For product teams and engineers, the coding improvements should translate into fewer manual edits, more coherent changes that span multiple files, and better automated assistance in IDEs and CI pipelines.

Memory redesign: why it matters for long-context and agents

The headline change in Opus 4.5 is less about context window size than about how the model decides what to remember and how it compresses older context. Rather than relying only on ever-larger token windows, Anthropic prioritized the quality of remembered details and introduced mechanisms that compress and retain essential state without interrupting the conversation.

Two practical features that illustrate the redesign:

- Endless chat for paid users: conversations in the Claude apps can continue when a session reaches the model’s context limit. Instead of truncating the chat or stopping with an error, Claude summarizes older context into a condensed memory intended to preserve critical facts and the order of events.

- Improved quality on long requests: training changes raise the fidelity of reasoning across long documents and code repositories, helping the model keep track of salient facts and refer back to them reliably without re-prompting.
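The endless-chat behavior can be approximated in an application of your own. The sketch below is an illustration under stated assumptions, not Anthropic’s implementation: it estimates tokens with a crude four-characters-per-token rule, and `summarize` is a hypothetical placeholder for a model-written summary. Once the history exceeds a token budget, older turns are folded into a single summary message while the most recent turns are kept verbatim.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: roughly four characters per token; use a real tokenizer in practice.
    return max(1, len(text) // 4)


def summarize(messages: list[Message]) -> str:
    # Hypothetical placeholder: keeps the first sentence of each older message.
    # A production system would ask the model itself to write this summary.
    firsts = [m.text.split(".")[0].strip() for m in messages]
    return "Earlier context: " + "; ".join(f for f in firsts if f)


def compact(history: list[Message], budget: int, keep_recent: int = 4) -> list[Message]:
    """Fold older turns into one summary message once the history exceeds `budget` tokens."""
    total = sum(estimate_tokens(m.text) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history  # Under budget, or nothing old enough to fold: leave untouched.
    older, recent = history[:-keep_recent], history[-keep_recent:]
    return [Message(role="system", text=summarize(older))] + recent
```

The compacted history can still exceed the budget if the recent turns alone are large; a fuller version would compact repeatedly or shrink `keep_recent`.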

Why “memory quality” beats raw window size

Longer context windows are helpful, but they can also introduce noise and inefficiency. Anthropic’s approach with Opus 4.5 focuses on:

- Prioritization: determining which tokens and facts matter to active tasks.

- Compression: reducing older context into compact, queryable summaries.

- Retrievability: preserving structure so the model can rehydrate compressed memories when necessary.

These capabilities are crucial when Opus is used as a lead agent supervising sub-agents, inspecting large codebases, or navigating multi-sheet spreadsheets where context relevance shifts dynamically.
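The three ideas can be illustrated with a small in-memory store. This is a minimal sketch under stated assumptions: the `priority` scores are assumed to come from some relevance model (hypothetical here), the "summary" is just a truncated preview line, and `MemoryStore` is an illustrative name, not an Anthropic API. Low-priority items move to an archive (compression), a digest keeps one searchable line per archived item (a compact, queryable summary), and `rehydrate` restores an item when it becomes relevant again (retrievability).

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    item_id: str
    text: str
    priority: float  # Hypothetical relevance score in [0, 1].


@dataclass
class MemoryStore:
    active: dict[str, MemoryItem] = field(default_factory=dict)
    archive: dict[str, MemoryItem] = field(default_factory=dict)
    digest: dict[str, str] = field(default_factory=dict)  # item_id -> short preview

    def add(self, item: MemoryItem) -> None:
        self.active[item.item_id] = item

    def compress(self, threshold: float, preview_chars: int = 40) -> None:
        """Prioritize and compress: archive items below `threshold`, keeping a short preview."""
        to_archive = [k for k, v in self.active.items() if v.priority < threshold]
        for item_id in to_archive:
            item = self.active.pop(item_id)
            self.archive[item_id] = item
            self.digest[item_id] = item.text[:preview_chars]

    def search_digest(self, term: str) -> list[str]:
        """Query the compact previews for archived items mentioning `term`."""
        return [k for k, preview in self.digest.items() if term.lower() in preview.lower()]

    def rehydrate(self, item_id: str) -> MemoryItem:
        """Retrievability: move an archived item back into active memory."""
        item = self.archive.pop(item_id)
        self.digest.pop(item_id, None)
        self.active[item_id] = item
        return item
```

A typical cycle is `compress` when the active set grows too large, `search_digest` when a new request arrives, and `rehydrate` for any hits before answering.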

For deeper background on memory systems in modern LLMs and how memory affects downstream apps, see our feature on AI Memory Systems: The Next Frontier for LLMs and Apps.

Agentic and tool-use capabilities: built for orchestration

Opus 4.5’s improvements deliberately target agentic use cases. Anthropic framed many of the upgrades around scenarios where Opus acts as a coordinator: issuing commands to sub-agents, invoking tools, and re-evaluating tasks as state evolves.

Key agentic strengths:

- Working memory that tracks sub-agent outputs, tool calls and critical checkpoints across workflows.

- Better interface handling for spreadsheets and developer tooling, which reduces brittle behavior when the model navigates structured files or external APIs.

- Product integrations (a browser extension and Excel-focused capabilities) that show agentic workflows running inside common productivity apps.

These enhancements reinforce a broader shift in the field from “single-prompt” assistants toward persistent, stateful agents that manage tasks across time — a theme we also analyzed in our coverage of next-generation agent capabilities in SIMA 2 and the limits of agent substitution in LLM Limitations Exposed.
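The working-memory idea can be made concrete as an append-only event ledger that a lead agent consults between steps. The sketch assumes three hypothetical event kinds (sub-agent output, tool call, checkpoint) and illustrative class names; it shows the orchestration pattern on the application side, not how Opus 4.5 tracks state internally.

```python
from dataclasses import dataclass, field
from typing import Any

EVENT_KINDS = {"subagent", "tool_call", "checkpoint"}  # Hypothetical event taxonomy.


@dataclass
class Event:
    kind: str
    source: str
    payload: Any


@dataclass
class WorkingMemory:
    events: list[Event] = field(default_factory=list)

    def record(self, kind: str, source: str, payload: Any) -> None:
        """Append one event; unknown kinds are rejected to keep the ledger consistent."""
        if kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        self.events.append(Event(kind, source, payload))

    def since_last_checkpoint(self) -> list[Event]:
        """Events after the most recent checkpoint: what the lead agent still has to reconcile."""
        for i in range(len(self.events) - 1, -1, -1):
            if self.events[i].kind == "checkpoint":
                return self.events[i + 1:]
        return list(self.events)

    def outputs_from(self, source: str) -> list[Any]:
        """All outputs reported by one sub-agent, in order."""
        return [e.payload for e in self.events if e.kind == "subagent" and e.source == source]
```

Keeping the ledger outside the model means a checkpoint survives even if the model’s own context is later compressed.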

How memory improvements change developer workflows

For engineering teams, the effect of better memory and agent orchestration can be immediate, and it should be measurable. Benefits include:

- Reduced context reloading: engineers spend less time re-feeding the model with previously discussed specs, designs or relevant files.

- Smoother multi-file edits: the model can reason across many files in a repository with fewer inconsistencies.

- Integrated tool chaining: Opus is better at sequencing API calls, running tests and carrying results forward into the next step.

- Persistent project memory: long-running projects retain continuity even as conversations span days or weeks.
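Tool chaining of this kind (edit, test, report) is usually driven by a loop in the surrounding application. The following is a minimal sketch assuming each tool is a plain Python function that reads a shared context dict and returns updates, with an optional `ok` flag; `run_chain` and the step names are hypothetical. The loop halts at the first failure so a later step never acts on a broken result.

```python
from typing import Callable

# A step is a (name, function) pair; the function reads the shared context
# and returns a dict of updates. An "ok": False entry marks failure.
Step = tuple[str, Callable[[dict], dict]]


def run_chain(steps: list[Step], context: dict) -> tuple[dict, list[str]]:
    """Run steps in order, merging each step's updates into the context.

    Stops at the first step whose updates contain ok=False.
    """
    log: list[str] = []
    for name, fn in steps:
        updates = fn(context)
        context = {**context, **updates}
        ok = updates.get("ok", True)
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            break
    return context, log
```

In an agentic setup the model would choose which step to run next; the fixed list here only isolates the sequencing and stop-on-failure logic.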

Product availability and integrations

Anthropic paired Opus 4.5’s model release with pragmatic product moves intended to accelerate adoption in office workflows. Highlights include phased availability for browser and spreadsheet integrations designed to showcase the model’s tool-handling strengths:

- A browser extension, Claude for Chrome, rolled out to premium (Max) users, bringing Opus capabilities into web workflows.

- Claude for Excel, an Excel integration made available to Max, Team and Enterprise tiers, to demonstrate spreadsheet automation, formula reasoning and multi-sheet analysis.

These product choices reflect a recurring industry pattern: models that combine high-quality reasoning with tight product integrations tend to deliver returns for customers sooner.

How Opus 4.5 stacks up against other frontier models

Opus 4.5 launches into a competitive landscape of frontier LLMs from multiple developers. While raw benchmark numbers matter, the factors most likely to distinguish models for enterprise adoption are:

- Memory fidelity and how well a model preserves task-relevant context over long sessions.

- Tool and integration reliability when connecting to spreadsheets, IDEs and web-based tools.

- Safety and governance controls around persistent memory and agent coordination.

Opus 4.5’s emphasis on memory quality and agent orchestration positions it as a compelling contender for teams prioritizing robust multi-step automation and code-oriented workflows.

What enterprises should evaluate before adopting Opus 4.5

Organizations considering Opus 4.5 should work through a short checklist to confirm the model fits their needs:

- Compliance and data handling: confirm how persistent memory interacts with sensitive data and audit trails.

- Toolchain compatibility: test integrations with internal CI/CD, ticketing systems and analytics tools.

- Operational cost vs. value: weigh measured gains in developer productivity and automation against inference cost.

- Failure modes: design fallbacks when compression or memory rehydration misses critical facts.
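One way to act on the last item is to check, before relying on a compressed summary, that the facts your workflow cannot afford to lose are still present, and to fall back to the uncompressed log when they are not. The sketch below rests on a strong simplifying assumption: it uses plain case-insensitive substring matching, which will miss paraphrases, and `required` is a hypothetical list your team would maintain per workflow.

```python
def missing_facts(summary: str, required: list[str]) -> list[str]:
    """Return the required facts (matched as substrings) that the summary dropped."""
    lowered = summary.lower()
    return [fact for fact in required if fact.lower() not in lowered]


def choose_context(summary: str, full_log: list[str], required: list[str]) -> tuple[str, list[str]]:
    """Fallback policy (assumption): if compression lost any critical fact,
    send the full log instead of the summary and report what was missing."""
    missing = missing_facts(summary, required)
    if missing:
        return "\n".join(full_log), missing
    return summary, []
```

Logging the returned `missing` list over time also gives a rough measure of how often compression drops facts that matter to you.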

Is Opus 4.5 ready for mission-critical agent workloads?

Short answer: it’s closer than prior releases, but teams should still architect for graceful degradation. Opus 4.5 materially improves the odds that an agentic system can maintain continuity across complex tasks, inspect large documents and coordinate multiple sub-agents. However, relying entirely on compressed memories without verification increases operational risk, so hybrid approaches that combine model memory with deterministic logs and checkpoints remain best practice.

Recommended architecture for early adopters

- Use Opus 4.5 for high-level orchestration and reasoning while persisting authoritative state in a separate datastore.

- Validate critical facts via lightweight verification agents before committing irreversible actions.

- Instrument observability for memory compression events so human operators can audit decisions when needed.
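The three recommendations fit together in a few dozen lines. This sketch assumes SQLite (from Python’s standard library) as the authoritative datastore and Python’s `logging` module for observability; the table layout, the function names and the idea of a "claimed" value coming from the model are hypothetical choices for illustration. An irreversible action runs only when the model’s claim matches the stored record, and every compression event is logged for later audit.

```python
import logging
import sqlite3
from typing import Callable

log = logging.getLogger("agent.memory")


def open_state(path: str = ":memory:") -> sqlite3.Connection:
    """Open the authoritative state store (in-memory by default for this sketch)."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS facts (key TEXT PRIMARY KEY, value TEXT NOT NULL)")
    return conn


def put_fact(conn: sqlite3.Connection, key: str, value: str) -> None:
    with conn:  # Commits on success, rolls back on error.
        conn.execute("INSERT OR REPLACE INTO facts (key, value) VALUES (?, ?)", (key, value))


def verify(conn: sqlite3.Connection, key: str, claimed: str) -> bool:
    """Lightweight verification: does the model's claim match the stored record?"""
    row = conn.execute("SELECT value FROM facts WHERE key = ?", (key,)).fetchone()
    return row is not None and row[0] == claimed


def commit_irreversible(conn: sqlite3.Connection, key: str, claimed: str,
                        action: Callable[[], None]) -> bool:
    """Run `action` only if the claimed fact is verified; otherwise log and refuse."""
    if not verify(conn, key, claimed):
        log.warning("blocked: model claimed %s=%r but datastore disagrees", key, claimed)
        return False
    action()
    return True


def record_compression(before_tokens: int, after_tokens: int, dropped: list[str]) -> None:
    """Emit an auditable record each time context is compressed."""
    log.info("compression: %d -> %d tokens, dropped=%s", before_tokens, after_tokens, dropped)
```

Call `logging.basicConfig(level=logging.INFO)` (or attach your own handler) so these records actually reach an operator.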

Key takeaways

- Opus 4.5 advances the practical use of LLMs by focusing on memory quality as much as raw context size.

- Strong gains on coding and tool-use benchmarks make Opus 4.5 particularly relevant for developer productivity workflows.

- Agentic features and product integrations demonstrate Anthropic’s strategy to move models from demos into persistent, task-oriented tools.

For readers tracking the memory and agent trends, our earlier analysis of long-term memory in LLMs is a helpful primer: AI Memory Systems: The Next Frontier for LLMs and Apps. For context on agent capabilities and where they are most useful today, see our coverage of next-gen agent frameworks in SIMA 2 and our investigation into agent limitations in LLM Limitations Exposed.

Next steps: how to evaluate Opus 4.5 for your team

Practical advice for technical leads and product managers:

- Run representative, end-to-end workflows that include tool calls and multi-file reasoning.

- Measure error recovery when the model compresses and rehydrates older context.

- Test the model as a coordinator in small multi-agent setups to identify brittle interactions early.

These evaluations will reveal whether memory improvements translate into measurable decreases in follow-up prompts, time-to-complete tasks and manual corrections.
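A simple way to report those three measures is to record them per task for a baseline run and an Opus 4.5 pilot, then compare averages. The sketch assumes you collect the numbers yourself; the `Run` fields and function names are hypothetical. A negative relative change means the pilot needed fewer follow-up prompts, less time or fewer corrections. With only a handful of runs, treat the result as directional rather than conclusive.

```python
from dataclasses import dataclass, fields
from statistics import mean


@dataclass
class Run:
    follow_up_prompts: int
    minutes_to_complete: float
    manual_corrections: int


def averages(runs: list[Run]) -> dict[str, float]:
    """Mean of each metric across runs."""
    return {f.name: mean(getattr(r, f.name) for r in runs) for f in fields(Run)}


def relative_change(baseline: list[Run], pilot: list[Run]) -> dict[str, float]:
    """(pilot - baseline) / baseline per metric; negative values are improvements."""
    base, new = averages(baseline), averages(pilot)
    return {k: (new[k] - base[k]) / base[k] if base[k] else float("nan") for k in base}
```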

Conclusion and call to action

Anthropic Opus 4.5 is a meaningful release: it reframes the conversation from purely larger context windows to smarter memory management and agent-ready behavior. For engineering teams focused on code, spreadsheets and long-running workflows, Opus 4.5 offers immediate advantages — provided teams pair the model with robust verification and observability patterns.

Want to stay ahead? Subscribe to Artificial Intel News for in-depth analysis, hands-on evaluation guides and early reads on frontier models. If you’re evaluating Opus 4.5 internally, run the checklist above, pilot agentic workflows with safety rails, and share results with the community so teams can refine best practices together.
