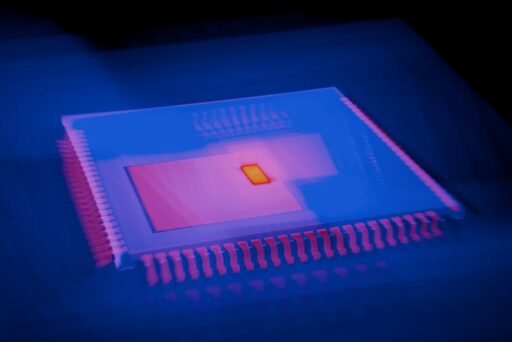

AI Chip Design: How Models Accelerate Semiconductor R&D

The semiconductor industry has long been defined by lengthy development cycles, complex verification pipelines, and staggering design costs. Advanced chips today can contain tens to hundreds of billions of transistors, pushing human engineers and traditional EDA (electronic design automation) tools to their limits. AI chip design, meaning the application of machine learning models to architecture exploration, synthesis, layout and verification, promises to change that dynamic by enabling faster iteration, lower cost and broader exploration of the design space.

What is AI chip design and how can it accelerate chip development?

AI chip design refers to a suite of techniques that use machine learning models (including transformers, graph neural networks and reinforcement learning agents) to augment or automate steps in semiconductor design. These systems can assist with:

- Architecture-level exploration and trade-off analysis

- Logic synthesis from RTL, plus placement and routing guidance

- Timing closure and power optimization

- Verification, test-case generation and bug triage

By automating repetitive tasks and surfacing optimized configurations, AI-driven workflows can compress design iteration loops—often the most time-consuming phase of chip development—so teams can try more alternatives within the same calendar window.

Why the timing is right for AI-assisted semiconductor design

Several trends converge to make AI chip design practical now:

- Growth in model capacity and available compute makes it feasible to train specialized models on design artifacts and simulation outputs.

- Open architectures like RISC-V provide shareable design substrates that accelerate experimentation and dataset creation.

- Cloud and on-prem compute improvements permit secure, partner-hosted training that protects IP while enabling model specialization.

Startups and incumbents alike are investing in systems that bring software-style productivity gains to hardware teams. Some companies claim significant reductions in cost and development time by combining proprietary datasets, synthetic data generation, and secure on-prem training pipelines.

How domain-specific models differ from general-purpose LLMs

General-purpose large language models excel at text and code generation but lack domain knowledge of VLSI constraints, layout rules, and SPICE-level behavior. Effective AI chip design requires models trained or fine-tuned on:

- Schematic and netlist representations

- Layout and GDSII-like formats

- Timing, power and thermal simulation traces

- Place-and-route histories and verification logs

Building these datasets is challenging because semiconductor IP is closely guarded. Successful teams combine licensed datasets from partners, synthetic data generation, and secure systems that allow chipmakers to train on their proprietary data without exposing it publicly.
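To make the "netlist representation" item concrete, here is a minimal sketch of how a gate-level netlist might be turned into graph data a model could consume. The toy netlist format, the cell names and the `build_graph` helper are all assumptions made for illustration; a real flow would parse structural Verilog or a tool-specific format and attach far richer features.

```python
from collections import defaultdict

# Hypothetical toy netlist: (instance name, cell type, output net, input nets).
# This format is invented for the example, not taken from any EDA tool.
NETLIST = [
    ("u1", "NAND2", "n1", ["a", "b"]),
    ("u2", "INV", "n2", ["n1"]),
    ("u3", "NOR2", "y", ["n1", "n2"]),
]

def build_graph(netlist):
    """Return driver -> load edges between cell instances and per-cell fan-out."""
    # Map each net to the instance that drives it.
    driver_of = {out: name for name, _, out, _ in netlist}
    edges = []
    fanout = defaultdict(int)
    for name, _, _, inputs in netlist:
        for net in inputs:
            driver = driver_of.get(net)  # None for primary inputs such as "a"
            if driver is not None:
                edges.append((driver, name))
                fanout[driver] += 1
    return edges, dict(fanout)

edges, fanout = build_graph(NETLIST)
print(edges)   # directed connectivity between instances
print(fanout)  # a simple per-node feature
```

The edge list and fan-out counts are the kind of connectivity and node features a graph-based model would start from; timing, power and placement data would be joined onto the same nodes.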

How do AI models actually assist designers?

AI systems can be embedded at multiple points in the design flow:

1. Architecture exploration and tradeoff analysis

Models can generate and rank candidate microarchitectures based on objectives like area, power, and performance, enabling rapid evaluation of hundreds of variants before committing to layout.

2. RTL and logic synthesis guidance

Machine learning can suggest RTL refactors, macro choices, or pipeline adjustments that improve timing or utilization without manual trial-and-error.

3. Placement and routing assistance

Graph-based models and optimization agents can recommend placement strategies and routing adjustments that reduce congestion and improve timing closure.

4. Verification, bug triage and testcase generation

AI can prioritize failing scenarios, propose root causes, and generate test vectors to accelerate verification cycles, reducing the costly back-and-forth between design engineers and verification teams.
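The candidate ranking described under architecture exploration above can be sketched as a Pareto filter over area, power and performance. This is one common way to rank multi-objective candidates, not necessarily what any particular vendor does; the `Candidate` fields and all numbers below are made up for illustration.

```python
from typing import NamedTuple

class Candidate(NamedTuple):
    name: str
    area_mm2: float    # lower is better
    power_w: float     # lower is better
    perf_score: float  # higher is better

def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = (a.area_mm2 <= b.area_mm2 and a.power_w <= b.power_w
                and a.perf_score >= b.perf_score)
    better = (a.area_mm2 < b.area_mm2 or a.power_w < b.power_w
              or a.perf_score > b.perf_score)
    return no_worse and better

def pareto_front(candidates):
    """Keep only candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c) for other in candidates)]

# Hypothetical estimates, e.g. from a learned predictor or a fast model.
CANDIDATES = [
    Candidate("wide_ooo", 4.0, 3.0, 9.0),
    Candidate("narrow_inorder", 1.5, 0.8, 4.0),
    Candidate("mid_ooo", 2.5, 1.6, 7.0),
    Candidate("bloated", 4.5, 3.2, 8.5),  # worse than wide_ooo on all three
]
front = pareto_front(CANDIDATES)
print([c.name for c in front])
```

Only the non-dominated variants move on to detailed evaluation; engineers then choose among them according to the product's priorities.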

What are the main technical approaches?

Teams building AI for chip design typically combine several model classes and techniques:

- Transformer-based sequence models for code-like artifacts (RTL, scripts)

- Graph neural networks for netlist and connectivity reasoning

- Reinforcement learning for placement and routing policies

- Differentiable surrogate models and learned performance predictors to speed simulations

Those approaches are not mutually exclusive—practical systems orchestrate them, using one model to propose candidates and another to predict performance, with human engineers in the loop for validation.
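A minimal sketch of that propose-then-predict loop follows, under heavy simplifying assumptions: the "proposer" is plain random sampling, the "surrogate" is a nearest-neighbor lookup over past results, and `simulate` is a made-up cost function standing in for an expensive simulation. The design knobs (`depth`, `width`) and all parameter values are hypothetical.

```python
import random

def simulate(depth, width):
    """Stand-in for an expensive simulation; returns a made-up cost (lower is better)."""
    return (depth - 7) ** 2 + 2 * (width - 4) ** 2

def predict(point, history):
    """Toy surrogate: reuse the cost of the nearest already-simulated design point."""
    nearest = min(
        history,
        key=lambda h: (h[0][0] - point[0]) ** 2 + (h[0][1] - point[1]) ** 2,
    )
    return nearest[1]

def explore(rounds=5, proposals=20, budget=3, seed=0):
    rng = random.Random(seed)
    start = (1, 1)
    history = [(start, simulate(*start))]  # (design point, simulated cost)
    for _ in range(rounds):
        seen = {p for p, _ in history}
        # Proposer: sample candidate design points not yet simulated.
        pool = {(rng.randint(1, 12), rng.randint(1, 8)) for _ in range(proposals)} - seen
        # Predictor: rank candidates by surrogate cost, ties broken by point.
        ranked = sorted(pool, key=lambda p: (predict(p, history), p))
        # Spend the limited simulation budget on the most promising candidates.
        for point in ranked[:budget]:
            history.append((point, simulate(*point)))
    return min(history, key=lambda h: h[1]), len(history)

(best_point, best_cost), n_sims = explore()
print(best_point, best_cost, n_sims)
```

The point of the structure is that the expensive step runs at most `budget` times per round, while the cheap predictor screens every proposal. In a real flow the final candidates would still go to engineers for review.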

Can AI actually reduce cost and time at scale?

Companies in this space report multi-fold improvements in both cost and development time. Claimed benefits often include:

- Broader design exploration: enabling dozens or hundreds of viable alternatives early in the process

- Faster verification cycles through automated testcase generation and prioritization

- Lower engineering hours spent on repetitive layout and tuning tasks

For chip programs that historically take 3–5 years, cutting the design and verification phases by more than half can translate to major competitive advantages and material cost savings—especially when market windows shift quickly.

What challenges remain for AI in chip design?

Significant technical, business and safety challenges must be addressed before AI becomes a standard part of every EDA stack:

- Data availability and IP protection: training useful models requires access to many design examples and simulation outputs, which are often proprietary.

- Integration with EDA toolchains: AI recommendations must interoperate with industry-standard tools and flows.

- Physical reality gap: models that predict performance must be validated against silicon, where manufacturing variation and analog effects matter.

- Verification burden: AI-generated designs still need rigorous verification to meet safety, security and correctness standards.

Real-world experiments and early validation

Early pilots have shown promise. For example, academic hackathons and student projects using open-source architectures like RISC-V demonstrate how models can speed up CPU microarchitecture exploration and early RTL work. These controlled environments provide useful training and validation data without exposing commercial IP.

Commercial teams have also explored secure collaborations with chipmakers to fine-tune models on proprietary datasets on-site or within private enclaves. Those approaches aim to balance model effectiveness with the legal and business need to protect design IP.

Who are the competitors and collaborators?

The AI chip design market brings together established EDA vendors, cloud and hardware incumbents, and a growing set of startups. Incumbent EDA companies remain central because of their deep integrations with manufacturing flows and verification tools. At the same time, new entrants are building specialized stacks that integrate ML models directly into design workflows and partner with foundries and IP licensors.

Investors are responding: venture capital flows into AI infrastructure and semiconductor automation have accelerated, reflecting the growing recognition that productivity gains in hardware design can unleash new classes of products and cost savings.

What does this mean for engineers and organizations?

AI tools are unlikely to replace experienced chip designers anytime soon. Instead, they will change the nature of the work by automating repetitive tasks, flagging risky design choices earlier, and enabling engineers to focus on higher-level architecture and system trade-offs.

Practical adoption paths include:

- Using domain-specific models to augment verification workflows

- Embedding learned predictors into early-stage architecture exploration

- Securing pilot projects that validate model recommendations against silicon or detailed simulation

How vendors and startups are addressing data and security

Because chip design data is sensitive, leading teams invest in secure training pipelines and partner agreements that allow model fine-tuning without exposing IP. Techniques include federated learning, secure enclaves, and strict access controls for datasets and model weights. Synthetic data generation—creating realistic but non-sensitive examples—also plays a role when access to proprietary artifacts is limited.
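As a rough illustration of the federated learning idea, the sketch below trains a tiny linear model across two sites that never share raw data, only updated parameters. It assumes a FedAvg-style scheme with one local gradient step per round; the datasets, learning rate and function names are invented for the example, and a production system would add secure aggregation and access controls on top.

```python
def local_update(weights, data, lr=0.1):
    """One gradient step for y = w*x + b on a site's private data (mean squared error)."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (w - lr * grad_w, b - lr * grad_b)

def federated_average(updates, sizes):
    """Average site parameters, weighting each site by its sample count."""
    total = sum(sizes)
    return tuple(
        sum(u[i] * s for u, s in zip(updates, sizes)) / total
        for i in range(len(updates[0]))
    )

def train(sites, rounds=200):
    weights = (0.0, 0.0)
    for _ in range(rounds):
        # Each site computes its update locally; only parameters are sent back.
        updates = [local_update(weights, data) for data in sites]
        weights = federated_average(updates, [len(d) for d in sites])
    return weights

# Hypothetical private datasets at two sites, both generated from y = 2x + 1.
SITES = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]
w, b = train(SITES)
print(round(w, 3), round(b, 3))
```

The coordinator in `train` only ever sees `(w, b)` pairs, which is the property that makes the approach attractive when the underlying design data cannot leave a partner's environment.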

What should executives consider before investing in AI design tools?

Executives evaluating AI chip design investments should consider:

- Integration risk: how easily the tool will slot into existing EDA flows and compute infrastructure

- Data strategy: whether you can provide the training data needed or benefit from synthetic/licensed datasets

- Verification and qualification: the plan to validate model outputs against silicon or high-fidelity simulation

- Vendor roadmap and partnerships: ecosystem support from foundries, IP vendors, and EDA players

Companies that combine secure data access, model specialization, and tight EDA integration are most likely to deliver measurable ROI in the near term.

Further reading and related coverage

For readers interested in adjacent infrastructure and model trends that impact hardware development, see our coverage of advances in AI inference hardware and cloud stacks that enable large-scale model training and deployment. For example, discussions of AI inference chips and infrastructure spending illuminate where compute capacity and investment are converging to support these new design tools. Learn more about related industry developments in these posts:

- Rebellions Raises $400M for AI Inference Chips Growth — on investments in inference silicon

- On-Device AI Models: Edge AI for Private, Low-Cost Compute — on edge deployment and private models

- Forge Custom Enterprise AI Models: Train on Your Data — on secure, domain-specific model training

Next steps: how teams can pilot AI chip design today

If your organization is ready to experiment, a pragmatic pilot approach is:

- Identify a bounded design problem with measurable objectives (timing closure, power reduction or area savings).

- Secure necessary datasets — use open-source designs, synthetic data, or partner agreements for private data access.

- Run a short, time-boxed evaluation that measures model recommendations against baseline workflows.

- Validate outputs with high-fidelity simulation or a silicon shmoo plot (a pass/fail sweep across operating conditions such as voltage and frequency), and iterate on integration into EDA flows.
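The comparison step in such a pilot can be as simple as the sketch below, which scores a model-assisted flow against the baseline on timing and engineering effort. The metric choices (worst negative slack and engineer-hours), the block names and every number are hypothetical placeholders; a real pilot would substitute whatever objectives were agreed up front.

```python
from statistics import mean

# Hypothetical per-block results. WNS = worst negative slack in ns (closer to 0 is better).
RESULTS = [
    {"block": "alu", "base_wns": -0.20, "ai_wns": -0.05, "base_hours": 40, "ai_hours": 28},
    {"block": "lsu", "base_wns": -0.10, "ai_wns": -0.12, "base_hours": 30, "ai_hours": 24},
    {"block": "fpu", "base_wns": -0.30, "ai_wns": -0.10, "base_hours": 50, "ai_hours": 38},
]

def summarize(results):
    """Compare the model-assisted flow with the baseline across all pilot blocks."""
    wns_gain = mean(r["ai_wns"] - r["base_wns"] for r in results)
    base_hours = sum(r["base_hours"] for r in results)
    ai_hours = sum(r["ai_hours"] for r in results)
    # Blocks where the model-assisted result is worse than baseline need a closer look.
    regressions = [r["block"] for r in results if r["ai_wns"] < r["base_wns"]]
    return {
        "mean_wns_gain_ns": wns_gain,
        "hours_saved_frac": 1 - ai_hours / base_hours,
        "regressions": regressions,
    }

summary = summarize(RESULTS)
print(summary)
```

Reporting regressions alongside the averages keeps the evaluation honest: a good mean improvement can hide individual blocks where the recommendations made things worse.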

Small, well-scoped pilots lower risk and accelerate learning. Over time, successful pilots can be expanded into tighter integrations that permanently shorten design cycles and reduce engineering costs.

Conclusion: a measured revolution

AI chip design is not a magic bullet, but it represents a meaningful shift in how semiconductor teams explore and validate complex designs. By combining domain-specific models, secure training approaches, and close integration with existing EDA flows, AI can reduce repetitive work, surface better trade-offs, and accelerate time-to-market. For engineers and executives alike, the opportunity is to adopt pilot programs that prove the value of AI on real design problems while safeguarding IP and verification rigor.

Call to action

Curious how AI chip design could accelerate your roadmap? Subscribe to Artificial Intel News for ongoing coverage, practical guides, and case studies that help hardware teams pilot and scale AI-assisted design. Read our latest analysis and sign up for updates to stay ahead of the curve.