Meta Compute: Scaling AI Infrastructure for the Future

Meta has announced Meta Compute, a comprehensive effort to expand the company’s AI infrastructure, datacenter footprint, and energy capacity. The program signals Meta’s commitment to building in-house compute and coordination capabilities at scale, with stated plans to add tens of gigawatts of electrical capacity this decade and potentially hundreds of gigawatts over time. The announcement underscores a broader shift: AI product quality now depends as much on underlying infrastructure as on models and algorithms.

What is Meta Compute and why does it matter?

Meta Compute is Meta’s strategic program to design, build, and operate a global, AI-first computing platform. It bundles investments in physical datacenters, power generation and procurement, silicon strategy, software stacks, and developer productivity into a single coordinated initiative. The goal is to create durable competitive advantages for training and serving increasingly large generative AI models and to support AI-powered features across Meta’s family of apps and services.

Key pillars of the initiative

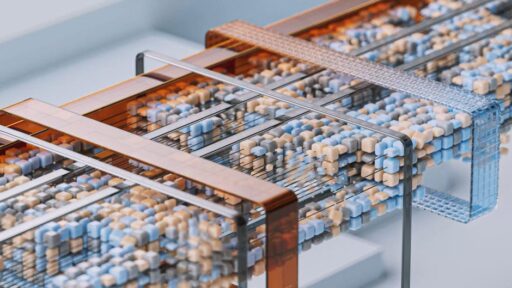

- Physical infrastructure: Expanding datacenter capacity and networks to host racks of accelerators and storage.

- Energy strategy: Sourcing or building large-scale power solutions to meet skyrocketing compute demand.

- Silicon and hardware: Coordinating silicon acquisition and co-design programs to optimize cost and performance.

- Software and orchestration: Developing the stack that schedules, trains, and serves models efficiently.

- Supply chain and partnerships: Building supplier relationships and financing models for rapid scale-up.

These pillars reflect an understanding that building leading AI capabilities is not only a software challenge but an integrated hardware, power, and systems engineering effort.

Who’s leading Meta Compute and what will they do?

Meta has appointed senior executives to lead the initiative. The leadership structure spans technical architecture, capacity planning, supplier partnerships, and government relations:

- Santosh Janardhan: Responsible for technical architecture, the software stack, a silicon program, and global datacenter operations. This role ties together engineering execution with the physical fleet that will host Meta’s AI workloads.

- Daniel Gross: Leading long-term capacity strategy, supplier partnerships, industry analysis, planning, and business modeling. This position focuses on forecasting demand and organizing the commercial relationships that underpin scale.

- Dina Powell McCormick: Focused on government engagement, public financing, and cross-sector partnerships to enable large-scale deployment and investment.

Together, these roles combine engineering, strategy, and policy expertise intended to move the program from concept to global scale.

How big is the energy challenge?

Meta has signaled plans to expand its energy footprint by multiple tens of gigawatts this decade. To put that in perspective, a gigawatt is one billion watts, roughly the output of a single large nuclear reactor. Scaling compute to support next-generation AI models will drive substantial increases in electricity demand for running GPUs, specialized accelerators, cooling systems, and supporting infrastructure.
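To make "tens of gigawatts" more concrete, the snippet below converts sustained power capacity into energy consumed over a year. It is a back-of-the-envelope sketch: the 10 GW figure is an illustrative stand-in for the low end of "tens of gigawatts", and the utilization factor is a hypothetical assumption, not a figure Meta has published.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours (ignores leap years)


def annual_energy_twh(capacity_gw: float, utilization: float = 1.0) -> float:
    """Convert sustained capacity (GW) into annual energy (TWh).

    `utilization` is a hypothetical fraction of the year the capacity is
    drawn at full power (1.0 means running flat out all year).
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    gwh = capacity_gw * HOURS_PER_YEAR * utilization  # GW x hours = GWh
    return gwh / 1000.0  # 1 TWh = 1,000 GWh


if __name__ == "__main__":
    # Illustrative only: 10 GW as a stand-in for "tens of gigawatts".
    for util in (1.0, 0.8):
        print(f"10 GW at {util:.0%} utilization: "
              f"{annual_energy_twh(10, util):.1f} TWh/year")
```

Running flat out, 10 GW works out to about 87.6 TWh per year. The relationship is linear, so each additional 10 GW of sustained draw adds roughly the same amount again.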

Key energy implications include:

- Sourcing clean energy: Meeting sustainability commitments while securing reliable power will require on-site generation, long-term power purchase agreements, and grid investments.

- Grid impact: Rapid additions of tens of gigawatts raise questions about transmission, local grid upgrades, permitting, and community impacts.

- Cost management: Energy is a major operating expense for large-scale compute; optimizing for cost and carbon will be a core engineering task.
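The cost-and-carbon trade-off can be sketched with a simple model. The example below uses power usage effectiveness (PUE), a standard industry ratio of total facility power to IT equipment power, to capture cooling and other overhead. Every input (IT load, PUE values, electricity price, grid carbon intensity) is a hypothetical assumption chosen for illustration, not a Meta figure.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class SiteAssumptions:
    """Hypothetical inputs for a single datacenter site (not Meta figures)."""
    it_load_mw: float           # average power drawn by servers and accelerators
    pue: float                  # facility power / IT power (1.0 = zero overhead)
    price_usd_per_mwh: float    # blended electricity price
    grid_kg_co2_per_mwh: float  # carbon intensity of the supplying grid


def annual_cost_and_carbon(site: SiteAssumptions) -> tuple[float, float]:
    """Return (annual electricity cost in USD, annual emissions in tonnes CO2)."""
    if site.pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    # Total facility energy = IT energy scaled up by cooling/power overhead.
    facility_mwh = site.it_load_mw * site.pue * HOURS_PER_YEAR
    cost_usd = facility_mwh * site.price_usd_per_mwh
    tonnes_co2 = facility_mwh * site.grid_kg_co2_per_mwh / 1000.0  # kg -> t
    return cost_usd, tonnes_co2


if __name__ == "__main__":
    baseline = SiteAssumptions(100, pue=1.4, price_usd_per_mwh=60, grid_kg_co2_per_mwh=400)
    improved = SiteAssumptions(100, pue=1.1, price_usd_per_mwh=60, grid_kg_co2_per_mwh=400)
    for name, site in (("baseline", baseline), ("improved cooling", improved)):
        cost, co2 = annual_cost_and_carbon(site)
        print(f"{name}: ${cost / 1e6:.1f}M per year, {co2:,.0f} t CO2")
```

With the same IT load and prices, lowering PUE from 1.4 to 1.1 in this hypothetical cuts facility energy, cost, and emissions by about 21%, which is why cooling efficiency and energy sourcing show up as engineering problems rather than pure procurement decisions.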

These dimensions make energy strategy a first-order business and policy priority for any company operating at hyperscale.

How does Meta Compute fit into the industry landscape?

There’s an industry-wide scramble to build generative AI-ready cloud and on-premises environments. Hyperscalers, cloud providers, and chipmakers are all vying to offer the best combination of scale, cost, and performance. Meta’s approach is notable because it doubles down on building a vertically integrated platform to support both internal product innovation and operational advantage.

For broader context on infrastructure spending and sustainability debates, see our coverage in Is AI Infrastructure Spending a Sustainable Boom? To understand where industry trends are heading next year and beyond, read our analysis of the major AI trends shaping 2026: AI Trends 2026: From Scaling to Practical Deployments.

Competitive implications

Vertical integration can lower unit costs for training and inference, but it also requires significant upfront capital and long-lead engineering work. Meta’s move is intended to create durable cost and performance advantages that support differentiated product experiences, especially for compute-intensive features such as multimodal models, real-time inference across billions of users, and large-scale personalization.

What technical challenges will Meta face?

Scaling an AI platform to this magnitude involves complex technical trade-offs:

- Software orchestration: Scheduling thousands of distributed training jobs with efficient resource utilization and fault tolerance.

- Data locality and bandwidth: Ensuring models can access massive datasets with low latency and high throughput.

- Silicon procurement and co-design: Managing supply chains for accelerators and exploring custom silicon options.

- Cooling and thermal management: Designing systems to keep high-density racks operating within safe temperature envelopes.

- Operational scale: Running, maintaining, and upgrading a global fleet of datacenters while minimizing downtime and cost.
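Software orchestration is the most code-shaped item on this list. The sketch below illustrates one widely used idea, all-or-nothing ("gang") placement of distributed training jobs onto accelerator nodes, with a simple greedy heuristic. The `Node` and `Job` types, the largest-job-first ordering, and the rule of filling the nodes with the most free accelerators first are illustrative assumptions, not a description of Meta’s scheduler.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_gpus: int  # accelerators currently unallocated on this node


@dataclass
class Job:
    name: str
    gpus: int  # accelerators the job needs, all at the same time
    placement: dict[str, int] = field(default_factory=dict)  # node -> GPUs


def gang_schedule(jobs: list[Job], nodes: list[Node]) -> list[Job]:
    """Greedy all-or-nothing placement; returns the jobs left waiting.

    Jobs are considered largest-first. A job starts only if every
    accelerator it requests is free right now (gang scheduling). It is
    packed onto the nodes with the most free GPUs first, so it tends to
    span as few nodes as possible.
    """
    waiting: list[Job] = []
    for job in sorted(jobs, key=lambda j: j.gpus, reverse=True):
        if sum(n.free_gpus for n in nodes) < job.gpus:
            waiting.append(job)  # partial allocations would waste capacity
            continue
        remaining = job.gpus
        for node in sorted(nodes, key=lambda n: n.free_gpus, reverse=True):
            if remaining == 0:
                break
            take = min(node.free_gpus, remaining)
            if take:
                node.free_gpus -= take
                job.placement[node.name] = take
                remaining -= take
    return waiting


if __name__ == "__main__":
    cluster = [Node("a", 8), Node("b", 8), Node("c", 4)]
    queue = [Job("j2", 6), Job("j1", 12), Job("j3", 4)]
    left = gang_schedule(queue, cluster)
    for job in queue:
        print(job.name, job.placement or "waiting")
    print("waiting:", [j.name for j in left])
```

In this toy cluster, the 12-GPU job lands on nodes `a` and `b`, the 6-GPU job fills the remainder of `b` plus part of `c`, and the 4-GPU job waits because only 2 GPUs remain. A production scheduler would also weigh network topology, priorities, preemption, and recovery from node failures, which is where the fault-tolerance requirement above comes in.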

Each of these areas requires both deep technical expertise and tight cross-functional coordination across engineering, facilities, procurement, and finance.

What are the policy and community considerations?

Large-scale infrastructure projects intersect with local permitting, labor markets, and energy policy. Meta will need to engage with governments and communities to address grid impacts, land use, environmental review, and workforce development. These issues are why the initiative includes senior leadership focused on government relations and financing: new datacenters and energy projects often require public-private coordination to proceed at speed and scale.

Expect regulatory scrutiny on two fronts:

- Energy and environmental regulation: How new power demands affect emissions targets, renewable procurement, and grid stability.

- Competitive and data policy: Debates about vertical integration, market power, and whether control of massive compute capacity creates competitive barriers.

How will Meta Compute change product development?

Access to abundant, optimized compute can accelerate product cycles. Teams can iterate faster, train larger and more capable models, and deploy features that require low-latency inference at scale. The knock-on effects include:

- Faster prototyping of multimodal and personalized experiences.

- Lower marginal cost for inference-driven features.

- Potential for novel services that combine compute and data in new product tiers.

However, democratizing advanced features across billions of users while controlling costs will require engineering innovations in model efficiency, distillation, and adaptive serving techniques.

What are the risks and potential downsides?

Meta’s initiative is strategic but not without risk. Key challenges include:

- Capital intensity: Building datacenters and power capacity requires enormous upfront investment and long payback horizons.

- Energy dependency: Over-reliance on grid availability or volatile energy markets could increase operating risk.

- Talent and execution: Scaling operations and coordinating across hardware, software, and facilities is operationally demanding.

- Regulatory pushback: Local communities and regulators may slow permitting or impose conditions that raise costs or delay timelines.

Mitigating these risks will require disciplined project management, diversified energy strategies, and proactive stakeholder engagement.

How should competitors and partners respond?

For cloud providers, chipmakers, and enterprise customers, Meta’s announcement reinforces the need to accelerate their own plans for scalable compute, efficient architectures, and energy resilience. Partnerships on silicon, power procurement, and datacenter efficiency will become more strategic as companies seek to manage costs and performance trade-offs.

Organizations evaluating long-term AI strategies should consider:

- Investing in model efficiency and hybrid deployment patterns (cloud + edge).

- Pursuing partnerships for shared infrastructure and power agreements.

- Building teams with cross-disciplinary skills in hardware, software, and facilities engineering.

Frequently asked: What does Meta’s energy pledge mean for local grids?

Meta’s pledge to add tens of gigawatts could require significant grid upgrades, new transmission capacity, and coordinated planning with utilities. A realistic rollout will likely combine on-site generation, long-term renewable contracts, and investments in grid modernization. Localities hosting new capacity may see economic benefits, but also face increased demands on infrastructure and regulatory oversight.

Conclusion: Meta Compute is an infrastructure bet on the future of AI

Meta Compute represents a clear strategic bet: quality AI experiences increasingly depend on end-to-end control of compute, energy, and operational systems. If executed well, the initiative could yield cost advantages, faster product cycles, and differentiated capabilities. If execution falters, Meta faces capital, regulatory, and operational risks.

For readers tracking the broader market, this announcement is another signal that the next phase of AI competition will be fought as much in datacenters and on power grids as in model research labs. For deeper reads on related industry shifts, check our pieces on AI Trends 2026 and infrastructure sustainability in Is AI Infrastructure Spending a Sustainable Boom?

Key takeaways

- Meta Compute is a coordinated program to scale datacenters, power, silicon, and software for AI.

- The company plans to expand energy capacity by tens of gigawatts this decade, raising sustainability and grid questions.

- Success requires tight integration across technology, supply chains, and public policy.

Want to stay ahead of how infrastructure shapes AI product roadmaps? Subscribe to Artificial Intel News for ongoing analysis and updates on the companies building the compute backbone of tomorrow’s AI.